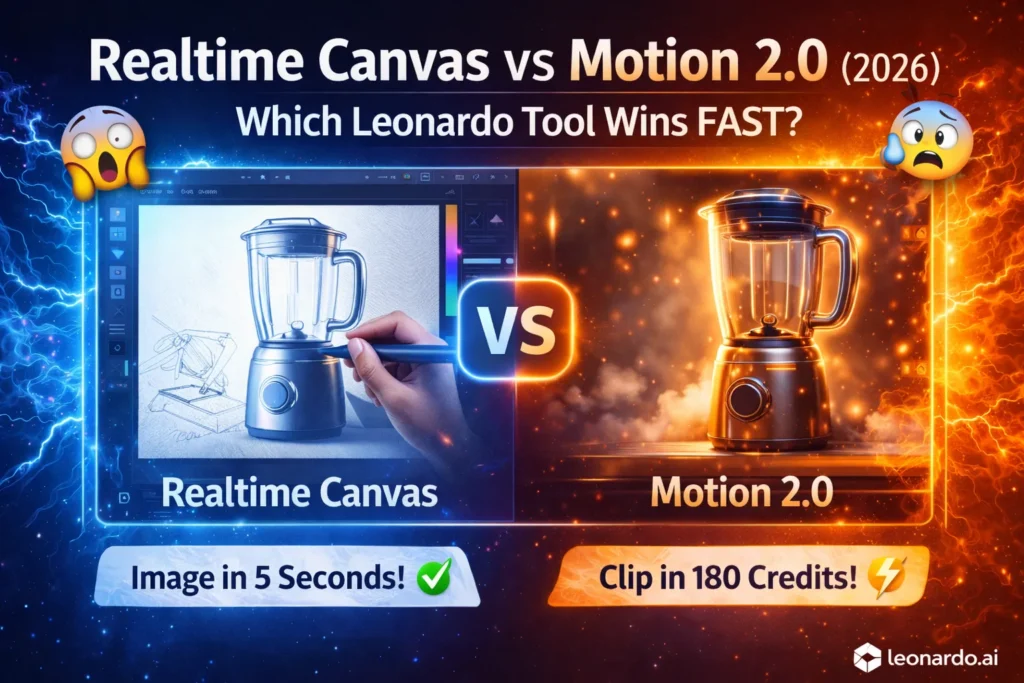

Realtime Canvas vs Motion 2.0 — The Hidden AI Workflow Most Creators Miss

Tired of wasting hours and credits on the wrong creative tool? This guide covers which tool to choose, step-by-step workflows, pricing signals, and EU compliance tips to save time and avoid legal headaches. Short examples, prompts, and a quick decision checklist are included for faster approvals. I’ll be blunt: when I first tried both tools in 2025, I expected them to be interchangeable (“one does images, the other does video”), but that’s not the whole story.

Both tools sit inside the broader creative stack of Leonardo.ai, but they solve different problems with different technical trade-offs and workflow affordances. Over many tests (sketching product silhouettes, animating catalog images for social, pushing Motion 2.0’s frame interpolation, and iterating with Realtime Canvas’s inpaint brushes) I learned a few practical rules that save time and reduce client risk. I’ll share those in detail, along with the technical intuition (NLP and generative modeling vocabulary where it helps), real prompts that worked, and a clear “who should use which” verdict for European creators.

What Is the Real Difference Between Realtime Canvas and Motion 2.0?

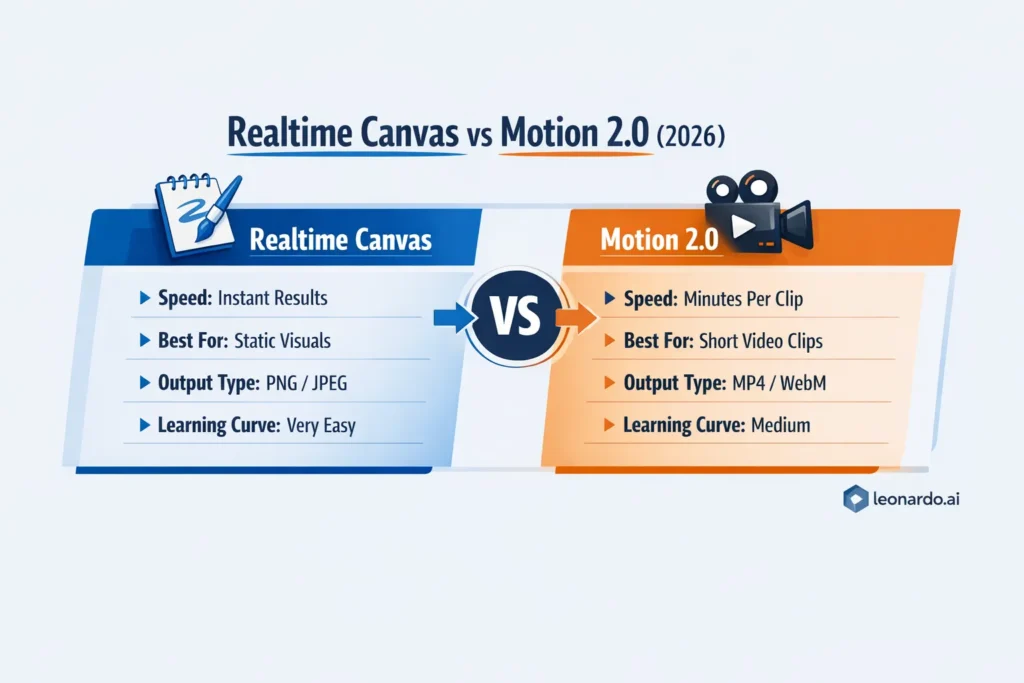

Realtime Canvas is your idea-to-artboard, low-friction sketch-to-image tool for fast prototyping and static outputs; Motion 2.0 is a short, high-cohesion text/image-to-video engine for punchy social clips. Combine them for the best results: design with Realtime Canvas, animate the winners with Motion 2.0. (More on tradeoffs and EU-specific legal cautions below.)

What these tools actually are

Realtime Canvas (what it feels like): You draw a crude silhouette or brushstroke, and the model fills in realistic textures, lighting, and detail in near real-time. Under the hood, it’s essentially a conditional image model that reacts to streaming user input (sketch strokes, masks, color fills) and uses on-the-fly conditioning and inpainting to update a latent representation of the canvas as you draw. The official help docs and product notes describe this near-instant transform as a core feature.

Motion 2.0 (what it does): Motion 2.0 is Leonardo.ai’s text-to-video (and image-to-video) generation model. It outputs short clips, typically a few seconds long, with camera moves, object motion, and frame interpolation for temporal smoothness. It exposes motion controls, aspect ratio options, and interpolation toggles so you can tune temporal coherence and the “camera” behavior. The Replicate and Leonardo docs outline 5-second defaults, multiple aspect ratios, and fast/standard variants.

Quick technical glossary (so the rest reads faster):

- Latent space / latent representation: compact, compressed features the model manipulates instead of raw pixels.

- Diffusion/denoising steps: iterative process where noise is removed to reveal an image; sampling steps control fidelity vs speed.

- Conditional generation / prompt conditioning: how user text, masks, or sketches steer model outputs.

- Frame interpolation / temporal coherence: algorithms that synthesize intermediate frames for smooth motion (used by Motion 2.0).

- Guidance scale / classifier-free guidance: a knob that balances following the prompt strictly against giving the model more creative freedom.

- Inpaint / mask-based editing: replace pixels inside a mask while holding surrounding content steady.
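To make the guidance-scale entry concrete, here is a minimal sketch of the standard classifier-free guidance combination as it is usually described for diffusion models. I have no visibility into how Leonardo wires this internally, so treat the function name and the toy numbers as my own illustration, not as the product's implementation.

```python
def apply_guidance(uncond, cond, scale):
    """Classifier-free guidance: start from the unconditional prediction
    and move toward the prompt-conditioned one, scaled by `scale`."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


# Toy noise predictions for three latent values (made-up numbers).
uncond = [0.0, 1.0, 2.0]   # model output with an empty prompt
cond = [1.0, 1.0, 0.0]     # model output with the real prompt

print(apply_guidance(uncond, cond, 0.0))  # ignores the prompt entirely
print(apply_guidance(uncond, cond, 1.0))  # plain conditional prediction
print(apply_guidance(uncond, cond, 2.0))  # pushes past it, toward the prompt
```

A scale above 1 exaggerates whatever the prompt changes, which is why high guidance tends to look more literal and less varied.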

Why this comparison matters for European creators

European creatives face unique demands in 2026: high standards for GDPR-style compliance, strict commercial usage expectations, local VAT and invoices, and widely varying format/presentation norms across channels (9:16 for Reels, 1:1 for Instagram feeds, widescreen for product pages). Choosing the wrong tool can mean wasted credits, legal headaches, or deliverables that don’t match platform norms. The platform’s official pricing and API documentation are worth checking before production runs.
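As a quick planning aid for those channel formats, this small helper turns an aspect ratio into pixel dimensions. The 1280-pixel long edge and the rounding to a multiple of 8 are my assumptions (many generative models prefer such sizes); the sizes a given tool actually accepts should come from its own docs.

```python
def frame_size(ratio_w, ratio_h, long_edge=1280, multiple=8):
    """Return (width, height) for an aspect ratio. The longer side equals
    long_edge; the shorter side is rounded to the nearest `multiple`."""
    if ratio_w >= ratio_h:
        width = long_edge
        height = round(long_edge * ratio_h / ratio_w / multiple) * multiple
    else:
        height = long_edge
        width = round(long_edge * ratio_w / ratio_h / multiple) * multiple
    return width, height


for name, (w, h) in {"Reels 9:16": (9, 16),
                     "Feed 1:1": (1, 1),
                     "Widescreen 16:9": (16, 9)}.items():
    print(name, frame_size(w, h))
```

Deciding these sizes before generating anything makes it easier to compose the sketch for the tightest crop you will need.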

Feature snapshot

To avoid a boring feature grid, here’s a short narrative contrast:

- Realtime Canvas: rapid, iterative, tactile. You sketch; the model updates. It’s ideal for quickly iterating product silhouettes, UI mockups, or creative concept art. The tool emphasizes interactivity: refinements are on-canvas and reactive.

- Motion 2.0: generative temporal design. You provide a prompt or static image, and Motion 2.0 predicts how that scene should evolve over short time spans. Emphasis is on temporal consistency and style continuity across frames, not on long-form editing or timeline control. It produces short clips (often ~5s), and the API/docs explain options for resolution and frame interpolation.

How they differ technically

- Conditioning mechanism

  - Realtime Canvas uses interactive conditioning: as you add strokes, the model reconditions on new masks and stroke vectors and updates the latent state. Practically, that means small, immediate feedback loops that designers love.

  - Motion 2.0 uses sequence conditioning across time: a prompt (and optionally a source image) seeds a temporal latent that the model decodes into a sequence of frames, with frame interpolation smoothing transitions.

- Temporal vs static objectives

  - Realtime Canvas optimizes per-frame realism and local coherence (sharp details, plausible textures). Motion 2.0 optimizes temporal coherence (motion trajectories, camera easing), which requires explicit frame interpolation and motion priors.

- Sampling and latency

  - Realtime Canvas sacrifices some high-fidelity sampling steps in favor of low latency; it’s a “fast sampling” regime with fewer denoising iterations. Motion 2.0 often uses more sampling per frame (or special interpolation passes), so the runtime per clip is longer.

- Editability

  - Realtime Canvas supports direct inpainting and iterative editing. Motion 2.0 allows re-renders and parameter tweaks, but fine-grained frame-by-frame retouches are more limited; you often need to re-render or use an external editor to composite.

- Guidance & prompt design

  - Prompts for Realtime Canvas are often short structural cues augmented by sketching (shape: “clean commercial catalog silhouette”), while Motion 2.0 prompts are temporal directives (“5s cinematic dolly in; soft rim light; product reveal”) plus optional staging metadata (aspect ratio, camera yaw/pitch).
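To build intuition for what frame interpolation does, and why it can soften motion, here is the most naive version possible: a linear cross-fade between two keyframes. This is explicitly not how Motion 2.0 works (real interpolators estimate motion rather than blending pixels, and I don't know Leonardo's method); it is a toy stand-in on four-pixel "frames".

```python
def blend_frames(frame_a, frame_b, t):
    """Linear cross-fade between two frames (flat lists of pixel values)."""
    return [(1 - t) * a + t * b for a, b in zip(frame_a, frame_b)]


def interpolate(frame_a, frame_b, n_between):
    """Return n_between intermediate frames, evenly spaced in time."""
    return [blend_frames(frame_a, frame_b, i / (n_between + 1))
            for i in range(1, n_between + 1)]


# A bright dot jumps from pixel 0 to pixel 3 between two keyframes.
key_a = [1.0, 0.0, 0.0, 0.0]
key_b = [0.0, 0.0, 0.0, 1.0]

mid = interpolate(key_a, key_b, 1)[0]
print(mid)  # the dot is smeared over both positions instead of moving
```

Even in this toy case the in-between frame trades sharpness for smoothness, which is the same trade-off you are tuning, in a far more sophisticated form, when you adjust interpolation settings.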

Practical workflows I use

Below, I give three real workflows I ran while testing. These are hands-on sequences that produced repeatable results.

Workflow A — Rapid product ideation (Realtime Canvas → Motion 2.0)

- Sketch a clean silhouette in Realtime Canvas (fill shape with base color).

- Add a short prompt: “clean catalog product shot, white background, soft shadow, 3/4 angle.”

- Iterate until composition is good; up-scale/export PNG.

- Import PNG into Motion 2.0; prompt: “5s slow dolly in, smooth camera tilt to reveal product, soft rim lighting.”

- Generate 3 variants with different aspect ratios (1:1, 4:5, 9:16). Tweak interpolation/hardness if needed.

- Trim and color grade in an external editor.

Why this works: Realtime Canvas gives fast creative exploration; Motion 2.0 turns the best candidate into a short social clip. I used exactly this flow for a 6-asset client test, and it saved ~30–40% time compared with doing static renders then manually keyframing.

(Documentation for generating videos from images with Motion 2.0 shows this image → video pathway is supported by Leonardo’s guides.)
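When I run Workflow A for more than one image, it helps to write the render plan down before spending credits. The sketch below is plain bookkeeping, not an API client: `RenderJob`, `plan_jobs`, and the file name are hypothetical names of my own.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class RenderJob:
    """One planned video render (hypothetical structure, for planning only)."""
    source_image: str
    prompt: str
    aspect_ratio: str


def plan_jobs(images, prompt, ratios):
    """Pair every exported image with every target aspect ratio."""
    return [RenderJob(img, prompt, ratio) for img, ratio in product(images, ratios)]


jobs = plan_jobs(
    images=["speaker_front.png"],  # placeholder export from Realtime Canvas
    prompt="5s slow dolly in, smooth camera tilt to reveal product, soft rim lighting",
    ratios=["1:1", "4:5", "9:16"],
)
for job in jobs:
    print(job.source_image, job.aspect_ratio)
print(len(jobs), "renders planned")
```

The count at the end is the number you multiply by the per-video credit cost, so it doubles as a sanity check before a batch.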

Workflow B — Quick social promo (image-to-video only)

- Pick an existing product photo.

- Prompt Motion 2.0 directly: “3–5s vignette, parallax layers, gentle zoom out, cinematic LUT look.”

- Use frameInterpolation: true or the Motion 2.0 Fast model for quicker iterations (docs show flags for Motion 2.0 Fast and resolution settings).
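For scripted preview passes, I keep the request settings in one place. The sketch below only assembles and prints a settings dictionary; it makes no network call. Apart from `frameInterpolation` and `RESOLUTION_720`, which are the names mentioned in this article, every key and model label here is a placeholder I made up, so check the official API reference for the real schema before using anything like it.

```python
import json


def build_preview_settings(prompt, image_ref, fast=True):
    """Assemble illustrative settings for an image-to-video preview.
    Key names are assumptions unless noted otherwise."""
    return {
        "prompt": prompt,                    # assumed key name
        "sourceImage": image_ref,            # assumed key name
        "frameInterpolation": True,          # flag name as quoted in the article
        "resolution": "RESOLUTION_720",      # value as quoted; key name assumed
        "variant": "fast" if fast else "standard",  # placeholder labels
    }


settings = build_preview_settings(
    prompt="3-5s vignette, parallax layers, gentle zoom out, cinematic LUT look",
    image_ref="product_photo_01",  # placeholder reference
)
print(json.dumps(settings, indent=2))
```

Keeping the fast/standard switch as a single argument makes it easy to rerun the approved preview at higher fidelity without retyping the prompt.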

Workflow C — Storyboarding & shot planning

- Use Realtime Canvas to sketch 6 different thumbnail frames representing beats of a 30-second ad.

- Export the top 2 sketches and animate each as 5s clips in Motion 2.0.

- Stitch clips in an NLE, and add voiceover and music.

Real observations from my tests

- I noticed that Realtime Canvas is embarrassingly fast for first-look approvals. I could sketch five different product angles in the time it takes to boot a conventional 3D render.

- In real use, Motion 2.0 sometimes over-smooths motion if you push frame interpolation too hard; it prefers coherent, simple motion (dolly, tilt, parallax) over complex, chaotic action.

- One thing that surprised me: combining an inpainted Realtime Canvas export with Motion 2.0 often produced better framing than starting in Motion 2.0 from a text prompt alone. The deterministic composition of a curated image helps the video model focus on motion generation.

Pricing & EU billing notes

Leonardo’s public pricing pages list tiered plans, token allowances, and special API credit structures. Motion 2.0 generation is typically billed differently (credits per video) versus image tokens for Realtime Canvas. If you’re in the EU, factor VAT into enterprise invoices and confirm API billing currency and tax handling through your Leonardo account before large runs. There are community breakdowns and third-party write-ups that show API credit starting tiers and conversion examples, but rates change — check the official pricing page before budget sign-off.
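Before a budget sign-off, I run a back-of-the-envelope estimate like the one below. Every number in it (credits per image, credits per clip, price per credit, VAT rate) is a placeholder I invented for the arithmetic, not Leonardo's pricing; substitute the current figures from the official pricing page and your country's VAT rate.

```python
def estimate_budget(n_images, n_clips, credits_per_image, credits_per_clip,
                    price_per_credit, vat_rate):
    """Return (total credits, net cost, gross cost including VAT)."""
    credits = n_images * credits_per_image + n_clips * credits_per_clip
    net = credits * price_per_credit
    gross = net * (1 + vat_rate)
    return credits, round(net, 2), round(gross, 2)


# Placeholder inputs: 10 images, 3 clips, invented rates, 20% VAT.
credits, net_eur, gross_eur = estimate_budget(
    n_images=10, n_clips=3,
    credits_per_image=5, credits_per_clip=200,
    price_per_credit=0.002, vat_rate=0.20,
)
print(credits, net_eur, gross_eur)
```

Even with made-up rates, the shape of the result is useful: a handful of video renders can outweigh a much larger number of images.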

Legal, compliance, and IP: what I tell clients

- Read the platform TOS: commercial use rights, model licenses, and attribution rules are in the official docs. Leonardo provides help articles and Intercom-based support chat; don’t skip them.

- For EU clients: ensure you document provenance (prompts, seeds, source images) and store client-approved prompt + output snapshots. This helps in case of later IP disputes or audit requests.

- For sensitive brands: avoid using client logo assets without an NDA during early experiments; always move to a private/team plan or API that includes guaranteed data privacy options.
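The provenance habit is easy to automate. Here is a minimal sketch of the kind of record I mean, using only the Python standard library; the field names and the example values are my own choices, not a legal standard or anything required by the platform.

```python
import hashlib
import json
from datetime import datetime, timezone


def provenance_record(prompt, seed, source_bytes, output_bytes, approved_by):
    """Bundle what was asked, what went in, and what came out.
    Hashes let you prove later that a file is the one that was approved."""
    return {
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "seed": seed,
        "source_sha256": hashlib.sha256(source_bytes).hexdigest(),
        "output_sha256": hashlib.sha256(output_bytes).hexdigest(),
        "approved_by": approved_by,
    }


record = provenance_record(
    prompt="clean catalog product shot, white background, soft shadow, 3/4 angle",
    seed=1234,                              # placeholder seed
    source_bytes=b"source image bytes",     # in practice: the file's contents
    output_bytes=b"generated image bytes",  # in practice: the file's contents
    approved_by="client@example.com",       # placeholder contact
)
print(json.dumps(record, indent=2))
```

Storing one such JSON file next to each approved asset is usually enough to answer "where did this come from?" months later.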

Prompt recipes that worked

Realtime Canvas — clean catalog product

Sketch + Prompt:

“product silhouette: clean commercial catalog, soft shadow, neutral studio background, photoreal, 50mm lens perspective, cool color palette.”

Technique: sketch outlines, fill base color for object, let Canvas refine, then inpaint for labels.

Motion 2.0 — product reveal (image → 5s video)

Image + Prompt:

“5s cinematic product reveal, slow dolly in (0–30%), smooth camera tilt (−5° to +5°), soft rim light, shallow depth, slight parallax, final frame hold 0.5s”

Settings: resolution 720p or 480p for faster runs; try Motion 2.0 Fast for quick previews.

(Leonardo docs describe RESOLUTION_720 and model flags for Motion 2.0 Fast.)

One honest limitation

Motion 2.0 is spectacular for short, controlled motion — but it’s not a substitute for a timeline-based editor when you need precise per-frame control, multi-layer compositing, or long-form storytelling. If your work relies on frame-accurate VFX or bespoke character rigs, Motion 2.0 will be a time-saver for concept clips and promos — not the whole production pipeline.

Who this is best for — and who should probably avoid it

Best for:

- Freelance designers and small agencies that need fast social content (thumbnails → short clips).

- Marketers who want quick social promos without hiring a full editor.

- Product teams that need concept imagery and short motion teasers for pitch decks.

- Developers prototyping visual UIs and animation concepts via API.

Should avoid/be cautious:

- High-end film teams needing timeline editing and motion-picture-grade VFX.

- Projects that require full legal certainty without checking the platform TOS (especially with copyrighted input material).

- Large batch production without a clear budget model — video credits add up.

Practical mistakes to avoid

- Don’t ignore aspect ratio until after rendering video — you may lose important framing.

- Avoid expecting long films from Motion 2.0; design short beats and stitch externally.

- Don’t push interpolation to the extreme if you want crisp, staccato motion — it smooths aggressively.

- If you want consistent product color across images and clips, export color-calibrated masters from Realtime Canvas and use those in Motion 2.0.

Sample case study (mini): e-commerce catalog + social campaign

Client: small D2C company launching a new speaker.

Goal: 10 product images, 3 short social clips (5s each), 1 hero motion for homepage.

What I did:

- Realtime Canvas — sketched 12 concepts, iterated to 10 final images with different backgrounds and lighting. Quick approvals from the client.

- Motion 2.0 — took the 3 best images and generated three 5s clips (product reveal, three-quarter rotate, parallax close-up).

- Final stitching in Premiere for hero montage and consistent color grade.

Result: campaign delivered in 4 days vs estimated 9–10 days if we’d done full studio photography + manual keyframing. Budget-wise, credits for Motion 2.0 were material but still cheaper than renting studio time and assistants.

FAQs

A1: Realtime Canvas creates static images in near-real time from sketches and on-canvas interaction; Motion 2.0 generates short animated videos with temporal consistency and camera moves.

A2: Yes — export the PNG/JPEG from Realtime Canvas and import it to Motion 2.0 as a start frame or reference. The docs include recipes for image → video workflows.

A3: Generally, static images from Realtime Canvas cost fewer tokens/credits per output. Motion 2.0 incurs video credits per render and is therefore more expensive at scale. Check current pricing before large batches.

A4: Paid plans and API access unlock higher resolution, faster queues, and more generous monthly allowances. Many production runs will benefit from paid tiers or API credits.

A5: Yes — always confirm license terms and check EU rules for content and data usage. Keep prompt provenance and client approvals on file.

Short pro tips

- Fill areas with flat color before refining in Realtime Canvas — it helps the model read your intent.

- Use Motion 2.0 Fast for preview passes; then re-run with standard Motion 2.0 for higher fidelity.

- Keep prompts for motion concise: describe movement in verbs and style in adjectives (e.g., “slow dolly in; cinematic; soft rim light”).
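If you generate prompts in bulk, the "verbs for movement, adjectives for style" rule fits in a tiny helper. `motion_prompt` is a hypothetical function of mine; the semicolon separator simply mirrors the example above and is not a syntax the model requires, as far as I know.

```python
def motion_prompt(moves, styles):
    """Join movement phrases first, then style phrases, separated by '; '."""
    parts = [p.strip() for p in list(moves) + list(styles) if p.strip()]
    return "; ".join(parts)


prompt = motion_prompt(
    moves=["slow dolly in"],
    styles=["cinematic", "soft rim light"],
)
print(prompt)  # slow dolly in; cinematic; soft rim light
```

Keeping movement and style in separate lists also makes A/B tests cleaner: you can vary one list while holding the other fixed.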

Real Experience/Takeaway

I used both tools across multiple client projects. For initial concepting, Realtime Canvas is a genuine time saver — the instant feedback loop accelerates creativity and approvals. Motion 2.0 turns those concepts into attention-grabbing short clips, but you’ll want an editor to finalize deliverables for complex campaigns. My practical takeaway: use Realtime Canvas to rapidly narrow options, then spend video credits on Motion 2.0 only for the finalists — this hybrid workflow gave me the best balance of speed, cost, and polish.

One limitation

In long production pipelines that require nuanced per-frame VFX and sophisticated multi-layer compositing, neither tool replaces a dedicated VFX pipeline. They excel at ideation, short social clips, and product motion teasers — not feature films.

Final Verdict — Which Tool Should You Use in 2026?

- If you need fast prototyping and static deliverables → Realtime Canvas.

- If you need short, polished animated clips for social or promos → Motion 2.0.

- If you need both → sketch with Realtime Canvas, animate the winners in Motion 2.0.

This combo saved me time and prevented unnecessary video credit spend when used judiciously.