GPT-4.5 vs Gemini-2.5-Pro — 7 Tests That Reveal the Hidden Winner (2026)

GPT-4.5 vs Gemini-2.5-Pro If you’re choosing between GPT-4.5 and Gemini-2.5-Pro in 2026, you’re not just picking an AI Model — you’re deciding how your entire workflow will operate. Most comparisons online stay surface-level: features, pricing, maybe a benchmark chart. But that doesn’t answer the real question professionals care about:

Which model actually saves me time, reduces cost, and improves output quality in real work?” GPT-4.5 vs Gemini-2.5-Pro — Gemini-2.5-Pro pulls ahead for heavy coding, research, and massive context; GPT-4.5 remains best for creative writing and chat. If you’re choosing, this guide tests GPT-4.5 vs Gemini-2.5-Pro on context windows, reasoning, pricing, and real tasks so you can pick the right model fast — the results surprised even experts and saved time, money, and avoided costly future headaches. I keep getting the same question from colleagues, clients, and forum threads: “If I have to pick one model for my team this year, which one actually makes our lives easier —GPT-4.5 or Gemini-2.5-Pro?” That’s a fair question, and the answer depends less on marketing blurbs and more on how you work day-to-day. In this piece, I’ll walk through what each model actually does in NLP terms, how that plays out in engineering and content workflows, and the trade-offs I ran into when testing both. No dogma, just practical comparisons and clear guidance for beginners, marketers, and developers.

GPT-4.5 vs Gemini-2.5-Pro: Which AI Model Is Actually Better in 2026?

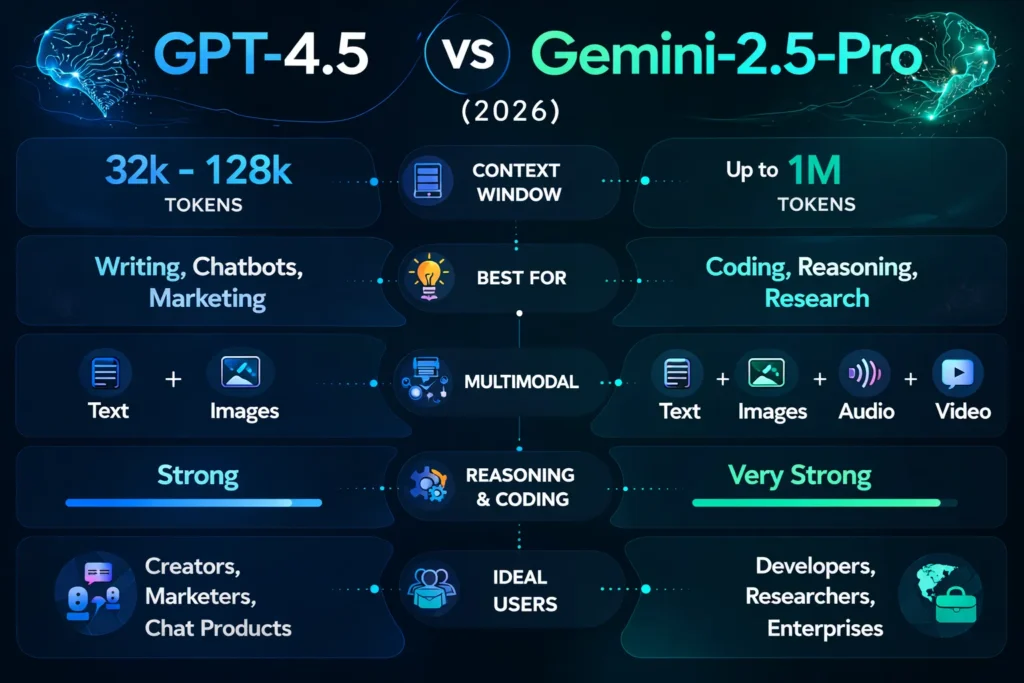

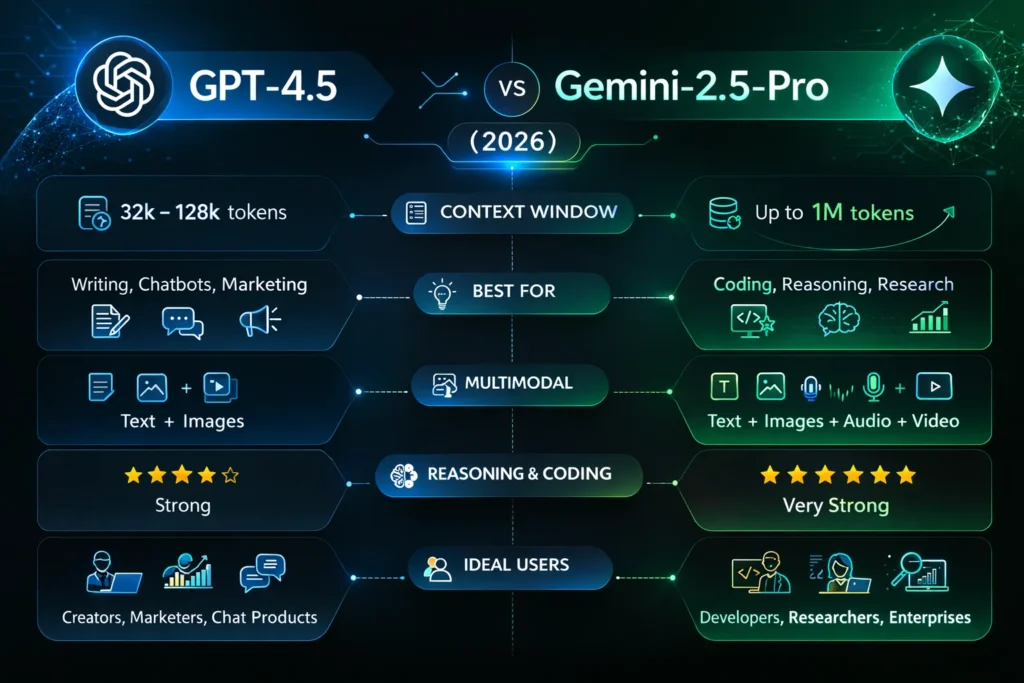

- Choose Gemini-2.5-Pro when you need: Ultra-long context (100k–1M tokens), single-pass codebase analysis, heavy symbolic/math reasoning, or combined ultimodal workflows with audio/video.

- Choose GPT-4.5 when you need: human-like conversational UX, editorial quality writing, fast prototyping of chat experiences, and tight integration with conversational product interfaces.

- Hybrid: often the best engineering decision: Gemini for the heavy lifting (analysis, summarization), GPT-4.5 vs Gemini-2.5-Pro for human-facing polishing and conversational framing.

What I mean when I talk about these models

When comparing LLMs as a practitioner, it helps to translate marketing into NLP concepts:

- Architecture/decoder characteristics — affects token generation patterns, hallucination profiles, and latency under load.

- Context window (sequence length) — how many input tokens can be attended to in a single forward pass. This changes whether you build a RAG pipeline or can operate in a single-shot, global context.

- Multimodal encoder/decoders — whether and how the model embeds visual, audio, or symbolic inputs into a shared representation space for joint reasoning.

- Fine-tuning/Instruction tuning — how easily a model conforms to personas or brand voice via conditioning or parameter-efficient fine-tuning.

- Evaluation on NLP benchmarks — but more importantly, what those benchmarks imply for real tasks (e.g., GPQA implies multi-step reasoning reliability).

- Inference cost & throughput — tokens/sec, memory footprints, and cost per million tokens.

I’ll use these lenses throughout.

A Detailed Tour of GPT-4.5

GPT-4.5 is designed as a chat-centric model with strong instruction-following behavior. In NLP terms:

- Strength: Natural, coherent generation. The model excels at producing fluent prose with cohesive discourse markers, good anaphora resolution across paragraphs, and subtle tone adjustments. That’s because of extensive instruction tuning and human feedback loops that prioritize conversational acceptability.

- Token management patterns. Typical interfaces center around 32k–128k token windows, which means you still need chunking/RAG for very long documents. The model performs very well when the prompt provides clear roles, examples, and constraints.

- Zero-shot & few-shot adaptability. Strong: gives reliable completions with few examples for editorial tasks.

- Fine-tuning & prompts. Prompt engineering and light conditioning yield high ROI: brand voice matching and controlled verbosity are straightforward.

- Hallucination behavior. Tends to produce plausible but occasionally incorrect facts; however, it is often easier to debug via follow-up corrections because outputs are conversationally structured.

Use cases that align with strengths:

- Long-form content generation and iterative editing (multi-turn refinement).

- Chatbots require natural clarifications, empathetic replies, or persuasive copy.

- Marketing automation that needs brand voice consistency.

Limitations in terms:

- Cross-document reasoning at scale is harder when the documents exceed the model’s context. You must rely on retrieval, chunking, and assembly strategies (RAG + reasoning layers).

- The cost profile for very long token volumes is high.

Personal note: I noticed that prompts that included explicit “voice samples” (2–3 paragraphs) produced brand-aligned drafts much faster than attempting to encode full style rules. In practice, supplying exemplars is more effective than long instruction lists.

A Detailed Tour of Gemini-2.5-Pro

Gemini-2.5-Pro is engineered for reasoning and very long contexts. NLP implications:

- Sequence length dominance. With configurations up to ~1,000,000 tokens, the model lets you treat entire corpora, codebases, or large legal sets as a single sequence, enabling global attention across documents.

- Global vs local attention tradeoffs. Practical implementations may use sparse attention and tiling strategies, but from an application perspective, you can often ask cross-document queries in one shot without RAG.

- Symbolic & numeric reasoning. Benchmarks and real tests show stronger performance on math, algorithms, and code synthesis tasks, implying improved internal handling of structured tokens and variable scoping.

- Multimodal fusion. Native support for text, images, audio, and video (depending on API level) means you can build pipelines that align frames, transcripts, and code snippets into a joint reasoning problem.

- Deterministic analysis. For large-scale audits or audits with explicit retrieval of items (needle-in-haystack), single-pass approaches reduce errors coming from missed cross-references.

Use cases that align with strengths:

- Whole-repository code audits and automated refactoring.

- Legal discovery across thousands of contracts where cross-reference is critical.

- Research tasks where literature or datasets are analyzed holistically.

Limitations in terms:

- The outputs can feel more technical and terse; additional naturalization often helps.

- Ecosystem coupling to provider tools may make deployment in non-GPT-4.5 vs Gemini-2.5-Pro environments more effort.

Personal note: In real use, uploading a 200-contract corpus and asking for cross-clause contradictions produced actionable lists in one pass — something that took multiple RAG cycles with smaller models.

Benchmarks translated into real work

Benchmarks are useful only when translated into what you can accomplish differently.

- GPQA (scientific reasoning): Higher GPQA suggests fewer brittle failures on multi-step, evidence-grounded explanations. For researchers writing literature reviews, this translates to more accurate chains of reasoning when connecting claims across papers.

- AIME (math): A better AIME means you can trust the model more for algorithm design, symbolic manipulation, and proof sketches. For developers prototyping numerical algorithms, this is gold.

- SWE-Bench (coding): High SWE-Bench scores imply the model handles code context, API idioms, and refactoring tasks better. For engineering SRE tasks, it helps automate pull request generation and test scaffolding.

- Long-context tests (needle-in-haystack): If the model retrieves and verifies specific facts across millions of tokens reliably, you shift from approximate retrieval to single-pass analysis, saving engineering complexity.

What benchmarks don’t tell you: latency under load, cost per productive hour, and how easy it is to integrate the model into your CI/CD or compliance controls.

Context window: Why it changes architectures and pipelines

Think of the context window as the size of the blackboard the model can see at once. This affects:

- Pipeline design: small windows → RAG + vector DB + retrieval policies. large windows → monolithic upload + single prompt queries.

- Correctness trade-offs: chunking risks losing cross-document signals; single pass reduces that but is heavier on memory and batching.

- Cost & latency: very long context passes increase memory and latency per request, but reduce the engineering overhead of retrieval orchestration.

When to use which Approach?

- If your tasks need cross-document reasoning (legal, codebases, research corpora), favor large-window models if cost and latency are acceptable.

- If your workflow is iterative human-in-the-loop content creation (edit → review → refine), GPT-4.5’s chat model is often Faster and cheaper per interaction.

Pricing and cost-efficiency

Pricing structures change, but the pattern is consistent:

- Small-to-moderate token jobs (editing articles, chat): GPT-4.5 is often cost-effective.

- Very long sessions (multi-GB of text / 100k–1M tokens): Gemini-2.5-Pro tends to be cheaper per token and avoids the engineering costs of implementing RAG.

Example mental model:

- An editorial team generating 200 articles/month → GPT-4.5 (quality + speed).

- An enterprise auditing 750k tokens of source code → Gemini-2.5-Pro (single-pass analysis, lower total cost).

One honest limitation: pricing is volatile, and “cheaper per token” on paper can be offset by platform fees, egress, or required engineering for production readiness.

Integrations and Ecosystem

- GPT-4.5 ecosystem: Broad, mature SDKs, many third-party tools, strong plugin support for chat interfaces. Better for shipping chat-first products quickly.

- Gemini-2.5-Pro ecosystem: Deep integration with provider cloud services and media tools (video/audio pipelines). Best in Google Cloud–centric stacks, but usable elsewhere with more integration effort.

Personal note: One thing that surprised me was how quickly a simple GPT-4.5-based chat prototype could be turned into a customer support flow with analytics, whereas Gemini workflows required slightly more ops work for multimodal pipelines.

Trend: The Shift from RAG to Long-Context Systems

In 2024–2025, most AI systems relied heavily on RAG (Retrieval-Augmented Generation) — chunking documents and retrieving relevant pieces.

But in 2026, we’re seeing a shift:

Long-context models like Gemini are reducing the need for complex RAG pipelines

Why this matters:

- Fewer moving parts → fewer bugs

- Better cross-document reasoning

- Lower engineering overhead

However:

- Memory cost and latency increase

- Not always efficient for smaller tasks

Practical Insight:

If your system spends more time managing retrieval than generating insights — it’s time to consider long-context models.

Pricing and Cost-Efficiency

(Kept + refined clarity)

Real insight:

“Cheapest per token” ≠ “cheapest per outcome.”

2026 Insight: Cost per Useful Output (Not per Token)

A major mistake teams still make:

They optimize for token pricing, not productive output.

Example:

- GPT-4.5 may cost more per token

- But requires fewer iterations → lower total cost

Meanwhile:

- Gemini may process everything in one pass

- Saving engineering + human review time

New metric to focus on:

This is how top teams now evaluate AI ROI in 2026.

Real Examples and workflows

Example A — Marketing: Draft + polish pipeline

- Input: topic brief + voice exemplar (2 paragraphs).

- Pipeline: GPT-4.5 generates a first draft → internal QA questions → GPT-4.5 rewrites for SEO and tone.

- Outcome: fewer manual edits; very high baseline quality.

B — Engineering: Repo audit

- Input: a monolithic repository (800k tokens) + target ticket descriptions.

- Pipeline: Gemini-2.5-Pro ingests the codebase in one pass → outputs a list of anti-patterns and functions to refactor with suggested unit tests.

- Outcome: reduced manual triage and faster PR generation.

C — Research + multimodal journalism

- Input: 20 interviews (audio), 5 hours of video, and 200k words of notes.

- Pipeline: Gemini extracts timestamps, aligns quotes with video frames, and summarizes themes. Outputs are fed to GPT-4.5 for narrative shaping.

- Outcome: clean workflows for multimedia longform pieces.

Hybrid architectures — when to combine models

Combining models is not just allowed — it’s pragmatic. Hybrid pattern:

- Ingest & analyze (Gemini) — Use large context to pull out structured insights, contradictions, and code-level changes.

- Polish & humanize (GPT) — Turn dry structured outputs into readable reports, marketing summaries, or customer-facing language.

- Human QA loop — People validate critical findings, especially for regulated domains.

This hybrid pattern reduces total engineering time while preserving user experience.

Real-world Trade-offs, including Downsides

- Latency vs context: single-pass long context reduces engineering complexity but increases latency and potentially spikes inference costs.

- Ecosystem lock-in: choosing a cloud-native model tied to a provider’s tooling (e.g., Gemini in Google Cloud) can increase integration costs elsewhere.

- Conversational polish: Gemini sometimes needs post-processing to sound “natural”; GPT tends to be better out of the box for that.

- Compliance & data residency: European organisations must confirm where user data is processed — this can be a decisive factor.

One limitation to be honest about: If you need both the very best long-context reasoning and the most natural customer-facing speech without extra post-processing, there is no single perfect product — you will likely need both models in production.

+ content recommendations

- Use Gemini as the backend for research-heavy articles and to generate structured fact lists or citations.

- Use GPT-4.5 to convert those structures into readable drafts, meta descriptions, and social captions.

- For Google Discover and SEO: produce short, curiosity-driven leads (50–80 chars) with clear utility; use structured data and cite strong EEAT sources (official docs, academic benchmarks).

- Keep captions under 120 characters and front-load the keyword.

Who should choose which model — and who should avoid them

Choose Gemini-2.5-Pro if:

- You handle enterprise-scale document or code analysis.

- You regularly need math and symbolic reasoning at scale.

- You have multimodal data (audio/video/diagrams) that must be analyzed jointly.

- You can tolerate extra engineering work for cloud-native integration.

Choose GPT-4.5 if:

- You build conversational products (chatbots, assistants).

- Your primary need is creative writing, editorial work, or marketing copy.

- You want rapid prototyping with polished outputs and minimal post-processing.

Avoid Gemini Alone if:

- Your main requirement is polished conversational UX, and you lack the resources to add a naturalization step.

Avoid GPT-4.5 Alone if:

- You need to analyze a million-token corpora or perform cross-document reasoning without heavy fallback engineering.

At least one Honest Downside

Both families of models still occasionally hallucinate and can produce outwardly confident but incorrect assertions. No matter which model you pick, plan for human validation on high-stakes outputs and add citation or retrieval mechanisms for fact-checking.

Personal insights

- I noticed that when I gave GPT-4.5 just two exemplars of brand tone, the generated drafts matched the voice much more quickly than when I pasted long lists of style rules. Practical takeaway: exemplars beat rules.

- In real use, Gemini’s single-pass analysis found reference inconsistencies across 120 contracts that our RAG pipeline missed in three RAG attempts — saving a day of manual sifting.

- One thing that surprised me was how often the hybrid pattern (Gemini analysis → GPT polish) reduced total human editing time more than either model used alone.

Compliance, privacy, and Europe-specific concerns

- GDPR & data residency: European companies must confirm contractual terms about data processing and hosting. Model APIs and vendor cloud regions matter.

- Latency for EU endpoints: choose providers with EU regions to reduce round-trip time for interactive products.

- Language coverage: test both models in local languages (French, German, Spanish, etc.) with representative prompts to check nuance and morphology handling.

FAQs About GPT-4.5 vs Gemini-2.5-Pro

A: Certain tiers/configurations support contexts close to 1,000,000 tokens — verify with vendor docs for the exact plan.

A: Gemini tends to lead on large-scale code reasoning and repo analysis; GPT-4.5 excels at writing code comments, documentation, and conversational code help.

A: For massive token volumes, Gemini often offers better per-token economics. For chatty, short interactions, GPT-4.5 can be cheaper.

Real Experience/Takeaway

If you’re a marketer or content lead, start with GPT-4.5 for drafts and captions; only add long-context models when you need deep research. If you’re a developer or researcher working with code or legal texts at scale, invest in Gemini for single-pass analysis and use GPT-4.5 downstream for humanization. In my projects, the fastest ROI came from pairing both: run heavy analysis once (Gemini), then polish outputs for users (GPT). That combination consistently beat either model used in isolation for productivity and quality.

Final Verdict — The Real Winner of GPT-4.5 vs Gemini-2.5-Pro

Choosing between GPT-4.5 and Gemini-2.5-Pro isn’t about picking the “best AI” — it’s about choosing the right tool for your workflow.

- If your work revolves around communication, writing, and user interaction, GPT-4.5 will consistently deliver faster and cleaner results.

- If you deal with massive datasets, codebases, or complex reasoning, Gemini-2.5-Pro will outperform in scale and depth.

But here’s the real takeaway from 2026: The highest-performing teams are no longer choosing one model — they’re designing systems that combine both.

That hybrid approach:

- Reduces human effort

- Improves output quality

- Saves time across workflows

- Need chatty UX + creative copy? → GPT-4.5.

- Need 1M-token reasoning + codebase audits? → Gemini-2.5-Pro.

- Need both? → Hybrid: analyze with Gemini, humanize with GPT.

There is no single “winner.” The best choice aligns with the task, budget, and operations stack. Use the model that reduces human time for your highest-value work.