Introduction

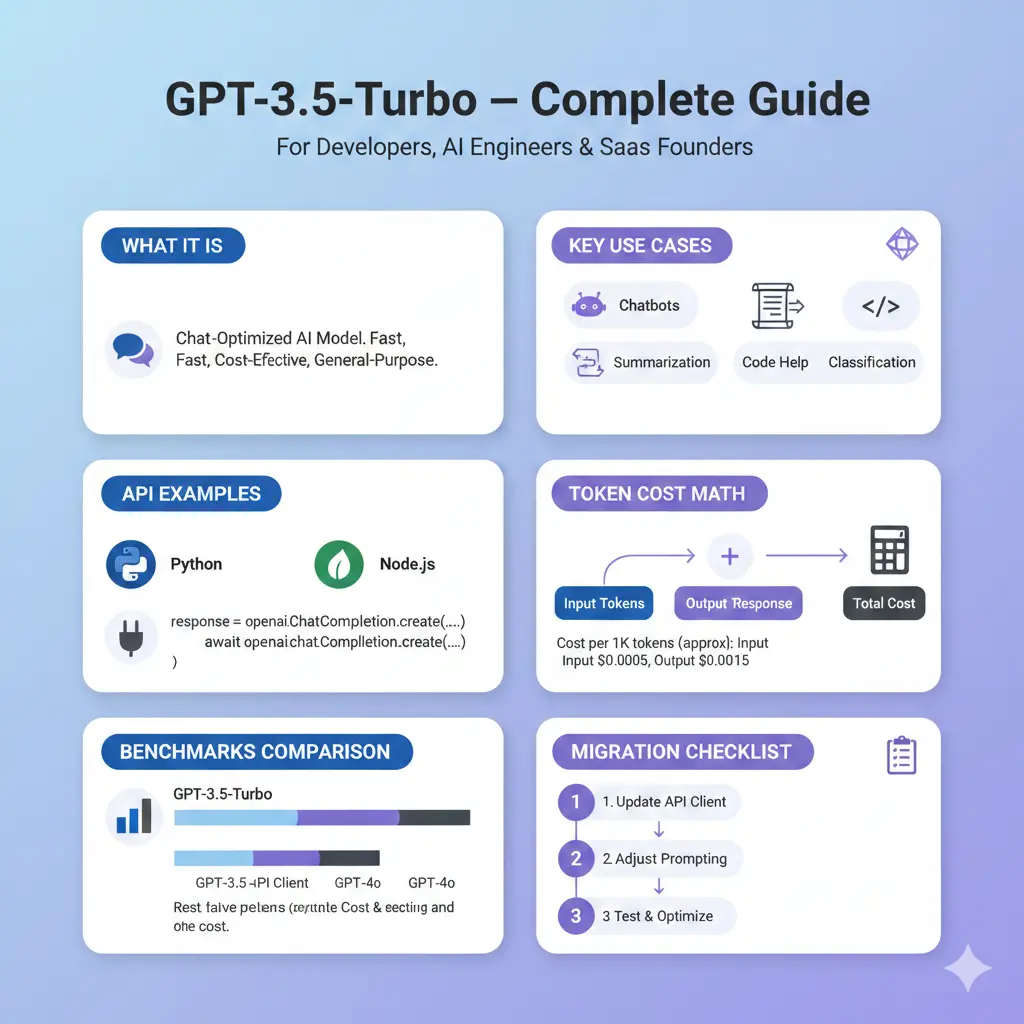

From an engineering perspective, GPT-3.5-Turbo is a chat-optimized transformer family that maps sequences of input tokens to distributions over next tokens using a large pre-trained autoregressive model. It was widely adopted because it balances capability with latency and cost, making it a practical choice for short-to-medium-length conversational tasks, classification, and concise generation. Its predictable behavior and well-established prompt recipes make it a strong baseline for production systems where latency, throughput, and consistent outputs matter. Despite the rapid release of newer model families, GPT-3.5-Turbo remains available and useful for many engineering scenarios, especially where migration costs are high. Always confirm the exact variant and pricing available to your account before making a final decision.

What GPT-3.5-Turbo Is

- Architecture family: Autoregressive transformer, chat-optimized interface (system + user + assistant roles).

- Main strengths: Fast inference for short-to-moderate inputs, reproducible behavior with tuned prompts, strong instruction following, and simple code generation.

- Limitations: Smaller context window than modern large-context models; weaker multi-hop reasoning and multimodal support than newer models that natively handle vision/audio.

- Common uses: Front-line chatbots, classifier/regression pipelines, summarization microservices, lightweight code-patching assistants, and routing/triage layers.

Engineering Checklist for Using the GPT-3.5-Turbo API:

- Timeout and retry policies (use jittered exponential backoff).

- Input sanitization (remove PII unless necessary and properly consented).

- Output schema validation (use JSON schema / strict parsing).

- Token logging for cost attribution (log counts but not PII).

- Rate-limiting and concurrency controls to manage quotas and avoid surges.
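The retry bullet can be sketched as a small wrapper that uses "full jitter": the delay cap doubles on each attempt, and the actual wait is drawn uniformly between zero and that cap so many clients do not retry in lockstep. This is a minimal sketch, not part of any OpenAI SDK; `TransientError`, `call_with_backoff`, and the default limits are hypothetical, and in a real client you would raise (or map to) the retryable exception only for HTTP 429 and 5xx responses.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical stand-in for retryable failures (e.g. HTTP 429 or 5xx)."""


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying TransientError with full-jitter exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # The cap doubles each attempt (bounded by max_delay); the actual
            # delay is drawn uniformly from [0, cap] to spread out retries.
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable")
```

Injecting `sleep` keeps the helper testable without real waiting, for example `call_with_backoff(fn, sleep=lambda s: None)` in unit tests.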

Practical Tips to Reduce Token Spend:

- Use retrieval-augmented generation (RAG) with chunking & embeddings so you only send relevant context.

- Compress long contexts with extractive summarizers before sending.

- Use deterministic truncation strategies with clear fallback prompts.

- Reduce temperature and enforce strict schemas to avoid verbose outputs.

- Use streaming and smaller max_tokens if incremental output is acceptable.
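A deterministic truncation strategy can be as simple as ranking context chunks by priority, keeping whole chunks greedily until a token budget is used up, and returning a fixed fallback notice when nothing fits. This minimal sketch approximates token counts with the rough "about four characters per token" rule of thumb for English text; for real budgets, count with the actual tokenizer (for example OpenAI's `tiktoken` library). `approx_tokens` and `fit_to_budget` are hypothetical names.

```python
import math
from typing import List, Tuple


def approx_tokens(text: str) -> int:
    """Rough token estimate (~4 chars/token for English); swap in a real tokenizer."""
    return math.ceil(len(text) / 4)


def fit_to_budget(
    chunks: List[Tuple[int, str]],  # (priority, text); lower number = more important
    budget: int,
    fallback: str = "[context omitted: over token budget]",
) -> str:
    """Keep whole chunks in priority order, skipping any that would exceed the budget."""
    kept: List[Tuple[int, int, str]] = []
    used = 0
    # Sort by priority, then original position, so ties resolve deterministically.
    for pos, (prio, text) in sorted(enumerate(chunks), key=lambda p: (p[1][0], p[0])):
        cost = approx_tokens(text)
        if used + cost <= budget:
            kept.append((pos, prio, text))
            used += cost
    if not kept:
        return fallback
    kept.sort()  # re-emit kept chunks in their original document order
    return "\n\n".join(text for _, _, text in kept)
```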

GPT-3.5-Turbo Limitations & Failure Modes

Common pitfalls:

- Hallucinations (invented facts). Mitigation: RAG, response validation, and external verification.

- Context window overflow. Mitigation: chunking + prioritized retrieval; use models with larger context windows for very long docs.

- Output format drift. Mitigation: JSON schema enforcement, automatic validators.

- Tokenization surprises. Mitigation: measure tokens using the tokenizer library for representative prompts.

- Latency spikes. Mitigation: batching, caching frequently-requested completions, warmup calls for cold-start models.
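For the caching mitigation, a minimal sketch is an in-process LRU cache keyed on a hash of the model name, sampling parameters, and prompt. `CompletionCache` is a hypothetical helper; caching is only appropriate when identical requests should receive identical answers (typically temperature 0 and no per-user context), and a multi-instance service would need a shared store instead.

```python
import hashlib
import json
from collections import OrderedDict
from typing import Callable


class CompletionCache:
    """Minimal in-process LRU cache for completions (hypothetical helper)."""

    def __init__(self, max_entries: int = 1024) -> None:
        self.max_entries = max_entries
        self._store: "OrderedDict[str, str]" = OrderedDict()

    @staticmethod
    def key(model: str, prompt: str, **params: object) -> str:
        """Hash model + prompt + sampling params; sorted keys make equal requests hash equally."""
        payload = json.dumps(
            {"model": model, "prompt": prompt, "params": params}, sort_keys=True
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_compute(self, key: str, compute: Callable[[], str]) -> str:
        if key in self._store:
            self._store.move_to_end(key)  # mark as most recently used
            return self._store[key]
        value = compute()  # cache miss: call the model
        self._store[key] = value
        if len(self._store) > self.max_entries:
            self._store.popitem(last=False)  # evict the least recently used entry
        return value
```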

Diagnostics to run when outputs are wrong:

- Compare the same prompt across temperatures (0.0, 0.2, 0.5).

- Re-run with stronger system prompt (“You MUST only output …”).

- Test with few-shot examples vs zero-shot to measure sensitivity.

- Tokenize prompt+output and inspect for inadvertent trimming or tokenization inefficiencies.
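The temperature comparison can be automated with a small harness that runs one prompt several times per temperature and tallies the distinct outputs; many distinct answers even at low temperature often point to an underspecified prompt. The `complete` callable is a hypothetical stand-in for your API client (for example, a thin wrapper around a chat completions call), injected so the harness can be exercised offline.

```python
from collections import Counter
from typing import Callable, Dict, Sequence

# Hypothetical signature: complete(prompt, temperature) -> output text.
CompleteFn = Callable[[str, float], str]


def temperature_sweep(
    complete: CompleteFn,
    prompt: str,
    temperatures: Sequence[float] = (0.0, 0.2, 0.5),
    runs: int = 5,
) -> Dict[float, Counter]:
    """Run the same prompt `runs` times per temperature and tally distinct outputs."""
    return {
        t: Counter(complete(prompt, t).strip() for _ in range(runs))
        for t in temperatures
    }


def report(results: Dict[float, Counter]) -> None:
    for t, counts in results.items():
        if not counts:
            continue  # runs == 0: nothing to report
        top, freq = counts.most_common(1)[0]
        total = sum(counts.values())
        print(f"T={t}: {len(counts)} distinct output(s); top answer {freq}/{total}: {top!r}")
```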

Benchmarks & Decision Matrix

When to keep GPT-3.5-Turbo

- Low switching cost and high stability in production.

- Use cases with short context and deterministic instruction-following needs.

- Existing prompt investments or pinned snapshots.

When to evaluate/move to GPT-4o mini / GPT-4 / GPT-5

- Need for improved reasoning, larger context windows, and multimodality.

- When the newer family reduces the total cost-per-task after re-tokenizing flows.

- When improved accuracy in downstream metrics (e.g., fewer hallucinations) justifies migration costs. Note: OpenAI announced GPT-4o mini as cheaper in many workloads; use the latest pricing page to compare.

Simplified Decision Matrix

| Requirement | Keep GPT-3.5-Turbo | Try GPT-4o mini / GPT-4 / GPT-5 |
| --- | --- | --- |
| Low-cost simple chat | ✅ if already tuned | ✅ often cheaper now (verify) |
| Complex multi-hop reasoning | ❌ | ✅ |
| Very long docs (100k+ tokens) | ❌ | ✅ |
| Multimodal (image/audio) | ❌ | ✅ |

Benchmark Methodology

- Select a representative dataset (real user prompts + edge cases).

- For each model candidate, run the exact prompts and record: tokens (in/out), latency p50/p95, accuracy (task-specific metric), hallucination rate (human or automated), & cost.

- Re-tokenize flows to estimate the final cost per call.

- Run statistical significance tests on quality metrics.

- Run a short A/B test in production (1–5% of traffic), monitoring retention, error rates, and support ticket volume.
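The latency and significance steps can be sketched with the standard library alone. This minimal sketch computes nearest-rank percentiles (p50/p95) and, because both models answer the same prompts, uses an exact McNemar test on paired pass/fail results; that is one reasonable choice among several (bootstrap confidence intervals are a common alternative), and the function names are hypothetical.

```python
import math
from typing import Sequence


def percentile(values: Sequence[float], p: float) -> float:
    """Nearest-rank percentile, with p in (0, 100]."""
    if not values or not 0 < p <= 100:
        raise ValueError("need non-empty values and 0 < p <= 100")
    ordered = sorted(values)
    rank = math.ceil(p / 100 * len(ordered))
    return ordered[rank - 1]


def mcnemar_exact(baseline_correct: Sequence[bool], candidate_correct: Sequence[bool]) -> float:
    """Two-sided exact McNemar p-value for paired pass/fail results on the same prompts."""
    if len(baseline_correct) != len(candidate_correct):
        raise ValueError("results must be paired prompt-by-prompt")
    # Only discordant pairs (one model right, the other wrong) carry information.
    b = sum(1 for x, y in zip(baseline_correct, candidate_correct) if x and not y)
    c = sum(1 for x, y in zip(baseline_correct, candidate_correct) if y and not x)
    n = b + c
    if n == 0:
        return 1.0
    # Under H0 each discordant pair is a fair coin flip: Binomial(n, 0.5).
    tail = sum(math.comb(n, i) for i in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2 * tail)
```

For example, if the candidate fixes 10 prompts the baseline got wrong and breaks none, the p-value is 2/1024 (about 0.002).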

Historical Pricing Snapshot & Note

OpenAI’s public pages previously listed GPT-4o mini at $0.15 per 1M input tokens and $0.60 per 1M output tokens, which many teams used for comparative calculations—always confirm real-time prices for your account.
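Converting per-1M-token prices into a per-call cost is a multiply-and-divide: each token count times its rate, divided by one million. The sketch plugs in the historical GPT-4o mini numbers above purely as an example; the 2,000-in/500-out token counts are made up for illustration, and `Pricing`/`cost_per_call` are hypothetical names. Substitute live prices from your account.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_1m: float   # USD per 1M input tokens
    output_per_1m: float  # USD per 1M output tokens


def cost_per_call(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one call: tokens x (price per 1M tokens) / 1,000,000."""
    return (input_tokens * p.input_per_1m + output_tokens * p.output_per_1m) / 1_000_000


# Historical GPT-4o mini announcement numbers; verify current pricing before use.
GPT_4O_MINI_EXAMPLE = Pricing(input_per_1m=0.15, output_per_1m=0.60)

# Hypothetical workload: 2,000 input tokens and 500 output tokens per call.
per_call = cost_per_call(GPT_4O_MINI_EXAMPLE, 2_000, 500)
print(f"${per_call:.6f} per call")
```

With these example numbers a call costs $0.0003 for input plus $0.0003 for output, or $0.0006 in total.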

Migration Checklist

Use this checklist as a playbook when migrating from GPT-3.5-Turbo to a candidate model.

Prep

- Capture baseline metrics: latency (p95), token spend, error rates, and human-rated accuracy.

- Freeze a snapshot of current prompts & training data to use as a canonical test set.

Local Testing

- Re-run canonical prompts on the candidate model(s) using identical settings.

- Re-tokenize prompts to measure token changes.

- Record latency and output differences.

Quality Evaluation

- Human-label a random sample to check correctness/hallucination.

- Measure downstream KPIs (task-specific).

- Update prompts iteratively (system message & few-shot examples).

A/B experiment

- Deploy the candidate as 1–5% of traffic.

- Monitor metrics: user satisfaction, error/rollback rate, API cost delta.

- Ensure rollback path: if metrics degrade, switch traffic back and analyze failures.

Rollout & ops

- Gradual ramp (5→25→50→100%).

- Observe long-tail cases and refine prompt recipes.

- Reconfigure observability: new token counters, new model labels in logs, and updated costs in billing dashboards.
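Both the 1–5% A/B slice and the 5→25→50→100% ramp can be driven by hashing a stable identifier into buckets, so each user consistently sees the same model and raising the percentage only adds users. A minimal sketch; `bucket`, `route_model`, the salt, and the `"candidate-model"` label are hypothetical. SHA-256 is used instead of Python's built-in `hash()`, which is randomized per process for strings.

```python
import hashlib

BUCKETS = 10_000


def bucket(user_id: str, salt: str = "gpt-migration-v1") -> int:
    """Map a stable ID to [0, BUCKETS) with SHA-256, so it is identical across processes."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % BUCKETS


def route_model(
    user_id: str,
    candidate_pct: float,
    baseline: str = "gpt-3.5-turbo",
    candidate: str = "candidate-model",  # hypothetical label for the model under test
) -> str:
    """Send roughly `candidate_pct` percent of users to the candidate model.

    Raising the percentage only adds users, so the ramp never flips anyone
    back to the baseline; setting it to 0 is the rollback path.
    """
    if not 0 <= candidate_pct <= 100:
        raise ValueError("candidate_pct must be between 0 and 100")
    return candidate if bucket(user_id) < candidate_pct / 100 * BUCKETS else baseline
```

Changing the salt reshuffles users, which is useful when starting a fresh experiment rather than continuing a ramp.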

Troubleshooting & Production Hardening

Rate limiting & Backoff

- Use exponential backoff with jitter for 429 and server errors.

- Use a circuit breaker for repeated failures.
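A circuit breaker stops calling the API after repeated failures, fails fast during a cool-down, then lets a trial request through (the usual closed, open, half-open cycle). This is a minimal single-threaded sketch; `CircuitBreaker`, `CircuitOpenError`, the thresholds, and the injectable clock are hypothetical choices, and concurrent use would need a lock.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised instead of calling the API while the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 5, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("circuit open; failing fast")
            # Cool-down elapsed: half-open, so this call is the trial request.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            # A failed trial, or too many consecutive failures, (re)opens the circuit.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result
```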

Schema & Response Validation

- Use JSON schema libraries and reject invalid outputs.

- Keep a human-in-the-loop fallback for safety-critical outputs.
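Schema enforcement comes down to parsing strictly and rejecting anything that does not match. Libraries such as `jsonschema` validate against a full JSON Schema; the dependency-free sketch below checks only required keys, types, and an allowed label set for a hypothetical ticket-routing output of the form `{"label": ..., "confidence": ...}`. Invalid outputs raise, so the caller can retry with a stricter prompt or route to a human.

```python
import json
from typing import Any, Dict

# Hypothetical output contract for a ticket-routing prompt.
ALLOWED_LABELS = {"billing", "technical", "account", "other"}


class InvalidOutput(ValueError):
    """Raised when the model output does not match the expected schema."""


def parse_routing_output(raw: str) -> Dict[str, Any]:
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise InvalidOutput(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise InvalidOutput("top-level value must be an object")
    extra = set(data) - {"label", "confidence"}
    if extra:
        raise InvalidOutput(f"unexpected keys: {sorted(extra)}")
    label = data.get("label")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        raise InvalidOutput(f"label must be one of {sorted(ALLOWED_LABELS)}")
    conf = data.get("confidence")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(conf, bool) or not isinstance(conf, (int, float)) or not 0 <= conf <= 1:
        raise InvalidOutput("confidence must be a number in [0, 1]")
    return data
```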

Observability

- Log token counts, prompt hashes (not raw PII), latencies, and error types.

- Alert on deviations in hallucination rates, latency p95, and token usage trends.
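A log record following these bullets might carry the model label, token counts, latency, error type, and a fingerprint of the prompt instead of its text. Field names here are hypothetical. Because a plain hash of a short or guessable prompt can be reversed by brute force, this sketch uses a keyed hash (HMAC-SHA256) with a secret that, by assumption, comes from your secret store.

```python
import hashlib
import hmac
import json
import time
from typing import Optional

# Assumption: loaded from a secret store in practice; hard-coded here only for illustration.
LOG_HASH_KEY = b"replace-with-secret-from-your-secret-store"


def prompt_fingerprint(prompt: str) -> str:
    """Keyed hash so identical prompts can be grouped without logging raw text."""
    return hmac.new(LOG_HASH_KEY, prompt.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def build_log_record(model: str, prompt: str, input_tokens: int, output_tokens: int,
                     latency_ms: float, error_type: Optional[str] = None) -> str:
    record = {
        "ts": time.time(),
        "model": model,  # model/snapshot label for per-model dashboards
        "prompt_hash": prompt_fingerprint(prompt),
        "input_tokens": input_tokens,
        "output_tokens": output_tokens,
        "latency_ms": round(latency_ms, 1),
        "error_type": error_type,  # e.g. "rate_limit", "timeout", "schema_invalid"
    }
    return json.dumps(record)
```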

Safety & Moderation

- Pass user-facing outputs through a lightweight moderation filter (text + image if multimodal).

- Rate-limit or gate high-risk actions requiring model outputs.

Snapshot/Pinning for Reproducibility

- Use pinned model snapshots if available for reproducible behavior in critical systems.

Compression + Retrieval Hybrid

- Chunk size recommendations: ~1,000–1,500 tokens per chunk for semantic coherence.

- Overlap windows: 50–150 tokens for boundary safety.

- Retriever top-K: start with K=3; validate for recall/precision tradeoff.

- Compressor: run an extractive summary on retrieved chunks before the generative call to reduce token load.
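The chunking and retrieval settings above can be sketched over an already-tokenized document (token IDs or any token list). This minimal sketch assumes you already have a tokenizer and an embedding model; `chunk_tokens` and `top_k` are hypothetical helpers, and brute-force cosine similarity over plain lists stands in for whatever vector store you actually use.

```python
import math
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def chunk_tokens(tokens: Sequence[T], size: int = 1200, overlap: int = 100) -> List[List[T]]:
    """Split tokens into windows of `size`, each sharing `overlap` tokens with the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks: List[List[T]] = []
    for start in range(0, len(tokens), step):
        chunks.append(list(tokens[start:start + size]))
        if start + size >= len(tokens):
            break  # this window already reaches the end of the document
    return chunks


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec: Sequence[float], chunk_vecs: Sequence[Sequence[float]],
          k: int = 3) -> List[Tuple[int, float]]:
    """Return (chunk_index, similarity) for the k most similar chunks, best first."""
    scored = [(i, cosine(query_vec, v)) for i, v in enumerate(chunk_vecs)]
    return sorted(scored, key=lambda s: s[1], reverse=True)[:k]
```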

GPT-3.5-Turbo Pricing Comparison Table

Important: prices change. Use your account pricing page for canonical numbers. Below are templates and example historical numbers; do not treat them as current without verifying.

| Model | Input cost (per 1M tokens) | Output cost (per 1M tokens) | Notes |
| --- | --- | --- | --- |
| GPT-3.5-Turbo (example) | See pricing page | See pricing page | Legacy chat model; verify in the dashboard. |
| GPT-4o mini (announcement numbers) | $0.15 | $0.60 | Announced as cheaper and multimodal; verify live pricing. |

GPT-3.5-Turbo Pros & Cons

Pros

- Cost-effective for many chat tasks.

- Mature ecosystem of prompts and community knowledge.

- Predictable behavior for short context tasks.

Cons

- Newer models can be cheaper/more capable for many tasks (run benchmarks).

- Smaller context window than the largest modern models.

- Hallucination risk — validate critical facts.

GPT-3.5-Turbo FAQs

Q: Is GPT-3.5-Turbo going away?

A: Not abruptly. OpenAI maintains GPT-3.5 variants, but it also offers newer options and may change the available backends over time. Check your OpenAI dashboard for the exact lineup available to your account.

Q: Which GPT-3.5 models support fine-tuning?

A: Some GPT-3.5 family variants and pinned snapshots support fine-tuning. Check the official fine-tuning documentation for the specific model you are using.

Q: Should I switch to GPT-4o mini?

A: Test first. GPT-4o mini was announced as cheaper and often more capable for certain tasks. Re-run representative prompts and compare token usage, accuracy, and latency on your dataset.

Q: How can I reduce hallucinations?

A: Enforce response schema validation, use retrieval-augmented generation with grounded source text, ask for citations, and programmatically verify facts for critical outputs.
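One inexpensive programmatic check: ask the model to return, for each claim, a verbatim quote and the ID of the retrieved source it came from, then confirm every quote really appears in that source. This catches fabricated citations, not faulty reasoning over real ones. The `{"claim", "quote", "source_id"}` format and `unsupported_claims` are hypothetical.

```python
import re
from typing import Dict, List


def _normalize(text: str) -> str:
    # Collapse whitespace and lowercase so line wrapping or case differences don't cause false alarms.
    return re.sub(r"\s+", " ", text).strip().lower()


def unsupported_claims(claims: List[Dict[str, str]], sources: Dict[str, str]) -> List[Dict[str, str]]:
    """Return claims whose quote is missing or not found verbatim in the cited source."""
    bad = []
    for claim in claims:
        source = sources.get(claim.get("source_id", ""))
        quote = claim.get("quote", "")
        if not source or not quote or _normalize(quote) not in _normalize(source):
            bad.append(claim)
    return bad
```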

Conclusion

GPT-3.5-Turbo remains a practical baseline for many NLP production systems where latency, predictable behavior, and existing prompt investments matter. The model is well suited to short-to-medium tasks such as chat assistants, transcript summarization, ticket routing, and short code patches. However, the model landscape evolves rapidly: newer families (GPT-4o mini, GPT-4, GPT-5) have introduced improved reasoning, multimodality, and in some cases lower per-task cost, so every team should benchmark candidate replacements against its own workloads. The right engineering approach is empirical: measure your current baseline, re-run representative test sets on candidates, re-tokenize flows for cost estimation, and A/B test in production with strong observability and rollback plans. Use schema enforcement, RAG, and robust SRE patterns to reduce hallucinations and optimize token spend. Always confirm live pricing on OpenAI's pricing pages before making procurement decisions.