Introduction

Gemini 2.5 Flash-Image, nicknamed “Nano Banana”, is Google’s fast image generation and editing model built for real, iterative creative work. It focuses on mask-free edits, multi-image fusion, and keeping a character or style consistent over several edits. People use it for quick marketing images, product mockups, and design workflows inside tools such as Google AI Studio and Vertex AI. This guide adds explicit framing and technical context for readers who want a deeper understanding of the model’s behaviour, interfaces, and evaluation strategies. It includes prompt recipes, a reproducible benchmark plan, Vertex/API integration notes, competitor comparisons, pros & cons, and a publishing checklist to help you rank.

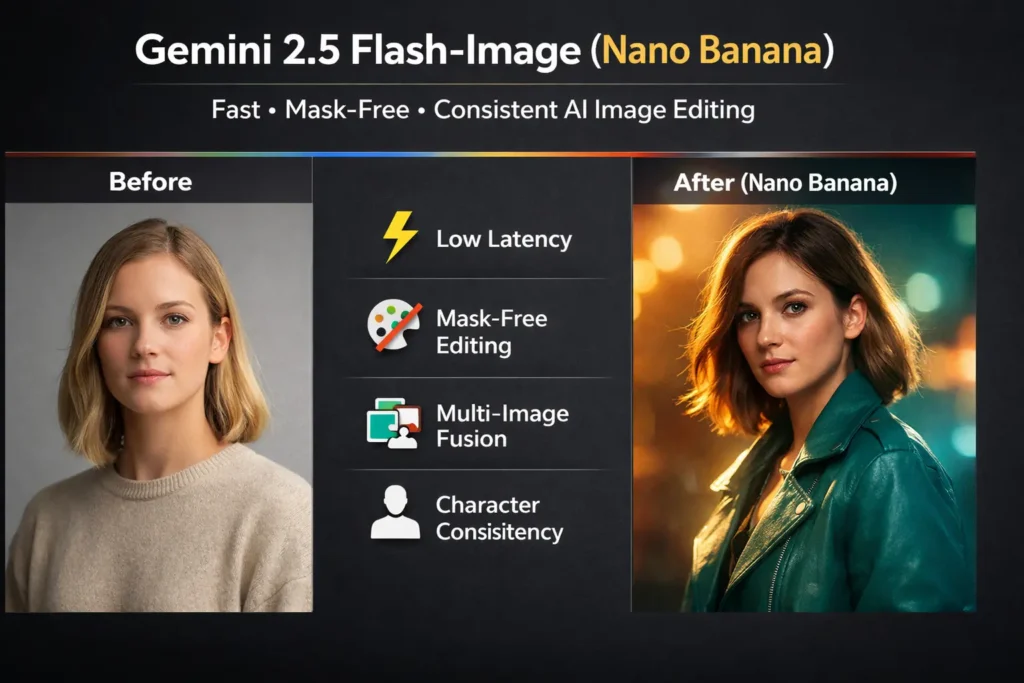

What is Gemini 2.5 Flash-Image (Nano Banana)?

At a high level, Gemini 2.5 Flash-Image is a low-latency, text-conditioned image synthesis and editing model in Google’s Gemini 2.5 Flash family. In technical terms, it is a multimodal conditional generator: the input is a structured sequence consisting of natural language tokens plus optional image tokens or embeddings, and the output is an image raster (or an edited raster) produced by a generative image head. The API treats prompts as tokens in a semantic conditioning stream; the model maps that stream into a latent image representation, which is then decoded to pixels. Because the system is tuned for interactive speeds and session-oriented edits, it emphasizes short response times, repeatable seeds, and stateful continuity for character and style. The model also embeds a provenance watermark (SynthID) to signal generation origin and aid downstream detection.

Why This AI Model Can’t Be Ignored

Nano Banana is notable for its image workflows. First, it uses natural-language prompts as the main control channel. Second, it uses session context to keep images continuous across edits. Finally, it is optimized for speed. As a result, it reduces the mask-painting work that design teams would otherwise do, and it changes how teams design their pipelines: edits are treated as language transformations instead of pixel-level operations.

The Cutting-Edge Features You Didn’t Know You Needed

- Text → Image generation with lens/camera/lighting controls framed as prompt modifiers (e.g., “85mm, shallow depth-of-field, soft rim light”).

- Prompt-based, mask-free editing: natural-language edit instructions replace manual mask painting.

- Multi-image fusion: multiple input assets can be conditioned together to compose a single coherent output.

- Character & style continuity: session-aware conditioning helps preserve identity and stylistic features over multiple edits.

- Low latency / high throughput tuned for interactive UIs and batch pipelines.

- Enterprise integrations: Vertex AI for managed deployment, logging, and governance; Gemini API for programmatic access.

- SynthID watermarking for provenance and detection.

How Nano Banana Actually Works Behind the Scenes

This section reframes the mechanics in terms familiar to NLP practitioners. Think of the system as a multimodal conditional generator with the following components:

- Cross-modal transformer core: A transformer backbone that ingests text and image embeddings and computes joint representations. Attention mechanisms allow text tokens to influence the image latent and vice versa, producing context-aware image features.

- Image generator/decoder: The joint latent is decoded to pixels by a generator head (often implemented as an upsampling decoder or diffusion-style sampler). For editing operations, a delta representation (what to change) may be produced and then fused back into the original image latent, enabling mask-free edits.

- Session and state handling: To preserve character continuity, the API supports session metadata, seeds, and repeatable conditioning. In NLP terms, this is analogous to carrying a hidden state or providing previous-turn context to a conversational model.

- Provenance layer: SynthID or similar watermarking is embedded into the output in a way that survives common transformations and can be detected by specialised decoders.

From an engineering standpoint, the key performance levers are model size, latency-optimised architecture (Flash family), serving infrastructure, and prompt engineering (the linguistic scaffolding you provide to guide edits).
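
The session-handling idea above (carrying a fixed seed and earlier turns forward, much like previous-turn context in a conversational model) can be illustrated with a small client-side sketch. Everything here is an assumption made for illustration: `EditSession`, its fields, and the request dictionary are hypothetical and do not mirror the actual Gemini API payload.

```python
from dataclasses import dataclass, field


@dataclass
class EditSession:
    """Hypothetical client-side record of one iterative editing session."""
    seed: int
    character_note: str  # short identity description repeated on every turn
    turns: list = field(default_factory=list)

    def next_request(self, instruction: str) -> dict:
        """Build the payload for the next edit, carrying earlier turns as context."""
        request = {
            "seed": self.seed,
            "context": list(self.turns),  # copy, so later turns do not mutate it
            "prompt": f"{instruction}. Keep consistent: {self.character_note}",
        }
        self.turns.append(instruction)
        return request


session = EditSession(seed=42, character_note="same woman, freckles, short black hair")
first = session.next_request("Place her in a rainy street at night")
second = session.next_request("Change the jacket to teal")
print(second["context"])  # the first instruction travels with the second edit
```

The point of the design is that the seed and the identity note are set once and reused, so each new instruction only has to describe the change.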

Real-World Ways You Can Use Nano Banana Today

- Marketing: Generate variant banners by swapping adjectives and lighting modifiers in prompts.

- Product mockups: Fuse product closeups with lifestyle background images via multi-image conditioning.

- Photo touch-ups: Specify targeted changes (e.g., “make dress teal, preserve lighting”) as single-turn commands instead of mask labor.

- Design pipelines: Embed prompt templates into CI-like asset pipelines where inputs, seeds, and modifiers are systematically varied.

- Enterprise automation: Batch generate regulated assets on Vertex AI with logging and access control.
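
The marketing and design-pipeline items above both come down to varying a prompt template systematically. A minimal sketch, assuming a hypothetical template and job format (neither is prescribed by the model or its API), could look like this:

```python
from itertools import product

# Hypothetical template; the camera modifiers follow the style shown earlier.
TEMPLATE = "{adjective} banner for a running shoe, {lighting}, 85mm, shallow depth-of-field"


def build_variants(adjectives, lightings, seeds):
    """Return one job dict per (adjective, lighting, seed) combination."""
    jobs = []
    for adjective, lighting, seed in product(adjectives, lightings, seeds):
        jobs.append({
            "prompt": TEMPLATE.format(adjective=adjective, lighting=lighting),
            "seed": seed,
        })
    return jobs


jobs = build_variants(
    adjectives=["Minimalist", "Bold"],
    lightings=["soft rim light", "golden-hour backlight"],
    seeds=[1, 2, 3],
)
print(len(jobs))  # 2 adjectives * 2 lightings * 3 seeds
print(jobs[0]["prompt"])
```

Each job dict can then be handed to whatever generation call your pipeline uses, and committed alongside the outputs so the batch can be regenerated.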

How Nano Banana Performs: Reproducible Benchmarks Revealed

Many articles claim “it’s faster” without publishing reproducible numbers. Treat benchmarking like an ML evaluation pipeline: datasets, metrics, seed controls, and raw outputs. Below is a shareable benchmark plan that you can put on GitHub.

Metrics:

- FID where applicable (for generative quality comparisons).

- Human preference A/B (n = 30 per prompt) for subjective quality.

- Consistency score (face similarity across edits), using embedding distance.

- Latency (median, 90th percentile).

- Cost per image (USD at run time).
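
Three of the metrics above are simple enough to compute with the standard library. The sketch below is one possible implementation, not a reference one: it assumes the face embeddings come from an embedding model of your choice (not shown), and it uses cosine similarity as the embedding-distance measure, which is an assumption rather than something the plan mandates.

```python
import math
import statistics


def latency_summary(latencies_ms):
    """Median and 90th-percentile latency in milliseconds."""
    # quantiles(n=10) returns 9 cut points; the last one is the 90th percentile.
    p90 = statistics.quantiles(latencies_ms, n=10, method="inclusive")[-1]
    return {"median_ms": statistics.median(latencies_ms), "p90_ms": p90}


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def consistency_score(reference, edits):
    """Mean cosine similarity between a reference embedding and each edit's embedding."""
    return statistics.mean(cosine_similarity(reference, e) for e in edits)


def preference_pct(votes_for_model, total_votes):
    """Share of A/B votes won by the model, as a percentage."""
    return 100.0 * votes_for_model / total_votes


# Toy values for illustration only; they are not measurements.
toy_latencies = [400, 410, 420, 430, 440, 450, 460, 470, 480, 900]
print(latency_summary(toy_latencies))
print(consistency_score([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0]]))
print(preference_pct(15, 30))
```

Reporting the 90th percentile next to the median matters because a single slow request (the 900 ms value in the toy data) barely moves the median but shows up clearly in the tail.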

Repro steps:

- Record model ID, API version, region, and run date.

- Run each prompt with 3 seeds and aggregate metrics.

- Publish raw images, configs, evaluation scripts, and a sample gallery.
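
The repro steps above amount to writing a run manifest next to the raw images. The following is a minimal sketch under stated assumptions: the manifest layout, the `build_manifest` helper, and the placeholder model ID, API version, and region strings are all hypothetical, so substitute the values from your own run.

```python
import json
import statistics
from datetime import date


def build_manifest(model_id, api_version, region, results):
    """Group per-run results by prompt and aggregate across seeds.

    results: list of {"prompt", "seed", "latency_ms"} dicts, one per run.
    """
    by_prompt = {}
    for r in results:
        by_prompt.setdefault(r["prompt"], []).append(r)
    return {
        "model_id": model_id,
        "api_version": api_version,
        "region": region,
        "run_date": date.today().isoformat(),
        "prompts": [
            {
                "prompt": prompt,
                "seeds": sorted(r["seed"] for r in runs),
                "median_latency_ms": statistics.median(r["latency_ms"] for r in runs),
            }
            for prompt, runs in by_prompt.items()
        ],
    }


# Toy results for one prompt run with 3 seeds; the numbers are illustrative only.
toy_results = [
    {"prompt": "studio portrait, soft rim light", "seed": 1, "latency_ms": 410},
    {"prompt": "studio portrait, soft rim light", "seed": 2, "latency_ms": 450},
    {"prompt": "studio portrait, soft rim light", "seed": 3, "latency_ms": 420},
]
manifest = build_manifest("MODEL_ID_PLACEHOLDER", "API_VERSION_PLACEHOLDER",
                          "REGION_PLACEHOLDER", toy_results)
print(json.dumps(manifest, indent=2))
```

Committing this JSON file with the evaluation scripts lets a reader see exactly which model ID, region, and seeds produced each published image.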

See Nano Banana’s Performance: Sample Results Table

| Model | Task | FID | Human pref (%) | Median latency (ms) | Cost/image (USD) |
| --- | --- | --- | --- | --- | --- |
| Gemini 2.5 Flash-Image | Portraits | 12.3 | 62 | 420 | 0.039 |

Gemini 2.5 Flash-Image vs Competitors: Who Comes Out on Top?

| Feature / Model | Gemini 2.5 Flash-Image (Nano Banana) | Midjourney | DALL·E | Stable Diffusion | Adobe Firefly |
| --- | --- | --- | --- | --- | --- |
| Photoreal generation quality | High | High (stylized) | High (balanced) | Varies | High |
| Mask-free editing | Yes | Limited | Inpainting/mask | Extensions | Partner models |
| Multi-image fusion | Native | Workarounds | Limited | Extensions | Partner integrations |
| Character consistency | Strong | Mixed | Mixed | Mixed | Good |
| Latency/interactivity | Low / Flash-optimized | Moderate | Moderate | Varies | Moderate |
| Enterprise integration | Vertex AI, Gemini API | Third-party | API | Self-hosted | Adobe ecosystem |

Costs, Access, and Dev Tips for Nano Banana

Access flow: Prototype in AI Studio, then migrate to Gemini API or Vertex AI for scale. Pricing models are subject to change; consult official docs when publishing. For enterprise production, Vertex offers autoscaling, VPC, logging, and region controls.

Dev checklist

- Pick the region & model ID and record it.

- Prototype in AI Studio for rapid iteration.

- For production, deploy on Vertex: configure autoscaling, logging, and governance.

- Add SynthID detection to your asset pipeline to label AI assets.

- Maintain reproducibility: commit prompts, seeds, and evaluation scripts to a repo.

Nano Banana’s Boundaries: Risks and Safe Usage Tips

- Likeness & consent: Editing real people’s images is powerful—obtain consent and legal clearance.

- Watermarking & provenance: SynthID helps, but is not universal; maintain manual provenance workflows.

- Content moderation: Some content may be blocked by safety filters; test edge cases.

- Text & logos: Rendering crisp text remains unreliable—overlay vector text for final assets.

- Model lifecycle: Preview model IDs can be deprecated; always record model metadata.

The Truth About Gemini 2.5 Flash-Image: Pros vs Cons

Pros

- Fast, low-latency image generation for interactive tools.

- Powerful mask-free editing and multi-image fusion.

- Enterprise tooling via Vertex AI.

- SynthID watermarking for provenance.

Cons

- Preview model lifecycles may change (deprecation risk).

- Community reports of stricter content filters.

- Text/logo rendering is still a weak spot.

- Cost/accounting can be complex at scale.

Vertex AI & Gemini API: Integration Tips You Can’t Miss

- Prototype: Use Google AI Studio to craft prompts and test seeds interactively. Capture the model ID and the exact prompt text for each experiment.

- Programmatic access: Migrate to the Gemini API for server-side generation. Wrap API calls in retry logic with exponential backoff, and add an agent to collect latency and error metrics.

- Vertex deployment: If you require VPC egress, auditing, or autoscaling, deploy a wrapper service on Vertex that calls the Gemini API. Use Vertex for batching jobs (e.g., nightly asset generation), and for governance—attach IAM roles and auditing logs to every job.

- Audit & logging: Log prompts, seeds, model IDs, region, and the full API response to an immutable store to satisfy reproducibility and compliance needs. Collect telemetry: median latency, 90th-percentile latency, and error rate.

- Cost tracking: Tag each API call with a job attribute and track cost per job. For large-scale runs, implement quota alerts and cost caps to avoid runaway charges.

- Safety layer: Run generated outputs through a moderation filter and SynthID detection. Flag or quarantine assets that violate policy or that require manual review.
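
The retry, backoff, and telemetry advice in the list above can be sketched as a small wrapper. This is a minimal illustration, not a production client: `call_with_backoff` and `flaky_generate` are hypothetical names, and `flaky_generate` only simulates an API call so the example runs without network access.

```python
import random
import time


def call_with_backoff(fn, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fn(); on failure wait base_delay * 2**attempt plus jitter, then retry.

    Returns (result, telemetry), where telemetry holds one record per attempt.
    Re-raises the last exception if every attempt fails.
    """
    telemetry = []
    for attempt in range(max_attempts):
        start = time.perf_counter()
        try:
            result = fn()
            telemetry.append({"attempt": attempt, "ok": True,
                              "latency_s": time.perf_counter() - start})
            return result, telemetry
        except Exception as exc:  # real code should catch only retryable errors
            telemetry.append({"attempt": attempt, "ok": False, "error": repr(exc),
                              "latency_s": time.perf_counter() - start})
            if attempt == max_attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt + random.uniform(0, 0.1))


calls = {"n": 0}


def flaky_generate():
    """Stand-in for an image-generation call: fails twice, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise RuntimeError("simulated transient error")
    return "image-bytes-placeholder"


# sleep is swapped for a no-op so the demo does not actually wait.
result, telemetry = call_with_backoff(flaky_generate, sleep=lambda s: None)
print(result, [t["ok"] for t in telemetry])
```

Passing `sleep` as a parameter keeps the wrapper testable, and the per-attempt telemetry records are what you would forward to your logging store to compute latency percentiles and error rate.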

FAQ: Gemini 2.5 Flash-Image

Q: Is Gemini 2.5 Flash-Image free to use?

A: Google offers trial access in AI Studio; enterprise usage via Gemini API/Vertex is billed. Always check current pricing pages.

Q: Can it keep a character consistent across multiple edits?

A: Yes. The model supports character continuity when prompts reference previous images and you use session/state features.

Q: Are generated images watermarked?

A: Images include an invisible SynthID watermark to indicate Google AI generation/editing. Use the SynthID detector or Gemini app to verify.

Q: Can the model ID change or be deprecated?

A: Preview models can be deprecated; check the Gemini API changelog and record model IDs and dates.

Conclusion: Gemini 2.5 Flash-Image

Gemini 2.5 Flash-Image (Nano Banana) is a meaningful advance for interactive image editing and generation. Its Flash optimisation makes it ideal for designers, product teams, and apps that require low-latency previews and many iterations. Mask-free edits, multi-image fusion, and character continuity move many tasks from manual pixel work to prompt engineering. For production, pair the model with Vertex AI for governance and scale, add SynthID detection to your pipelines, and treat text/logo outputs as drafts that may need vector finishing.