Introduction

Gemini 2.5 Pro is a high-capability, multimodal, large-context generative model from Google DeepMind, designed to operate as a scalable reasoning engine across long structured inputs and heterogeneous signal modalities. From an NLP systems perspective, the defining attribute is the 1,048,576-token (roughly 1M) context window. It changes many classic retrieval-augmented generation patterns by allowing raw or lightly preprocessed long-form sequences (books, long transcripts, multi-file code snapshots) to be passed through a single model instance or a tightly coupled chunking pipeline. Architecturally, Gemini 2.5 Pro couples large transformer-style attention modules with multimodal encoders that align text, visual frames, audio spectrogram embeddings, and document structure into a joint latent space. This enables cross-modal attention, timestamp-aware summarization, and structured JSON outputs that are amenable to programmatic validation.

This guide reframes product-level claims in NLP terms: tokenization and context management strategies, attention and memory mechanisms, evaluation metrics and reproducible benchmark recipes, model governance and safety testing, deployment integration (Vertex AI / AI Studio), cost/throughput tradeoffs, and concrete prompt + pipeline blueprints you can use to validate the model on real production tasks.

Gemini 2.5 Pro — Why Everyone’s Talking About This AI Secret

- Yes, if your problem is fundamentally large-context reasoning (full-book summarization, cross-file code reasoning, long legal briefs) where smaller context windows (e.g., 8k–128k tokens) force heavy engineering and retrieval scaffolding.

- Yes, if you need native multimodal fusion and timestamp-aware outputs (video + transcript → structured summary, audio → time-aligned annotation).

- Caveat: frontier models with expanded capabilities still require independent factuality evaluation, calibration tests, and robust validation pipelines before handling regulated or safety-critical domains.

Gemini 2.5 Pro — What’s Really Behind Its 1M‑Token Power?

- Context scale — a practical ~1M token input window that changes how retrieval and chunking are designed. This enables passing entire long documents or concatenated multimodal sequences without losing global attention scope.

- Modal breadth — native encoders for images, audio, video, and PDFs that map non-text inputs into modality-specific embeddings and then into a shared latent space for cross-attention and joint decoding.

Key Implications:

- Tokenization & encoding: Careful tokenization strategy matters (byte-level or subword tokenizers optimized for code + natural language + doc markup). Pre-tokenization normalization and binary attachments (images/audio) are turned into embeddings via modality-specific front-ends.

- Attention & memory: To manage 1M tokens efficiently, implementations typically mix dense attention with memory/compressed representations, sparse patterns, or blockwise computation to keep compute tractable.

- Structured outputs: Built-in schema/function-calling support means models can be asked to produce machine-parseable JSON or other constrained forms that are safer and easier to validate.
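To make the "keep compute tractable" point concrete, here is a rough back-of-the-envelope sketch comparing the number of attention scores for dense attention against a simple block-local pattern. The block size (4096), the 2-byte score width, and the single-head, single-layer framing are illustrative assumptions; nothing here describes how Gemini 2.5 Pro is actually implemented.

```python
def dense_score_entries(n_tokens: int) -> int:
    """Number of query-key scores when every token attends to every token."""
    return n_tokens * n_tokens


def block_local_score_entries(n_tokens: int, block: int) -> int:
    """Number of scores when each token attends only within its own block."""
    full_blocks, remainder = divmod(n_tokens, block)
    return full_blocks * block * block + remainder * remainder


N_TOKENS = 1_048_576      # context size quoted in this guide
BLOCK = 4096              # hypothetical block size, chosen for illustration
BYTES_PER_SCORE = 2       # assumes 16-bit scores, one head, one layer

dense = dense_score_entries(N_TOKENS)
local = block_local_score_entries(N_TOKENS, BLOCK)

print(f"dense:       {dense * BYTES_PER_SCORE / 2**40:.1f} TiB of scores")
print(f"block-local: {local * BYTES_PER_SCORE / 2**30:.1f} GiB of scores")
print(f"reduction:   {dense // local}x")
```

Practical kernels avoid materializing the full score matrix, but the number of score computations for dense attention still grows with the square of the sequence length, which is why sparse, blockwise, or compressed schemes are attractive at this scale.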

Gemini 2.5 Pro — What Makes the ‘Pro’ Tier Truly Game-Changing

- Context Window: Significantly larger global attention budget versus smaller variants — this reduces the need for external retrieval for many tasks.

- Model capacity & decoder behavior: Higher parameterization and training regimes tuned for stepwise reasoning and code understanding.

- Operational features: Advanced inference modes (low-latency vs deep-reasoning), schema enforcement, finer-grained versioning and enterprise controls.

For engineers, the key takeaway is: you can simplify some pipelines (less retrieval glue) but must adopt new operational controls (monitoring, hallucination detectors, and schema validators) because higher context amplifies both capability and risk vectors.

Gemini 2.5 Pro — The Surprising Upgrades You Haven’t Heard About

- Practical 1M-token inputs: Earlier families used chunking + retrieval as default; Pro opens the possibility of global attention over extremely large sequences. This affects memory usage, batch sizing, and latency profiles.

- Improved code understanding: Training data and fine-tuning for multi-file code bases, AST-aware losses, and longer code context permit cross-file refactors and end-to-end code reasoning (the model can see multiple files at once rather than operate file-by-file).

- “Deep Think” / stepwise reasoning: Explicit instruction-following modes to produce multi-step rationales or chain-of-thought style decompositions; for production, prefer discrete schema outputs rather than free-form chain-of-thought unless auditable.

- Multimodal temporal alignment: Better fusion of video frames, audio segments, and transcripts into time-aware outputs (timestamped summaries, scene detection).

Availability You Must Know — Gemini 2.5 Pro Insider Tips

Gemini 2.5 Pro is primarily available through Google’s managed platforms (AI Studio, Vertex AI, Gemini app tiers) with staged regional rollout and gated enterprise access. From a production readiness view:

- Enterprise path: Vertex AI offers enterprise-grade logging, IAM, VPC egress controls, and audit trails that are essential for regulated workloads.

- Experimentation: AI Studio and app sandboxes are good for R&D; production should run through Vertex or a managed enterprise integration to ensure observability and compliance.

- Model revisions: Treat model version IDs as first-class artifacts — changes in revisions can alter behavior substantially; keep deterministic evaluation harnesses.
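One way to treat model revisions as first-class artifacts is to store outputs per revision and diff them case by case. The sketch below assumes you have already collected outputs for a fixed set of evaluation cases; the revision labels and case IDs are hypothetical, and exact-match comparison is a deliberately strict choice (a real harness would likely also compare task metrics with a tolerance).

```python
import hashlib
import json
from typing import Any, Dict, List, Tuple


def fingerprint(record: Any) -> str:
    """Stable hash of a JSON-serializable output, independent of key order."""
    payload = json.dumps(record, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def diff_runs(baseline: Dict[str, Any],
              candidate: Dict[str, Any]) -> List[Tuple[str, str]]:
    """Return (case_id, status) for every baseline case that is missing or changed."""
    changes = []
    for case_id in sorted(baseline):
        if case_id not in candidate:
            changes.append((case_id, "missing"))
        elif fingerprint(baseline[case_id]) != fingerprint(candidate[case_id]):
            changes.append((case_id, "changed"))
    return changes


# Hypothetical stored outputs for two model revisions on the same eval cases.
run_rev_a = {"case-1": {"label": "refund"}, "case-2": {"label": "billing"}}
run_rev_b = {"case-1": {"label": "refund"}, "case-2": {"label": "shipping"}}

print(diff_runs(run_rev_a, run_rev_b))
```

Storing fingerprints alongside the revision ID keeps the regression record small while still flagging every behavioral change for review.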

What You Must Know First — Gemini 2.5 Pro Limits & Strengths

- Input context window: 1,048,576 tokens (roughly 1M). This enables whole-document passes, but you should still design for chunk overlap, anchors, and section-level summaries to bound working memory and reduce hallucination.

- Output cap: Deployments often enforce output caps (e.g., tens of thousands of tokens). If you need multi-hour transcripts or book-length text as output, design streaming or paginated output pipelines.

- Modalities: Text, images, audio, video, and PDFs as native modalities — ensure your ingestion pipeline normalizes timestamps, DPI/resolution, and audio sampling to match model front-end expectations.

- Developer tooling: Function-calling, schema outputs, batch APIs, and native file uploads. Use strict JSON schemas and programmatic validators to reduce downstream failure modes.
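The paginated-output idea from the list above can be sketched as a bounded continuation loop. This is a minimal illustration, not a vendor API: `generate` is a hypothetical callable standing in for whatever client you use, and the `<END>` marker and the 500-character carry-over are assumptions chosen for the example.

```python
from typing import Callable, List


def paginate_output(generate: Callable[[str, int], str],
                    prompt: str,
                    max_tokens_per_call: int,
                    max_pages: int = 50,
                    end_marker: str = "<END>") -> List[str]:
    """Request output page by page until the end marker or the page limit."""
    pages: List[str] = []
    context = prompt
    for _ in range(max_pages):
        page = generate(context, max_tokens_per_call)
        finished = end_marker in page
        pages.append(page.replace(end_marker, ""))
        if finished:
            break
        # Carry over only the tail of the last page so the prompt stays small.
        context = f"{prompt}\n\nContinue from where this left off:\n{page[-500:]}"
    return pages


def make_fake_model(total_pages: int) -> Callable[[str, int], str]:
    """Stand-in for a real model call; emits numbered pages, then the marker."""
    state = {"calls": 0}

    def fake(context: str, max_tokens: int) -> str:
        state["calls"] += 1
        suffix = "<END>" if state["calls"] >= total_pages else ""
        return f"page {state['calls']}{suffix}"

    return fake


pages = paginate_output(make_fake_model(3), "Write a long report.", 1000)
print(pages)
```

The `max_pages` bound matters as much as the marker: it guarantees the loop terminates even if the model never signals completion.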

Practical Engineering Notes:

- Chunk & index even with 1M tokens: Use anchors, summaries, and semantic indices for robust retrieval and update.

- Schema enforcement: Require machine-parseable outputs and validate with type-checkers / JSON schema libraries.

- Instrumentation: Log inputs, sampled outputs, and key evaluation metrics (factuality, hallucination rate, latency).
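As a minimal sketch of the schema-enforcement note above, the following validator checks a model response against a small set of required fields using only the standard library. The field names (`title`, `summary`, `citations`) are hypothetical; in production you would more likely use a full JSON Schema library, which this hand-rolled check only approximates.

```python
import json
from typing import Any, Dict

# Hypothetical output contract: field name -> required Python type.
REQUIRED_FIELDS = {"title": str, "summary": str, "citations": list}


def validate_model_output(raw: str) -> Dict[str, Any]:
    """Parse raw model text as JSON and enforce the field contract."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("top-level value must be a JSON object")

    errors = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in data:
            errors.append(f"missing field: {name}")
        elif not isinstance(data[name], expected):
            errors.append(f"{name}: expected {expected.__name__}")
    extra = sorted(set(data) - set(REQUIRED_FIELDS))
    if extra:
        errors.append(f"unexpected fields: {extra}")
    if errors:
        raise ValueError("; ".join(errors))
    return data


good = '{"title": "Q3 review", "summary": "Revenue grew.", "citations": ["p. 4"]}'
print(validate_model_output(good)["title"])

try:
    validate_model_output('{"title": 42}')
except ValueError as err:
    print("rejected:", err)
```

Rejecting unexpected fields as well as missing ones keeps silent schema drift from reaching downstream services.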

What the Numbers Really Say — Gemini 2.5 Pro in Action

A practical benchmarking approach:

- Define workload-specific metrics: Precision, recall, F1 for extraction tasks; ROUGE / BLEU for summarization (but complement with human evaluation for coherence and factuality); hallucination rate (binary flag per generated fact), calibration (confidence vs correctness), and latency/cost curves.

- Dataset curation: Construct in-domain datasets that mirror production inputs (long-form documents, multi-file repos, or video+transcript pairs).

- A/B testing: Compare Pro against Flash / Flash-Lite and competitor models using identical source inputs and prompt scaffolds. Tokenizers differ across vendors, so align on the same source text rather than on token counts.

- Reproducible harness: Freeze the model revision, seed RNGs where applicable, and snapshot preprocessing steps.
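The metrics named above can be computed with a few lines of standard-library Python. This is a sketch under simple assumptions: extraction results are compared as sets of strings, hallucination is a binary flag per generated fact, and calibration is summarized as expected calibration error over equal-width confidence bins (one common choice among several).

```python
from typing import List, Sequence, Set, Tuple


def precision_recall_f1(predicted: Set[str], gold: Set[str]) -> Tuple[float, float, float]:
    """Set-based precision, recall, and F1 for an extraction task."""
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def hallucination_rate(flags: Sequence[bool]) -> float:
    """Fraction of generated facts flagged as unsupported."""
    return sum(flags) / len(flags) if flags else 0.0


def expected_calibration_error(confidences: Sequence[float],
                               correct: Sequence[bool],
                               n_bins: int = 10) -> float:
    """Weighted average gap between mean confidence and accuracy per bin."""
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += len(bucket) / total * abs(mean_conf - accuracy)
    return ece


p, r, f1 = precision_recall_f1({"a", "b", "c"}, {"a", "b", "d"})
print(f"P={p:.3f} R={r:.3f} F1={f1:.3f}")
print("hallucination rate:", hallucination_rate([True, False, False, False]))
print("ECE:", expected_calibration_error([0.9, 0.9], [True, False]))
```

Automatic scores like these are a starting point; the human evaluation for coherence and factuality mentioned above still needs its own rubric.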

Why Experts Are Impressed — Gemini 2.5 Pro at Its Best

- Large-document summarization & long-context Q&A: Preserving global context and cross-referencing entities across far-apart sections.

- Temporal multimodal understanding: Mapping audio/video frames to transcript text and generating timestamped structured outputs.

- Codebase reasoning: Cross-file dependency resolution, refactor planning, and generating diffs when provided multi-file snapshots.

Unexpected Limitations Revealed — Gemini 2.5 Pro Feedback

- Version drift / non-determinism: Behavior can shift between model releases; ensure regression suites accompany rollouts.

- Hallucinations & verbosity: Even with larger context, hallucinations persist; prefer constrained outputs + downstream validators.

- Cost & latency at scale: 1M token passes are compute heavy; evaluate Flash variants or hybrid pipelines for throughput.

What Google Won’t Fully Reveal — Gemini 2.5 Pro Safety & Risks

- Regulatory scrutiny: Large models with broad capability attract attention from lawmakers and civil-society actors; model provenance, safety testing, and disclosure are critical.

- High-risk application controls: For medicine, finance, legal domains — require red-team evaluations, third-party audits, and human-in-the-loop verification.

- Operational controls: Access controls, differential logging, PII redaction, and data retention policies are mandatory for compliance.

Actionable Steps for Teams

- Conduct independent safety & adversarial testing (red-team).

- Add human-in-the-loop gates and explicit audit trails for regulated outputs.

- Use schema outputs + deterministic post-validation to reduce hallucination propagation.

Powerful Tricks You Didn’t Know — Gemini 2.5 Pro Features Exposed

- Deep Think / enhanced reasoning modes: Produces stepwise decompositions; keep internal rationales auditable.

- Function calling / schema outputs: Force the model to emit JSON or typed structures; validate strictly.

- Vertex AI & AI Studio integration: For experiment tracking, model hosting, autoscaling, and enterprise monitoring.

Pro tip: lock outputs behind function-calling handlers and run automatic validators in the ingestion pipeline; convert outputs to typed protobufs if necessary for downstream services.

What It Really Costs — Gemini 2.5 Pro Pricing & Access Secrets

- Pro tier = premium costs: Expect higher cost per token and per inference compute.

- Flash / Flash-Lite: Operate as lower-cost, faster variants for throughput-oriented workloads; suitable for production where token window needs are smaller or where retrieval-augmented flows handle context.

- Cost planning: Model call budgeting should include worst-case 1M-token passes; implement token limits, streaming outputs, and pre-filtering to avoid runaway bills.
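Worst-case budgeting can be sketched as a pre-flight check that runs before any request is sent. The per-million-token prices below are placeholders chosen for illustration, not actual Gemini pricing; substitute the current rates for your account and region.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    usd_per_million_input: float
    usd_per_million_output: float


def estimate_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Cost in USD for one call, given token counts and per-million rates."""
    return (input_tokens * pricing.usd_per_million_input
            + output_tokens * pricing.usd_per_million_output) / 1_000_000


def enforce_budget(input_tokens: int, max_output_tokens: int,
                   pricing: Pricing, budget_usd: float) -> float:
    """Return the worst-case cost, or raise if it exceeds the per-call budget."""
    worst_case = estimate_cost(input_tokens, max_output_tokens, pricing)
    if worst_case > budget_usd:
        raise ValueError(
            f"worst-case cost ${worst_case:.2f} exceeds budget ${budget_usd:.2f}")
    return worst_case


# Placeholder rates for illustration only; NOT real prices.
PLACEHOLDER = Pricing(usd_per_million_input=2.0, usd_per_million_output=10.0)

full_window = estimate_cost(1_048_576, 65_535, PLACEHOLDER)
print(f"full-window call at placeholder rates: ${full_window:.2f}")
```

Checking the worst case (maximum input plus the full output cap) rather than the expected case is what protects against runaway bills.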

Unlock Its Full Potential — Gemini 2.5 Pro Tips & Tricks

Core patterns:

- Chunk & index even with 1M tokens:

  - Chunk into semantically coherent blocks (e.g., chapters, functions, sections).

  - Maintain overlapping anchors (e.g., 256-512 token overlap) to preserve local continuity.

  - Store per-chunk embeddings in a vector DB (FAISS, Annoy, or managed vector index) to support targeted re-querying and provenance tracing.

- Use function-calling + schema enforcement:

  - Define strict JSON schemas and test with random generation fuzzers.

  - Use deterministic sampling (lower temperature) and post-validate.

- Prefer stepwise prompts for complex reasoning:

  - Ask the model for an outline, then expand sections; this reduces monolithic hallucination risk.

- Instrument & monitor:

  - Track hallucination rate vs cost, latency percentiles, token usage per request, and downstream business KPIs.
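The overlapping-anchor pattern above can be sketched as a sliding window over a token list. For simplicity this uses fixed-size windows and whitespace splitting as a stand-in tokenizer, both of which are assumptions; a real pipeline would use the model's tokenizer and cut at semantic boundaries such as chapters or functions.

```python
from typing import Dict, List, Sequence


def chunk_with_overlap(tokens: Sequence, chunk_size: int, overlap: int) -> List[Dict]:
    """Split tokens into windows of chunk_size that share `overlap` tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    step = chunk_size - overlap
    chunks: List[Dict] = []
    for start in range(0, len(tokens), step):
        # Keep the start offset so answers can be traced back to the source.
        chunks.append({"start": start, "tokens": list(tokens[start:start + chunk_size])})
        if start + chunk_size >= len(tokens):
            break  # this window already reaches the end of the input
    return chunks


# Whitespace split is only a placeholder for a real tokenizer.
text = "one two three four five six seven eight nine ten"
for chunk in chunk_with_overlap(text.split(), chunk_size=4, overlap=2):
    print(chunk["start"], chunk["tokens"])
```

Recording each chunk's start offset is what makes provenance tracing possible later, when a retrieved chunk needs to be mapped back to its place in the original document.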

Practical & Surprising Wins — Gemini 2.5 Pro Real-World Use Cases

Use-case buckets

- Long-document workflows: Legal brief synthesis, research meta-analyses, book summarization.

- Multimodal product intelligence: Video walkthrough → timestamped how-to + code snippet extraction.

- Codebase operations: Migration plans, cross-file refactor generation, unit-test suggestions.

- Knowledge-base QA and support: Ingest entire doc corpora and answer with citations and provenance.

A Real POC You Can Try — Gemini 2.5 Pro Mini Case Study

Goal: Convert a 2-hour product webinar + transcript into: (a) timestamped summary, (b) 12-slide deck, (c) 10-question quiz.

Inputs:

- Transcript with timestamps (tokenized into sections)

- Slide images (frame captures)

- Speaker notes
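Before prompting, it can help to pin down the shape of the three deliverables so the model's output is checkable. The sketch below is one possible typed contract for this POC; every class and field name is hypothetical, and the checks encode only the numbers stated in the goal (2-hour webinar, 12 slides, 10 questions).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SummaryItem:
    start_seconds: float  # offset from the start of the webinar
    text: str


@dataclass
class QuizQuestion:
    question: str
    choices: List[str]
    answer_index: int


@dataclass
class WebinarPackage:
    summary: List[SummaryItem]
    slide_titles: List[str]
    quiz: List[QuizQuestion]


def check_package(pkg: WebinarPackage, duration_seconds: float = 2 * 60 * 60) -> List[str]:
    """Return a list of human-readable problems; an empty list means it passed."""
    problems = []
    times = [item.start_seconds for item in pkg.summary]
    if times != sorted(times):
        problems.append("summary timestamps are not in ascending order")
    if any(t < 0 or t > duration_seconds for t in times):
        problems.append("summary timestamp outside the webinar duration")
    if len(pkg.slide_titles) != 12:
        problems.append(f"expected 12 slides, got {len(pkg.slide_titles)}")
    if len(pkg.quiz) != 10:
        problems.append(f"expected 10 quiz questions, got {len(pkg.quiz)}")
    for number, q in enumerate(pkg.quiz, start=1):
        if not 0 <= q.answer_index < len(q.choices):
            problems.append(f"question {number}: answer_index out of range")
    return problems


draft = WebinarPackage(
    summary=[SummaryItem(0, "Intro"), SummaryItem(600, "Demo")],
    slide_titles=[f"Slide {i}" for i in range(1, 13)],
    quiz=[QuizQuestion(f"Q{i}?", ["a", "b", "c"], 0) for i in range(10)],
)
print(check_package(draft))
```

Returning a list of problems instead of raising on the first one gives a reviewer the full picture of what the model got wrong in a single pass.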

How They Really Compare — Gemini 2.5 Pro vs GPT‑4.5 Showdown

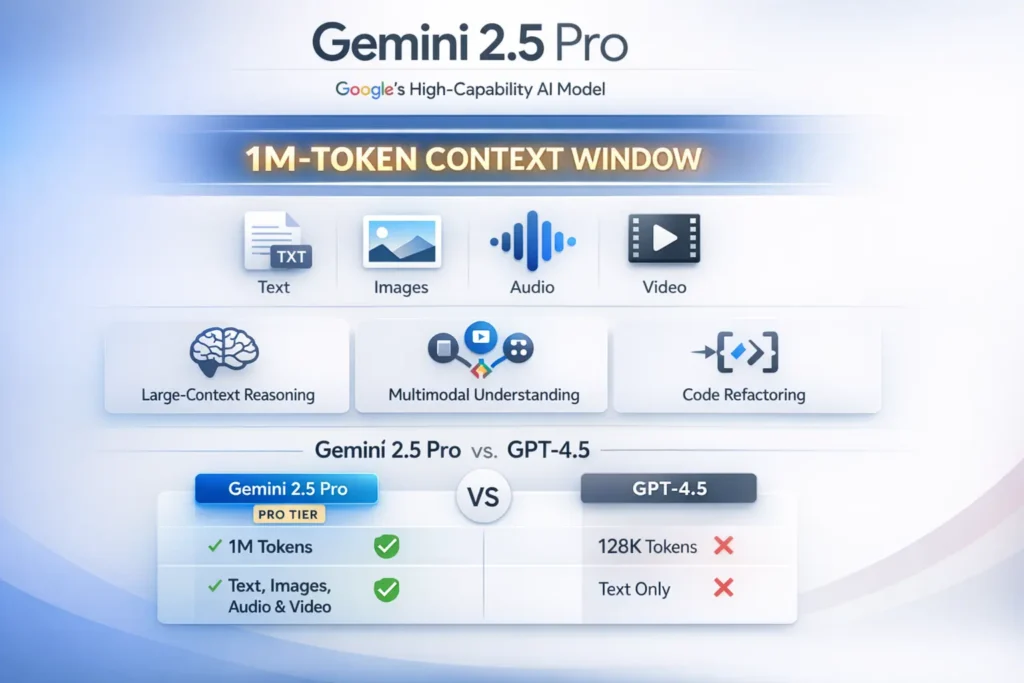

| Dimension | Gemini 2.5 Pro | GPT-4.5 (representative) |
| --- | --- | --- |
| Large-context input | ~1M tokens (advantage Gemini Pro) | Historically smaller windows depending on offering |
| Multimodality | Native text/image/audio/video fusion | Strong, but vendor and offering dependent |
| Code performance | Tuned multi-file code reasoning | Competitive; OpenAI lineage strong on code |
| Enterprise integration | Vertex AI first-class | Azure / OpenAI and other cloud integrations |
| Safety/regulatory | Under public scrutiny; governance required | Also under scrutiny; depends on rollout policies |

Short verdict: Gemini 2.5 Pro provides structural advantages for workflows requiring holistic global context and native multimodal fusion; GPT-4.5 remains a strong alternative depending on cost, SLAs, and cloud integration preferences. Always benchmark on your specific workload.

Pros & Cons

Pros

- Enables extremely long-context tasks (1M tokens).

- Strong multimodal temporal alignment (video/audio/text).

- Tends to perform strongly on code and structured reasoning tasks.

- Tight enterprise integrations via Vertex AI.

Cons

- Regulatory and safety scrutiny — independent evaluation required.

- Cost & latency at the highest capacities; consider Flash variants.

- Model revisions can change behavior — continuous evaluation needed.

FAQs

Q: What are the context window and output limits of Gemini 2.5 Pro?

A: Official configurations support roughly 1,048,576 input tokens and output caps like ~65,535 tokens in some deployments; check your Vertex AI/AI Studio config.

Q: How can I access Gemini 2.5 Pro?

A: Through Google AI Studio, the Gemini app (advanced tiers), and Vertex AI for enterprise deployments. Availability may vary by region/account.

Q: Is Gemini 2.5 Pro safe to use in regulated or sensitive domains?

A: Treat it like any frontier model: add human oversight, red-teaming, data governance, and independent safety evaluations before using it in regulated or sensitive domains.

Q: When should I use Flash or Flash-Lite instead of Pro?

A: Use Flash / Flash-Lite when you need lower-cost, higher-throughput operations and can accept smaller context windows or slightly reduced capability. Good for scaling production workloads.

Final verdict

Gemini 2.5 Pro represents a step-change for engineering teams whose core product problems are dominated by scale of context and multimodal fusion. For NLP systems, the 1M-token window simplifies many retrieval-heavy patterns: you can prototype simpler, more interpretable pipelines by moving some of the retrieval logic into in-model global attention. That said, more power means more exposure: hallucination risk, version drift, and compute costs all grow with scale. My recommendation: run a focused POC on one high-value workflow (long-document summarization, webinar → slides, or cross-file code refactor) using Vertex AI, instrument it thoroughly, and implement human-in-the-loop validation gates. Emphasize schema-enforced outputs and automated validators to reduce downstream brittleness, and publish your evaluation data and metrics (when possible) to support community benchmarks and reproducible decision-making.