Introduction

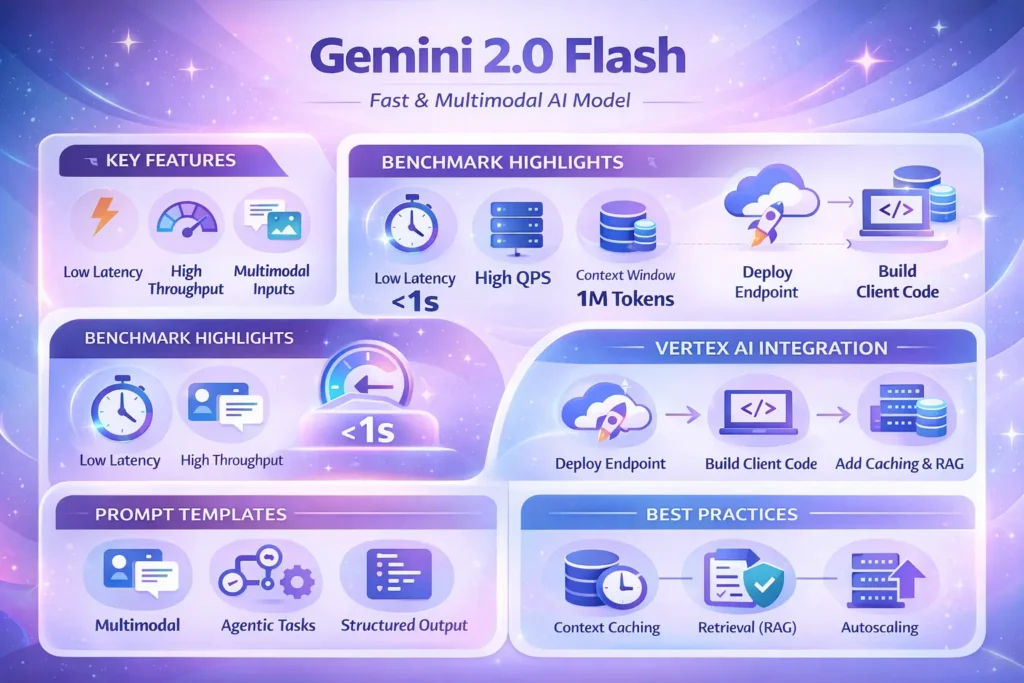

Gemini 2.0 Flash is a high-throughput, low-latency multimodal model in the Gemini 2.0 family, engineered to be both practical for production and friendly for NLP-centric evaluation. From an NLP perspective, Flash is the cost- and latency-optimized tier that preserves robust multimodal understanding: it offers image-aware input handling, structured output options, and tool/function-call integration useful for agents. For researchers and engineers, the key considerations are familiar: tokenization behavior, context-window management, calibration of model confidence, hallucination characteristics, and how the model behaves under distribution shift.

This guide reframes the vendor messaging in operational NLP terms: we translate release notes and marketing into evaluation metrics, testable benchmark methodology, Vertex AI integration patterns, prompt templates tuned for reproducible outputs, and production engineering playbooks that reduce hallucinations and keep costs bounded. Where the technical lifecycle matters (model id, GA dates, context capacity), treat vendor pages as primary sources — pin the model id in CI and verify in Vertex before issuing a production deployment.

Gemini 2.0 Flash Quick Verdict

What it is: A latency- and throughput-optimized Gemini 2.0 variant built for interactive multimodal & agentic workflows.

Common model id: gemini-2.0-flash-001 (verify in Vertex AI model registry before production).

Why it matters (NLP view): Balances response time, multimodal fusion, and cost while providing structured output primitives that simplify downstream parsing and validation.

Primary use-cases: Image-aware chat, interactive assistants, function-calling agents, and high-QPS customer-facing services where token costs matter.

When not to use it: Tasks requiring the absolute top-of-class chain-of-thought depth or the highest synthetic-reasoning benchmarks — choose Pro-tier for those.

What is Gemini 2.0 Flash?

From an NLP and production-ML view, Gemini 2.0 Flash is an inference-optimized variant that explicitly trades some peak compute for reduced latency and improved cost-per-inference, while keeping multimodal and structured-output capabilities. Important facets:

- Model family & objective: Flash shares architecture lineage with Gemini 2.0 family members; weights and training regimes prioritize fast inference kernels and low tail-latency behaviors.

- Tokenization and context-window: Flash supports the family’s long-context architecture, enabling extended context usage (vendor materials mention large windows up to 1M tokens for certain variants). Tokenizer behavior (subword segmentation/byte-pair-encoding) and prompt tokenization choices impact both cost and retrieval effectiveness.

- Multimodality: Natively accepts text and image inputs; multimodal fusion layers produce embeddings combined with text tokens at inference.

- Tool integration: Designed to participate in agentic flows — structured outputs and function call primitives reduce parsing errors in downstream handlers.

Significance: Flash is optimized for productionized conversational pipelines where latency, throughput, and predictable behavior under concurrency are the constraints. It is not marketed as the ultimate model for the deepest chain-of-thought reasoning but is engineered to be robust in real-world retrieval, grounding, and tool-invocation scenarios.

Who should use Gemini 2.0 Flash?

Use Flash when one or more of these hold:

- Latency-sensitive UX: Your product expects sub-second or low-second reply latencies; tail latency (p95/p99) is a primary KPI.

- Throughput constraints: High QPS where per-request cost must be controlled.

- Multimodal needs: Workloads which combine images and text or require lightweight image understanding (e.g., image Q&A, product photo triage).

- Agentic tool use: You rely on function calls or structured JSON outputs that are parsed programmatically.

- Long context, bounded cost: You want to use long-context features but need cost-control patterns (rolling windows, RAG + caching).

Prefer Pro-Tier when:

- You need the highest benchmark scores on reasoning, math, or code synthesis.

- You require stronger chain-of-thought performance and are willing to pay higher inference costs.

Prefer Flash-Lite when:

- Massive text-only traffic requires the lowest per-query cost with acceptable quality trade-offs.

NLP signals to test: perplexity on your domain, calibration (confidence vs correctness), F1/EM on retrieval-grounded tasks, hallucination frequency (false positives per 1k responses), and latency percentiles under production concurrency.

How Flash fits into the Gemini 2.0 family

| Model | Best fit | Latency | Strength | Weakness |
| --- | --- | --- | --- | --- |
| Gemini 2.0 Flash | Interactive multimodal | Low–medium | Fast, multimodal, tool support, large context | Not top for deepest chain-of-thought |
| Gemini 2.0 Pro | Complex reasoning & code | Higher | Best reasoning & code generation | Higher cost & latency |
| Gemini 2.0 Flash-Lite | Ultra-high throughput text | Lowest | Cheapest per text query | Reduced multimodal capability |

Note: latency & throughput depend heavily on endpoint sizing, region, concurrency, and batching. In NLP terms: measure tail-latency under your QPS and input token distributions.

Release Timeline & Lifecycle Notes Gemini 2.0 Flash

Treat vendor release notes and the model registry as the source of truth. Common operational patterns:

- Pin the exact model id in CI/CD.

- Track vendor changelog for “Thinking Mode”, API changes, and deprecations.

- Vendor GA notes in early Feb 2025 (public messaging) are useful context, but confirm the exact model id/version string in Vertex before deployment.

- Backward compatibility: Some features may be opt-in previews — avoid auto-upgrading.

- Deprecation windows: Build migration steps in your IaC (Infrastructure as Code) and release playbooks to rotate pinned models.
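The pinning advice above can be enforced mechanically. The following is a minimal sketch of a CI guard, assuming pinned ids carry a three-digit version suffix as in `gemini-2.0-flash-001`; the function name `is_pinned` and the regular expression are illustrative assumptions, not part of any vendor tooling.

```python
import re

# Assumption: a pinned id ends in a three-digit version suffix,
# as in "gemini-2.0-flash-001". Adjust if the registry uses another scheme.
PINNED_ID = re.compile(r"^gemini-\d+\.\d+-[a-z-]+-\d{3}$")


def is_pinned(model_id: str) -> bool:
    """Return True when the id names an explicit version, not a floating alias."""
    return bool(PINNED_ID.match(model_id))


if __name__ == "__main__":
    # A CI job could fail the build when the configured id is not pinned.
    configured = "gemini-2.0-flash-001"
    if not is_pinned(configured):
        raise SystemExit(f"Model id is not pinned: {configured}")
```

Running a check like this in the deployment pipeline turns "avoid auto-upgrading" into a failing build rather than a convention.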

Key Capabilities Engineers and Product Teams Care About

- Multimodal inputs & outputs: Useful for document understanding, image captioning, visual question answering, and cross-modal retrieval. Evaluate with multimodal benchmarks and your own labeled data.

- Tool/function call support: Return structured JSON or call APIs directly. Use explicit schemas to validate outputs.

- Large context windows: When used, monitor token inflation and downstream retrieval latency. Long-context capability enables multi-document summarization, chaining, and context-aware dialogue state.

- Latency & throughput optimization: Flash is tuned for responsiveness at scale — but endpoint sizing and concurrency controls matter; measure tail latency and p99.

- Calibration & confidence scores: For production pipelines, use confidence/calibration outputs to gate automated actions.

- Security & compliance: Vertex features (CMEK, VPC-SC) help with data residency and regulatory requirements.

Testing Checklist:

- Tokenization mismatch checks (is your tokenizer the same as the vendor’s?)

- Synthetic adversarial prompts to measure hallucination or instruction-following deviations

- Calibration plots (confidence vs accuracy bins)

- Perplexity & token-usage analysis for cost estimates
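For the calibration item in the checklist, one common summary statistic is expected calibration error (ECE), which collapses a confidence-vs-accuracy plot into a single number. The sketch below assumes you already have one confidence score in [0, 1] per response (how you obtain it is outside this guide) and a boolean correctness label from your graders.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: weighted average gap between mean confidence and accuracy per bin."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 is placed in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for items in bins:
        if not items:
            continue
        avg_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        ece += (len(items) / total) * abs(avg_conf - accuracy)
    return ece
```

A value near 0 means stated confidence tracks observed accuracy; larger values suggest the confidence signal should not yet gate automated actions.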

Benchmarks: What Public Data Tells You

Public aggregators present directional signals, but they can be misleading for your domain. For NLP professionals:

Key Evaluative Dimensions:

- Per-token perplexity: a core generalization metric for language modeling.

- Task-specific metrics: ROUGE, BLEU, METEOR for generation tasks; Exact Match (EM) and F1 for QA; BERTScore and embedding similarity for semantic equivalence.

- Human evaluation: Use graded evaluations with real users for fluency, factuality, and usefulness.

- Calibration & hallucination rates: Operationally critical—track hallucinations per 1,000 responses and negative downstream incidents.

- Latency percentiles: p50/p95/p99 measures at target concurrency.
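Exact Match and token-level F1 from the list above are simple enough to compute in-house. This is a minimal sketch in the style of common extractive-QA scoring (lowercasing, stripping punctuation and English articles); the normalization rules are an assumption and should match whatever your labeled data expects.

```python
import re
import string
from collections import Counter


def normalize(text):
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(pred, gold):
    return normalize(pred) == normalize(gold)


def token_f1(pred, gold):
    """Harmonic mean of token precision and recall after normalization."""
    p, g = normalize(pred).split(), normalize(gold).split()
    if not p or not g:
        return float(p == g)  # both empty counts as a match
    overlap = sum((Counter(p) & Counter(g)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(p)
    recall = overlap / len(g)
    return 2 * precision * recall / (precision + recall)
```

EM is strict and penalizes verbose answers, so reporting it alongside F1 gives a fairer picture for generative models.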

How to Benchmark Properly:

- Build a representative prompt suite (50–200 prompts) covering edge cases, adversarial, and nominal usage.

- Run both single-shot and few-shot tests. Score them with human graders and/or labeled datasets using a task-appropriate rubric.

- Run load tests at expected concurrency to measure tail latency and throughput. Observe stability under backpressure.

- Measure cost per useful response (token fees + endpoint runtime).

- Track degradation modes and failure cases; feed them into a feedback loop for prompt or retrieval adjustments.
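To report tail latency from a load test, the percentiles can be computed directly from the recorded samples. The sketch below uses the nearest-rank method; the function names are illustrative, and the samples are assumed to be per-request latencies in milliseconds collected at your target concurrency.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile for pct in (0, 100]."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def latency_report(latencies_ms):
    """Summarize a load-test run as p50/p95/p99."""
    return {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}
```

With small sample counts p99 is dominated by one or two requests, so collect enough traffic per run for the tail figures to be stable.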

Common Traps:

- Over-reliance on aggregated leaderboard scores.

- Not testing with true production input distribution.

- Ignoring multimodal evaluation complexities (image quality variability, OCR noise).

Gemini 2.0 Flash Pricing & Cost-Efficiency

Cost components to account for:

- Per-million-token input/output charges (text tokens).

- Modality-specific fees (images, long-context charge tiers).

- Endpoint node/hour charges on Vertex AI.

Safe Estimator:

Estimated monthly cost =

(Avg_input_tokens × input_rate + Avg_output_tokens × output_rate) × monthly_requests

+ Endpoint_node_hour_costs

+ Retrieval/storage costs

+ Additional grounding/search costs

Here input_rate and output_rate are per-token prices (the published per-million-token rate divided by 1,000,000).
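The estimator can be written as a small function so it can be rerun whenever measured token footprints change. This is a sketch under the assumption that rates are quoted per million tokens, so the per-token price is already folded into the rate; all parameter names are illustrative, and the numbers in the usage example are placeholders rather than published prices.

```python
def estimate_monthly_cost(
    avg_input_tokens,
    avg_output_tokens,
    monthly_requests,
    input_rate_per_million,
    output_rate_per_million,
    endpoint_cost=0.0,
    retrieval_cost=0.0,
    grounding_cost=0.0,
):
    """Token charges for all requests plus fixed monthly infrastructure costs."""
    token_cost_per_request = (
        avg_input_tokens * input_rate_per_million
        + avg_output_tokens * output_rate_per_million
    ) / 1_000_000
    return (
        token_cost_per_request * monthly_requests
        + endpoint_cost
        + retrieval_cost
        + grounding_cost
    )


if __name__ == "__main__":
    # Placeholder rates for illustration only; substitute current vendor pricing.
    print(estimate_monthly_cost(1000, 200, 1_000_000, 0.10, 0.40, endpoint_cost=50.0))
```

Feeding it averages measured from staging traffic, rather than guesses, is what makes the estimate "safe".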

Practical Tips:

- Stage load tests with realistic prompts to measure token footprints and real traffic characteristics.

- Cache repeated context and roll up histories with summarization to reduce token usage.

- Use Flash-Lite for massive text-only traffic; use Flash for multimodal plus speed; use Pro for complex reasoning where accuracy ROI justifies cost.

- Monitor token usage by user cohort to optimize caching/summarization policies.

Cost Levers:

- Truncate history intelligently using embeddings to prioritize semantically relevant context.

- Precompute RAG retrieval vectors and cache top-K passages.

- Use temperature-zero and schema constraints to reduce output token lengths when deterministic outputs are desired.
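The first lever, truncating history by semantic relevance, can be sketched as follows. The code assumes each past turn already has an embedding vector and a token count (both produced elsewhere, for example by your embedding service and tokenizer); `select_context` and the tuple layout are hypothetical names for illustration.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def select_context(query_vec, turns, token_budget):
    """Keep the most relevant turns that fit the budget, in original order.

    turns: list of (text, embedding, token_count) tuples.
    """
    ranked = sorted(
        range(len(turns)),
        key=lambda i: cosine(query_vec, turns[i][1]),
        reverse=True,
    )
    chosen, used = [], 0
    for i in ranked:
        if used + turns[i][2] <= token_budget:
            chosen.append(i)
            used += turns[i][2]
    # Restore chronological order so the dialogue still reads naturally.
    return [turns[i][0] for i in sorted(chosen)]
```

A greedy selection like this is not optimal packing, but it is cheap and keeps the prompt within a predictable token budget.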

Integrate Gemini 2.0 Flash with Vertex AI

Prerequisites

- GCP project with Vertex AI + Generative AI APIs enabled.

- Service account with Vertex AI permissions.

- Billing & budget alerts configured.

- CI/CD pipeline with pinned model id.

High-Level Flow

- Choose model id: gemini-2.0-flash-001 (verify).

- Create & deploy a Vertex AI endpoint (size nodes by expected concurrency).

- Build client code: REST/gRPC/SDK for inference.

- Add caching, retrieval augmentation (RAG), and safety filters.

- Test under representative load and evaluate latency percentiles.

Validation & Schema Enforcement

- Validate every model response programmatically against a single, explicit schema.

- If the model replies with invalid JSON or missing keys, reject the response and re-prompt with a stricter schema, or fall back to human-in-the-loop review.
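The reject-and-re-prompt loop can be sketched with the standard library alone. Here `call_model` is a hypothetical callable that takes a prompt string and returns the raw model text (wrap whichever client you use), and the required keys follow the status/payload envelope recommended later in this guide; both are assumptions for illustration.

```python
import json

# Assumed envelope: a string "status" and an object "payload".
REQUIRED_KEYS = {"status": str, "payload": dict}


def validate_reply(raw):
    """Return the parsed dict, or None if it is not valid JSON or lacks required keys."""
    try:
        data = json.loads(raw)
    except (json.JSONDecodeError, TypeError):
        return None
    if not isinstance(data, dict):
        return None
    for key, expected_type in REQUIRED_KEYS.items():
        if not isinstance(data.get(key), expected_type):
            return None
    return data


def generate_validated(call_model, prompt, max_attempts=2):
    """Try the prompt, then a stricter variant, then hand off to a human."""
    for attempt in range(max_attempts):
        text = prompt
        if attempt > 0:
            text += "\nReturn ONLY a JSON object with keys: status, payload."
        parsed = validate_reply(call_model(text))
        if parsed is not None:
            return parsed
    # Fallback marker for a human-in-the-loop queue.
    return {"status": "NEEDS_HUMAN_REVIEW", "payload": {}}
```

Keeping the attempt count small bounds both latency and token spend when the model keeps returning malformed output.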

Engineering Patterns & Best Practices

Context caching

- Keep canonical histories server-side; send summarized rolling windows to control token costs.

- Use embeddings + clustering to decide which context slices to include.

Retrieval-Augmented Generation

- Retrieve top-K documents, include passages in the prompt for grounding.

- Use passage-level citations to tag model outputs and enable traceability.
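A grounded prompt with passage-level citations might be assembled as below. This is a sketch only: the passage tuple layout, the bracketed-id citation convention, and the instruction wording are assumptions, and retrieval itself (producing the scored passages) is left to your own stack.

```python
import re


def build_grounded_prompt(question, passages, top_k=3):
    """Build a prompt from the top_k passages; passages are (doc_id, text, score)."""
    top = sorted(passages, key=lambda p: p[2], reverse=True)[:top_k]
    lines = [
        "Answer using only the passages below. "
        "Cite passage ids in square brackets after each claim.",
        "",
    ]
    for doc_id, text, _score in top:
        lines.append(f"[{doc_id}] {text}")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


def cited_ids(answer):
    """Extract bracketed passage ids from a model answer for traceability checks."""
    return set(re.findall(r"\[([^\[\]]+)\]", answer))
```

Comparing `cited_ids` against the ids actually supplied in the prompt gives a cheap signal for answers that cite nothing or cite passages that were never provided.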

Tool Calls & Structured Outputs

- Prefer JSON schema outputs and require the model to return a consistent envelope such as {"status": "OK", "payload": {…}}.

- Use structured function calls where possible to reduce post-parsing errors.

Pre & Post Validation

- Pre-validate prompts to avoid data leaks or PII exposure.

- Post-validate model outputs against business rules and knowledge bases; flag uncertain outputs for human review.

Autoscaling & Rate Limiting

- Load-test and size endpoints for peak concurrency.

- Prefer horizontal scaling; ensure idempotency and retries are implemented.
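Retries are only safe when repeated attempts can be deduplicated. The sketch below shows exponential backoff with jitter and a single request id reused across attempts; `TransientError` and the `send(payload, request_id)` callable are hypothetical stand-ins for your client's retryable failures and transport function.

```python
import random
import time
import uuid


class TransientError(Exception):
    """Hypothetical marker for retryable failures such as timeouts or throttling."""


def call_with_retries(send, payload, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call send(payload, request_id), retrying transient failures with backoff."""
    # The same id is sent on every attempt so the receiver can deduplicate.
    request_id = str(uuid.uuid4())
    for attempt in range(max_attempts):
        try:
            return send(payload, request_id)
        except TransientError:
            if attempt == max_attempts - 1:
                raise
            delay = base_delay * (2 ** attempt)
            # Jitter spreads out retries from many clients hitting the same limit.
            sleep(delay + random.uniform(0, delay))
```

The `sleep` parameter is injectable so the logic can be unit-tested without real waiting.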

Telemetry & Observability

- Instrument latency (p50/p95/p99), failure rates, hallucination incidents, and token usage per request.

- Add request IDs and distributed tracing for root-cause analysis.
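Per-request instrumentation can be wrapped in a context manager so every call records latency, token counts, and errors under one request id. This is a minimal sketch: `RECORDS` stands in for whatever metrics sink you use, and the field names are illustrative assumptions.

```python
import time
import uuid
from contextlib import contextmanager

RECORDS = []  # stand-in for a real metrics or logging sink


@contextmanager
def traced_request(model_id):
    """Record latency, token usage, and error type for one inference call."""
    record = {
        "request_id": str(uuid.uuid4()),
        "model_id": model_id,
        "input_tokens": 0,
        "output_tokens": 0,
        "error": None,
    }
    start = time.perf_counter()
    try:
        yield record
    except Exception as exc:
        record["error"] = type(exc).__name__
        raise
    finally:
        record["latency_ms"] = (time.perf_counter() - start) * 1000
        RECORDS.append(record)
```

Inside the `with` block the caller fills in `input_tokens` and `output_tokens` from the response metadata; percentiles and failure rates can then be derived from the collected records.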

Security & Governance

- Use CMEK, VPC Service Controls, and IAM least-privilege patterns.

- Maintain audit logs and enforce key rotation.

Production checklist

- Pin the exact model id & release date in CI/CD.

- Run a 7–14 day staging test with representative prompts.

- Implement RAG + caching to control tokens and reduce hallucinations.

- Configure CMEK, VPC-SC, and IAM controls for regulated data.

- Add telemetry: quality score, hallucination rate, latency, and cost.

- Add fallback logic and human-in-the-loop review for high-risk decisions.

- Prepare clear runbooks for model rollback, canary deploys, and hotfixes.

- Define a retraining/recalibration cadence and a drift detection pipeline.

Short Comparison Table

| Model | Best fit | Typical latency | Strength | Weakness |
| --- | --- | --- | --- | --- |
| Gemini 2.0 Flash | Interactive multimodal | Low–medium | Fast, tool support, long context | Not best for deep chain-of-thought |
| Gemini 2.0 Pro | Complex reasoning | Higher | Best reasoning & coding | Higher cost |
| Gemini 2.0 Flash-Lite | High-throughput text | Lowest | Cheap per text query | Lower multimodal power |
| Typical competitor (e.g., GPT-4o variants) | Varied | Medium–high | Strong reasoning on some tasks | Pricing and tooling differences |

Risks, Limitations & Responsible Use

- Hallucination risk: Use grounding & RAG to reduce false facts; measure rates and create protective fallbacks.

- Data residency & compliance: Use Vertex features (CMEK & VPC-SC) for regulated data.

- Lifecycle churn: Pin versions and include deprecation handling in release playbooks.

- Token cost & explosion: Long contexts increase cost; summarized rolling windows help.

- Bias & fairness: Evaluate the model on fairness datasets and measure disparate impact across user cohorts.

Pros & Cons Gemini 2.0 Flash

Pros

- Low latency and high throughput for interactive apps.

- Native multimodal and tool/function-call support.

- Very large context windows for long workflows (when applicable).

Cons

- Not always a top performer for the deepest reasoning tasks.

- Pricing & runtime complexity require careful TCO measurement.

- Lifecycle and model updates require active CI checks.

FAQs Gemini 2.0 Flash

Q: Is Gemini 2.0 Flash better than GPT-4o?

A: “Better” depends on the task. Flash often gives superior throughput and strong multimodal features; GPT-4o variants may be stronger at certain narrow reasoning tasks. Always benchmark on your prompts.

Q: What is the model id for Gemini 2.0 Flash?

A: Vendor listings commonly show gemini-2.0-flash-001 as the Flash GA id. Confirm it in the Vertex model registry before you deploy.

Q: How large is the context window?

A: Certain Gemini 2.0 variants are announced with up to 1M token context windows. Use long context carefully and measure token costs.

Q: What is Thinking Mode?

A: Thinking Mode is a test-time option in the Gemini changelog that lets the model spend extra compute on deeper reasoning steps. It is available as preview/opt-in in some releases.

Q: How do I reduce hallucinations?

A: Use RAG, include source passages in prompts, validate outputs against your knowledge base, and prefer schema-based outputs.

Conclusion Gemini 2.0 Flash

Gemini 2.0 Flash is a practical option for production systems that require a calibrated combination of multimodality, speed, and cost efficiency. As an NLP engineer or product owner, treat Flash as an engineering tradeoff: it optimizes latency and throughput without abandoning multimodal and tool call capabilities. Your operational next steps should be clear: (1) run a small reproducible benchmark suite on representative prompts to validate quality, latency, and hallucination frequency; (2) estimate TCO conservatively using measured token distribution and endpoint node usage; (3) implement RAG + schema outputs and post-validation to minimize hallucinations; (4) pin model versions in your CI and prepare rollback procedures.