Introduction

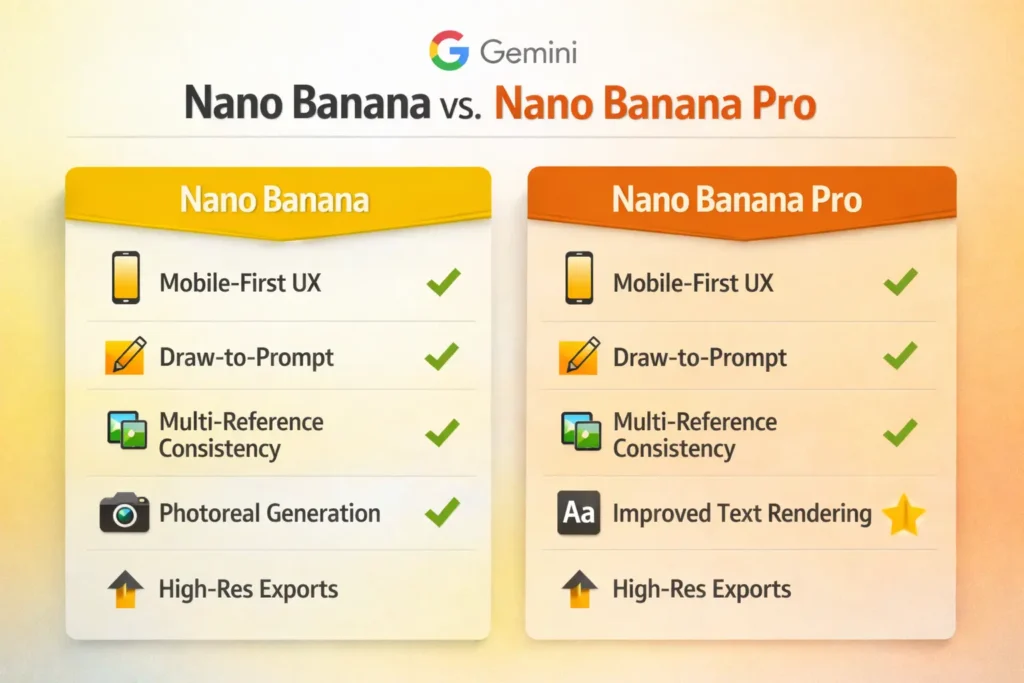

Discover Nano Banana, Google’s mobile-first AI for photoreal images. From collectible figurines to studio-ready product shots, create fast, consistent edits that engage audiences and drive sales, all with simple, actionable workflows.

Nano Banana (the colloquial name for Gemini 2.5 Flash Image) is a multimodal vision model family optimized for rapid, high-quality image editing and generation, with a mobile-first experience. Framed in natural language processing (NLP) terms, Nano Banana can be thought of as a conditional sequence transduction model: the input sequence combines tokenized visual patches with text tokens, a localized attention mask acts as a positional conditioning signal (draw-to-prompt), and multiple reference inputs are aggregated into reference-conditioned context vectors that preserve identity across outputs. The “Pro” tier behaves like a higher-capacity decoder with improved layout-aware decoding (better text rendering), higher-resolution output heads, and stronger provenance signals (C2PA / SynthID) for attributing images to the model.

This guide is publisher-ready and written with both practitioners and content creators in mind. It includes plain-language explanations mapped to NLP concepts (embeddings, attention, conditioning), practical step-by-step generation and editing pipelines, a pack of 30+ copyable prompts, a competitor comparison matrix, monetization strategies, ethical and legal checkpoints, troubleshooting heuristics, and a short FAQ. Wherever helpful, the guide keeps prompts and workflows in copyable form so readers can run them in Gemini (or analogous systems) and get comparable results.

Nano Banana — What Makes Google’s AI Go Viral in 2026?

In NLP terms, Nano Banana is a multimodal conditional generative model specialized for image outputs. Instead of handling only text token sequences, it ingests pixel-derived patch tokens, auxiliary text tokens, and optional reference encodings. Conceptually, the system behaves like a sequence-to-sequence transformer in which:

- Encoder stage: Reference images and prompt text are transformed into embeddings (continuous latent vectors). Multiple references are pooled into a multi-reference context embedding that informs the decoder.

- Conditioning masks: The draw-to-prompt mask is an explicit alignment signal that restricts the model’s output distribution to modifications in selected tokens — analogous to mask-filling tasks in language models (e.g., fill-in-the-blank).

- Decoder stage: A generative visual decoder synthesizes pixels or latent features into final images, with sampling controls (guidance scale / temperature-like hyperparameters) to balance fidelity vs. creativity.

- Post-processing: Image-level layout modules and text-rendering decoders improve the readability of textual elements in outputs (a feature stepped up in Pro).
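The “temperature-like” control mentioned in the decoder stage can be illustrated with temperature-scaled softmax sampling, the same mechanism text LLMs use. The sketch below assumes the decoder chooses among discrete candidate tokens scored by `logits`; Nano Banana’s actual sampling controls are not public, and `softmax_with_temperature` and `sample_token` are hypothetical names used only for illustration.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Convert raw scores into probabilities; a lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(logits, temperature, rng):
    """Draw one candidate index according to the temperature-scaled probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.1]  # hypothetical scores for three candidate visual tokens
for t in (0.5, 1.0, 2.0):
    print(t, [round(p, 3) for p in softmax_with_temperature(logits, t)])

rng = random.Random(42)  # fixed seed makes the draw repeatable
print(sample_token(logits, 0.5, rng))
```

Lower temperatures concentrate probability on the top-scoring candidate (more predictable output), while higher temperatures flatten the distribution (more variety).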

Key user-facing capabilities map onto familiar NLP constructs: “prompt engineering” becomes conditioning-sequence engineering; “multi-reference consistency” is contextual embedding alignment across multiple conditioning sequences; and “draw-to-prompt” is localized conditional token masking paired with a short instruction sequence.

Nano Banana — Why Creators Are Obsessed With Its Viral Magic

Three core ingredients created the viral effect, each expressible in NLP terms:

- Novel conditioning interface (draw-to-prompt) — This UX works like a simple, intuitive prompt template combined with a mask token: users point to the tokens (pixels) they want changed and supply a compact instruction sequence. Low-friction, meaningful edits drive high user adoption.

- High sample quality (perceptual fidelity) — The model’s generated samples are judged highly on human perceptual quality, much as an LLM is judged by how fluent and coherent its paragraphs are. High perceived quality reduces post-editing friction and increases shareability.

- Distribution & integration (platform amplification) — Being integrated into Gemini, Search, Lens, and creative tools lowered access cost and increased trial frequency, analogous to an LLM being bundled into widely used productivity apps.

Together, these qualities yield a network effect: shareable stylings (the figurine trend) + a large installed base = rapid viral spread.

Nano Banana — What Makes This AI a Must-Have for Creators

- Photoreal generation & edits — High-fidelity decoding with texture- and lighting-aware rendering (analogous to an LLM producing coherent, contextually appropriate long-form text).

- Draw-to-prompt editing — Localized mask conditioning akin to token-level inpainting in text models.

- Multi-reference consistency — Cross-example embedding alignment that maintains identity features across sampled outputs.

- Improved text rendering (Pro) — Layout-aware decoding and a specialized module for glyph fidelity; think of a language model with a dedicated typography head to ensure phrase-level accuracy.

- Device/resolution options — Output heads configured for different sampling/upsampling paths (mobile-friendly downsampled decodes vs. Pro high-res decoders).

- Pro integrations — APIs and plugin connectors that let enterprise workflows call the model as a service for large batches.

Nano Banana — How This AI Actually Creates Viral Edits

Model family and parameterization

Nano Banana sits in the Gemini image family: an encoder-decoder or decoder-only transformer backbone adapted for vision tokens. Tokenization maps image patches and text tokens into a shared embedding space. Pro variants increase decoder capacity and add specialized heads (text-layout head, high-res upsampler).
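Patch tokenization can be sketched in the style of Vision Transformers: the image is cut into non-overlapping tiles, and each tile is flattened into one token. This is an illustrative assumption, since Nano Banana’s patch size and tokenizer are not public; a real model would also project each flattened patch through a learned layer into the shared embedding space, which is omitted here. `patchify` is a hypothetical helper.

```python
from typing import List

Image = List[List[int]]  # grayscale image as rows of pixel values


def patchify(image: Image, patch: int) -> List[List[int]]:
    """Split an H x W image into non-overlapping patch x patch tiles, each flattened into one token."""
    h, w = len(image), len(image[0])
    if h % patch or w % patch:
        raise ValueError("image dimensions must be divisible by the patch size")
    tokens = []
    for top in range(0, h, patch):        # walk patch rows
        for left in range(0, w, patch):   # walk patch columns (raster order)
            tile = [image[r][c]
                    for r in range(top, top + patch)
                    for c in range(left, left + patch)]
            tokens.append(tile)
    return tokens


img = [[r * 4 + c for c in range(4)] for r in range(4)]  # 4x4 toy image, values 0..15
tokens = patchify(img, 2)
print(len(tokens))  # 4 tokens: (4/2) * (4/2)
print(tokens[0])    # top-left patch: [0, 1, 4, 5]
```

The token count grows with resolution: with hypothetical 16-pixel patches, a 1024×1024 image would yield (1024/16)² = 4,096 patch tokens.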

Reference blending = embedding fusion

Multiple reference images are encoded into a set of embeddings, which are fused (concatenation + cross-attention pooling or learned aggregator). This fused context is appended to the decoder’s context window so that generated outputs preserve identity cues.
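One of the fusion options named above, cross-attention pooling, can be sketched as single-query scaled dot-product attention over the reference embeddings. This is a minimal sketch, not Google’s aggregator: the learned query/key/value projections are omitted (the reference vectors serve as both keys and values), and the embeddings and query are made-up numbers.

```python
import math
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def attention_pool(query: Vector, refs: List[Vector]) -> Vector:
    """Fuse several reference embeddings into one context vector using softmax attention weights."""
    dim = len(query)
    scores = [dot(query, r) / math.sqrt(dim) for r in refs]  # scaled dot-product scores
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    # Weighted sum of the reference vectors (they double as keys and values here).
    return [sum(w * r[i] for w, r in zip(weights, refs)) for i in range(dim)]


refs = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]  # hypothetical embeddings of three reference photos
query = [1.0, 0.0]                            # hypothetical decoder query
print([round(x, 3) for x in attention_pool(query, refs)])
```

References that align with the query receive larger weights, so the fused vector leans toward the identity cues they share.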

Draw-to-prompt UI = mask + instruction tokens

When a user draws, the application converts that region into a binary mask token sequence aligned to patch tokens. The typed instruction becomes a short token sequence that conditions decoder attention to alter only the masked tokens and leave the rest unchanged, which is conceptually similar to masked language modeling (MLM) carried over to the visual domain.
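The mask-conversion step can be sketched as follows, assuming a patch counts as “editable” when any painted pixel falls inside it. The sketch enforces “leave the rest unchanged” with a hard composite (original patch outside the mask, generated patch inside), a simplification of attention-level masking; a production system would likely also blend mask edges. Integers stand in for patch contents, and both function names are hypothetical.

```python
from typing import List

Mask = List[List[bool]]


def pixel_mask_to_patch_mask(mask: Mask, patch: int) -> List[bool]:
    """Mark a patch token as editable if any painted pixel falls inside it (raster order)."""
    h, w = len(mask), len(mask[0])
    flags = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            flags.append(any(mask[r][c]
                             for r in range(top, min(top + patch, h))
                             for c in range(left, min(left + patch, w))))
    return flags


def composite(original: List[int], generated: List[int], patch_mask: List[bool]) -> List[int]:
    """Keep original tokens outside the mask; take generated tokens only where the mask is set."""
    return [g if m else o for o, g, m in zip(original, generated, patch_mask)]


# 4x4 drawing: the user painted only the top-right corner pixel.
drawn = [[False] * 4 for _ in range(4)]
drawn[0][3] = True
flags = pixel_mask_to_patch_mask(drawn, 2)
print(flags)                                                  # [False, True, False, False]
print(composite([10, 11, 12, 13], [90, 91, 92, 93], flags))  # [10, 91, 12, 13]
```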

Guidance & sampling

Sampling uses classifier-free guidance analogues: the model is conditioned on the instruction and optionally on the negative prompt; the guidance scale steers between unconditional samples (more diverse) and strongly-conditioned samples (more faithful).
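The guidance arithmetic can be sketched with the standard classifier-free guidance formula from diffusion models, `guided = uncond + scale * (cond - uncond)`. Here `uncond` and `cond` are hypothetical model predictions without and with the instruction; when a negative prompt is used, its prediction commonly takes the place of `uncond`. This shows the general technique, not Nano Banana’s internals, and `guided_prediction` is a hypothetical name.

```python
from typing import List

Vector = List[float]


def guided_prediction(uncond: Vector, cond: Vector, scale: float) -> Vector:
    """Classifier-free guidance: move from the unconditional prediction toward (and past) the conditional one."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


uncond = [0.0, 0.0]  # hypothetical prediction with no instruction (or with the negative prompt)
cond = [1.0, 0.5]    # hypothetical prediction conditioned on the instruction
for s in (0.0, 1.0, 3.0):
    print(s, guided_prediction(uncond, cond, s))
```

A scale of 0 returns the unconditional prediction, 1 returns the conditional one, and values above 1 extrapolate past it, trading diversity for prompt adherence.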

Provenance & safety layers

Google applies moderation classifiers and provenance signals: SynthID is an invisible watermark embedded in the image pixels, and some outputs also carry C2PA Content Credentials, a signed metadata record attached to the file. This is analogous to adding provenance tokens or signed attestations to generated text.
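A publisher can run a quick presence check for C2PA metadata before publishing. In JPEG files, C2PA manifests are carried in APP11 (`0xFFEB`) segments as JUMBF boxes labeled `c2pa`; the sketch below only scans for such a segment. It does not validate signatures or manifest contents, it cannot detect SynthID (an invisible watermark that requires Google’s own detector), and `has_c2pa_segment` is a hypothetical helper, so official C2PA tooling should be used for real verification.

```python
def has_c2pa_segment(data: bytes) -> bool:
    """Heuristically detect a C2PA manifest in a JPEG by scanning APP11 (0xFFEB) segments for 'c2pa'.

    Presence check only: signatures are NOT verified, and SynthID watermarks are invisible to this scan.
    """
    if data[:2] != b"\xff\xd8":          # every JPEG starts with the SOI marker
        return False
    i = 2
    while i + 4 <= len(data):
        if data[i] != 0xFF:              # lost sync with the marker structure; give up
            return False
        marker = data[i + 1]
        if marker == 0xFF:               # fill byte before a marker; skip it
            i += 1
            continue
        if marker in (0xD9, 0xDA):       # EOI or start-of-scan: header segments are over
            return False
        length = int.from_bytes(data[i + 2:i + 4], "big")  # length includes its own 2 bytes
        payload = data[i + 4:i + 2 + length]
        if marker == 0xEB and b"c2pa" in payload:
            return True
        i += 2 + length
    return False


# Synthetic example: SOI + one APP11 segment whose payload mentions 'c2pa' + EOI.
payload = b"JP\x00\x01jumb-c2pa"
fake_jpeg = b"\xff\xd8\xff\xeb" + (len(payload) + 2).to_bytes(2, "big") + payload + b"\xff\xd9"
print(has_c2pa_segment(fake_jpeg))
```

For a real file, pass the bytes from `open(path, "rb").read()`.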

Nano Banana — How to Create Stunning Edits in Minutes

Below are production-ready pipelines framed as NLP-style sequences. These steps assume usage through Gemini (mobile/web) or integrations (Photoshop Beta, Google AI Studio).

Nano Banana — Quick Start: Install, Access & Create Fast

- Install the Gemini app (iOS/Android) or open Gemini on the web.

- Open Image → Model Selector → choose Nano Banana or Nano Banana Pro (Pro may require subscription).

- Upload reference images or start from a blank canvas.

- If editing, use the draw mask to highlight the target patch tokens; then type a short instruction.

- Adjust guidance scale/creativity sliders; sample variations; export chosen output.

Use Nano Banana — Transform Selfies Into Stunning 3D Collectibles

Inputs: High-res head-and-shoulders photo (single reference).

Conditioning: Mask around head/torso if you want localized sculpting.

Prompt (copy):

“Create a 1/7 scale photoreal collectible figurine of the subject in the photo. Glossy vinyl texture, studio lighting, neutral white backdrop with subtle vignette, posing on a round acrylic base. Add soft rim light and realistic cast shadow.”

Why this works: prompt tokens include scale, material, and lighting — clear conditioning signals that map to decoder style priors.

Nano Banana — Replace Product Photos Fast for E-Commerce

Inputs: single product photo or product reference images.

Prompt (copy):

“Generate a clean white-background product shot of the uploaded product. Natural shadow, true-to-color, consistent scale, allow copy space for SKU.”

Export: High-res PNG (sRGB). For print, convert and color-check in a design tool.

Localized edit with draw-to-prompt

Inputs: image to edit. Draw a mask around the jacket.

Prompt (copy):

“Replace jacket with a tailored navy blazer, add subtle pinstripe, keep lighting consistent, no background change.”

Note: Short, targeted conditioning sequences are more stable than long, compound prompts.

Multi-reference identity consistency

Inputs: 3–5 reference headshots.

Prompt (copy):

“Use these references to generate five marketing headshots with consistent facial features, three-quarter lighting, neutral background, and two sizes: hero and thumbnail.”

Export: pack for thumbnails and hero images. More references = tighter identity alignment.

Nano Banana — 30+ Copyable Prompts Ready to Use

Below are prompt tokens you can paste into Gemini. Keep them largely intact — they’re designed to act as short conditioning sequences that the model understands.

Starter — Figurine / Avatar (1–10)

- “Turn the uploaded photo into a glossy collectible 3D figurine, 1/7 scale, studio lighting, shallow depth of field, neutral background, photorealistic.”

- “Create a stylized vinyl figurine of this subject with bright color accents and matte finish, on a clear base, with soft rim light.”

- “Make a bust figurine portrait on a marble pedestal, cinematic warm key light, 85mm lens feel.”

- “Generate a mini-figure toy display with packaging to the right — include box art and logo area.”

- “Produce a collectible diorama scene: subject as explorer in a miniature jungle with realistic moss textures.”

(…add pose and finish variations to reach 10)

Advanced — Composite & Storytelling (19–30+)

19. “Blend the three reference images into a coherent scene, maintaining subject scale, realistic shadows, cinematic lighting, 35mm lens feel.”

20. “Generate a poster mockup with the exact headline text: ‘SUMMER SALE — 50% OFF’ and readable body copy at print resolution.” (Pro recommended.)

21. “Turn multiple headshots into a hero team photo with consistent lighting and natural overlaps; preserve facial identity.”

(…add more prompts for props, backgrounds, multilingual text rendering)

Pro tip: For precise text on labels, include a clause: “render text exactly as written: [INSERT TEXT]” and use Nano Banana Pro.
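The Pro tip and the component-style prompts above (subject, material, lighting, backdrop) can be assembled programmatically, which keeps catalog prompts consistent. `build_prompt` is a hypothetical helper, not part of any Gemini API; it only produces a string to paste into Gemini or send through whatever integration you use. Wrapping the target text in quotes is an added assumption intended to make its boundaries unambiguous.

```python
from typing import Optional


def build_prompt(subject: str, material: str, lighting: str, backdrop: str,
                 exact_text: Optional[str] = None) -> str:
    """Assemble a short conditioning sequence from explicit components, optionally pinning label text."""
    parts = [subject, material, lighting, backdrop]
    prompt = ", ".join(p.strip() for p in parts if p.strip()) + "."
    if exact_text:
        # Clause from the Pro tip; the quotes mark exactly which characters must be rendered.
        prompt += f' Render text exactly as written: "{exact_text}"'
    return prompt


print(build_prompt(
    "1/7 scale photoreal collectible figurine of the subject in the photo",
    "glossy vinyl texture",
    "studio lighting with soft rim light",
    "neutral white backdrop",
    exact_text="SUMMER SALE",
))
```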

Nano Banana Pro — The Technical Upgrades Behind Studio-Ready Edits

Higher capacity decoder — Pro increases model capacity and includes specialized heads to better model glyphs and layout. Where the base model produces acceptable text, Pro is tuned to reduce character garbling by adding explicit layout supervision and higher-resolution decoding passes.

Higher-resolution outputs — Output heads may implement hierarchical decoding: coarse latent decode → intermediate upsampling → fine-grain super-resolution head.
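The coarse-to-fine idea can be sketched with nearest-neighbour upsampling standing in for the learned stages. This only illustrates how resolution compounds across stages (factors of 2 and 4 give 8× overall, so a hypothetical 128×128 latent would reach 1024×1024); a real super-resolution head synthesizes new detail rather than repeating values, and both helpers are hypothetical.

```python
from typing import List

Grid = List[List[float]]


def upsample_nearest(grid: Grid, factor: int) -> Grid:
    """Enlarge a grid by repeating each value factor x factor times (stand-in for a learned upsampler)."""
    return [[v for v in row for _ in range(factor)]
            for row in grid for _ in range(factor)]


def hierarchical_decode(coarse: Grid, factors: List[int]) -> Grid:
    """Coarse latent -> successive upsampling stages, mirroring a coarse-to-fine decoder."""
    out = coarse
    for f in factors:
        out = upsample_nearest(out, f)
        print(f"stage x{f}: {len(out)}x{len(out[0])}")
    return out


coarse = [[0.1, 0.9], [0.5, 0.3]]             # hypothetical 2x2 coarse latent
final = hierarchical_decode(coarse, [2, 4])  # 2x2 -> 4x4 -> 16x16
```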

Context-aware detail — Pro adds more robust scene priors and may rely on larger reference-context windows, making plausible additions that align with scene semantics (but sometimes introduces hallucinated details — treat these as generated priors that require human verification).

Provenance & metadata — Pro outputs may be more likely to include C2PA/SynthID metadata markers for provenance compliance, enabling traceability when publishing.

Nano Banana — How It Stacks Up Against Other AI Image Tools

| Feature / Tool | Nano Banana (Google) | Midjourney | OpenAI Images / ChatGPT | Adobe Firefly |
| --- | --- | --- | --- | --- |
| Strength | Photoreal edits, multi-ref consistency, mobile UX | Artistic stylization, community styles | Conversational generation, LLM integration | Design workflow & enterprise features |
| Draw-to-prompt | Yes (excellent) | Limited | Experimental | Limited |
| Text rendering | Good (Pro: very good) | Weak | Improving | Good |
| Mobile UX | Yes (Gemini app) | Limited | Increasing | Mostly desktop |
| Best use-case | E-commerce, avatar/figurine trend | Concept art | Conversational creative flows | Brand systems, enterprise design |

Short take: Nano Banana excels at mobile-first, photoreal editing, and multi-image identity consistency. Midjourney dominates stylized art; Firefly is tailored to design/brand systems; OpenAI focuses on conversational creative workflows.

Pros & Cons

Pros

- Low-latency, mobile-first editing workflows.

- Strong subject consistency across edits (multi-reference embedding).

- Intuitive localized conditioning (draw-to-prompt).

- Pro tier improves textual glyph fidelity and output resolution.

Cons

- Moderation and provenance gaps remain — outputs can mislead if provenance metadata is ignored.

- Pro features may be behind paywalls or quotas.

- For print-quality CMYK workflows, manual post-processing is often required.

Nano Banana — Pricing, Availability & What You Get

Pricing changes frequently. Use a small table with links to official Google pages in a live article and update monthly.

| Tier | Typical access | Best for |
| --- | --- | --- |
| Free / Trial | Basic Nano Banana features, low-res exports, and daily limits | Casual users/experimentation |
| Gemini Pro / Paid tier | Nano Banana Pro access, higher resolution, more edits | Creators, freelancers |
| Enterprise / API | Volume usage, integrations, priority | Agencies, e-commerce platforms |

Publisher tip: Link to Google’s official pages for the latest pricing and subscription details.

Nano Banana — Real-World Workflows & Monetization Playbook

Nano Banana creates numerous monetizable opportunities. Treat each offering as a conditioned service pipeline: reference collection → prompt engineering → batch sampling (with consistent seeds) → QA and packaging.
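The pipeline’s “consistent seeds” step can be sketched by deriving each job’s seed from stable inputs. The sketch assumes a hypothetical catalog of SKUs and file layout (`refs/<sku>/front.jpg`), and `placeholder_sample` is a stand-in for the actual model call; check whether the endpoint you use accepts a seed at all before relying on repeatability.

```python
import hashlib
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Job:
    sku: str
    reference_paths: List[str]  # hypothetical reference images collected for this SKU
    style_prompt: str           # one shared style prompt keeps the catalog consistent
    seed: int                   # fixed per SKU so reruns reproduce the same sample


def stable_seed(sku: str, campaign: str) -> int:
    """Derive a repeatable 32-bit seed: the same SKU and campaign always map to the same value."""
    digest = hashlib.sha256(f"{campaign}:{sku}".encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big")


def plan_batch(skus: List[str], campaign: str, style_prompt: str) -> List[Job]:
    """Reference collection + prompt engineering: one job per SKU with its own stable seed."""
    return [Job(sku, [f"refs/{sku}/front.jpg"], style_prompt, stable_seed(sku, campaign))
            for sku in skus]


def placeholder_sample(job: Job, n_variations: int = 3) -> List[float]:
    """Stand-in for the model call; a seeded RNG makes the 'variations' repeatable."""
    rng = random.Random(job.seed)
    return [round(rng.random(), 4) for _ in range(n_variations)]


jobs = plan_batch(["SKU-001", "SKU-002"], "spring-catalog",
                  "Clean white-background product shot, natural shadow, true-to-color.")
for job in jobs:
    # Batch sampling; QA and packaging would follow as a human review step.
    print(job.sku, job.seed, placeholder_sample(job))
```

Seeds come from SHA-256 rather than Python’s built-in `hash()`, because string hashing is randomized per process and would give different seeds on each run.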

- Productized avatar/figurine packs — Offer fixed-scope packages (e.g., 10 figurine images + 1 box mockup), priced per pack and delivered with simple licensing terms.

- E-commerce retainer — Monthly deliverables for catalogs: a set style prompt, a reference template, and batch API processing, saving clients studio costs.

- Prompt packs / micro-courses — Sell curated prompt libraries with use examples and before/after galleries.

- Viral social campaigns — Turn creator selfies into collectible figurines or micro-stories for shareable campaigns.

- Design-to-print services — Use Pro for mockups, then finalize artwork for print in a design application.

How to sell: capture emails with a free 30-prompt PDF, publish before/after case studies with conversion metrics, and show time saved as ROI.

Nano Banana — Legal & Ethical Guidelines Every Creator Must Follow

Use these as policy preconditions before commercializing generated images:

- Likeness & releases: For commercial use of a person’s image, secure explicit model releases, much as you would secure permission before reproducing someone else’s private writing.

- Copyright & trademark: Avoid generating close copies of copyrighted logos or protected artwork. Treat brand logos as IP-sensitive tokens.

- Deepfake risk: Extremely realistic edits of public figures may be deceptive. Add provenance metadata and include disclaimers.

- Platform rules: E-commerce platforms have dynamic policies on AI images. Always review current rules for product listings and marketing.

- Provenance & transparency: Embed C2PA/SynthID metadata and add an “AI-generated” disclosure when publishing.

Publisher policy suggestion: Add a short “AI used” disclosure on product pages; preserve originals; state the generation method, and include basic provenance info.

Nano Banana — Troubleshooting & Best Practices for Flawless Edits

Problem: Artifacting or odd textures.

Fix: Increase source resolution, reduce sample randomness (lower temperature/guidance variance), use a second pass with “realistic texture” tokens.

Problem: Garbled or illegible text on labels.

Fix: Use Nano Banana Pro, include an explicit clause “render text exactly as written: [TEXT]”; if still noisy, overlay the final text via design software.

Problem: Subject mismatch across images.

Fix: Upload more high-quality references, instruct the model to “maintain nose shape and eye color,” and use consistent seeds for deterministic sampling where possible.

Problem: Edit not applied where expected.

Fix: Use the draw-to-prompt mask to precisely target patch tokens; keep instruction compact and explicit.

Best practice: iterate with small, targeted edits, sample multiple variations, prefer short conditioning sequences, and lock unmodified regions with strong masks.

Nano Banana — Real-World Case Studies That Show Results

E-commerce case study: “Cut photography costs by 60% — generated 300 product images in 24 hours.” Include before/after visuals, time logs, and conversion metrics.

Influencer campaign: “Figurine avatar campaign — +25K followers in 10 days.” Document campaign assets and share the prompt and sampling procedure.

Real estate staging: “Virtual staging with Nano Banana Pro reduced staging time by 80% for 20 listings.” Provide a compliance disclaimer.

FAQs

A: Google usually offers basic Nano Banana features for free in the Gemini app, but Nano Banana Pro and higher-resolution exports may need a paid tier or be limited by daily quotas. Always check Google’s official pages for the latest access rules.

A: Often yes, but follow Google’s licensing and platform policies. When using people’s faces, always get a signed model release. For complex legal questions, consult an attorney.

A: The model sometimes adds plausible context using its world knowledge (e.g., era-accurate objects). This can be useful but requires fact-checking before publishing.

A: Standardize your reference images, use the same style prompt and camera settings, upload consistent references for each SKU, and consider an API or enterprise plan for batch processing.

Conclusion

Nano Banana recasts image editing as a conditioned generative sequence problem: reference embeddings + mask-based conditioning + compact instruction tokens produce rapid, repeatable visual outputs. For creators and businesses, the model’s speed, mobile-first UX, and multi-reference consistency unlock productized creative services and rapid A/B experimentation. Nano Banana Pro extends the model’s utility into production: improved glyph rendering, higher-fidelity decodes, and metadata for provenance. Yet with power comes responsibility — always disclose AI use, secure releases for likenesses, and apply provenance tools. If you publish this pillar, lead with the 30+ prompt pack, show before/after galleries, include concrete ROI case studies, and maintain a monthly update cadence. That mix — technical clarity, practical prompts, monetization paths, and ethical guardrails — is what readers, search engines, and decision-makers will value.