Introduction

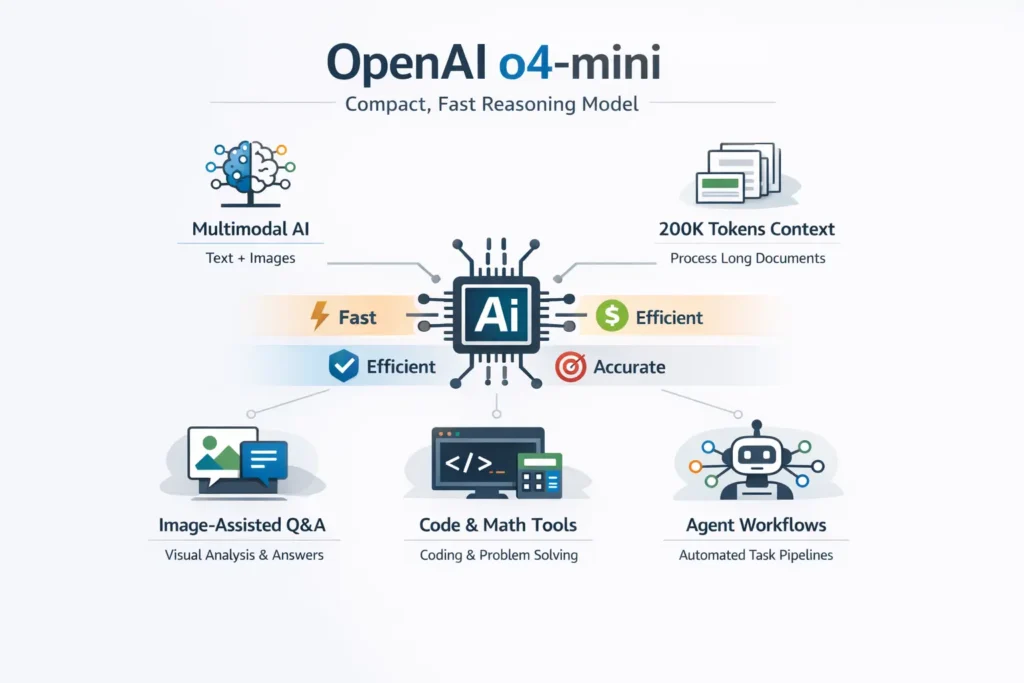

Is OpenAI o4-mini confusing you with specs and benchmarks? This guide covers its reasoning speed, multimodal capabilities, and real-world results, organized into 7 key insights to get you started quickly.

o4-mini is OpenAI’s compact, low-latency reasoning model in the o-series. It’s optimized for multimodal tasks (text + images), high throughput, and very large contexts. Use it for image-assisted Q&A, developer tooling, long-document analysis, and agent-style orchestration, but always ground outputs with retrieval, deterministic checks (validators/tools), and human review to reduce hallucinations.

Why OpenAI o4-mini Matters: Who Should Read This Guide

If you build developer products, visual tools, or high-throughput services, you need one clear, practical reference that combines official specs with real examples and production patterns. This guide is written to be accessible but precise enough for engineers and product managers to run a targeted proof-of-concept (POC), evaluate tradeoffs, and plan a migration.

OpenAI o4-mini Quick Facts & Official Specs You Need to Know

- Model family: OpenAI o-series — o4-mini.

- Primary use cases: reasoning, coding helpers, math, multimodal tasks (images + text).

- Context window: Up to 200,000 tokens (official maximum).

- Max output tokens: Up to 100,000 tokens in one response (official maximum).

- Vision support: Yes — images are part of the reasoning pipeline; the model can reason about crops and regions.

- Positioning: Compact, lower-latency, cost-efficient reasoning model for production workloads.

Practical note: Official maxima are upper bounds. Your stack (SDKs, proxy servers, request timeouts) often imposes smaller effective limits — always test in your environment under load.
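One way to act on this note is a pre-flight budget check before each request. The sketch below is illustrative only: `estimate_tokens` uses a rough 4-characters-per-token heuristic rather than a real tokenizer, `stack_limit_tokens` is a hypothetical setting for whatever your proxy or SDK allows, and the code assumes that prompt and output tokens share the same context window.

```python
OFFICIAL_CONTEXT_TOKENS = 200_000      # official maximum quoted above
OFFICIAL_MAX_OUTPUT_TOKENS = 100_000   # official maximum quoted above


def estimate_tokens(text: str) -> int:
    """Rough heuristic (assumption): about 4 characters per token."""
    return max(1, len(text) // 4)


def fits_budget(prompt: str, max_output_tokens: int,
                stack_limit_tokens: int = OFFICIAL_CONTEXT_TOKENS) -> bool:
    """Return True if the request fits both the official and the stack limit."""
    if max_output_tokens > OFFICIAL_MAX_OUTPUT_TOKENS:
        return False
    # The effective limit is whichever bound is smaller.
    effective_limit = min(OFFICIAL_CONTEXT_TOKENS, stack_limit_tokens)
    return estimate_tokens(prompt) + max_output_tokens <= effective_limit
```

For real traffic, replace the heuristic with the token counts your SDK reports and set `stack_limit_tokens` from load-test results.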

Release Timeline & Key Updates for OpenAI o4-mini You Should Know

- Announcement: o3 and o4-mini were publicly introduced in April 2025.

- Key capability announced: “Thinking with images” — models can include images in chain-of-thought computations (crop, zoom, rotate, region focus).

- Operational caution: Model snapshots are not immutable. There have been release adjustments and at least one rollback event tied to content flags. Build monitoring and rollback plans into your deployment strategy.

What OpenAI o4-mini Excels At: Key Strengths & Use Cases

Short answer: Fast, cheaper reasoning with image understanding and large-context support.

- High throughput & low latency: Designed for many concurrent calls per second (good for triage bots, streaming UIs, and server endpoints).

- Multimodal reasoning: Useful for whiteboard Q&A, invoice/diagram analysis, design review, and screenshots because the model can incorporate images into its internal reasoning.

- Large-document analysis: The very large context window makes it possible to analyze long manuals, big contracts, or multi-file bundles without heavy chunking — though cost and latency must be considered.

In practice, combine o4-mini with deterministic tooling (e.g., Python runtimes, calculators, OCR) when accuracy matters.

How to Read OpenAI o4-mini Benchmarks: Tips for Accurate Evaluation

Benchmarks are helpful comparisons, but never a substitute for domain testing.

- Benchmarks = upper bound, not guarantee. They show capability on controlled datasets, not reliability on your proprietary data.

- Measure real metrics: latency, hallucination rate (factually incorrect outputs), cost per useful response, and throughput under production load.

- Tool-enabled runs matter: Many failures disappear when you allow the model to call deterministic tools (a calculator, a script, a database). If your workflow benefits from external validation, benchmark in that configuration.

Rule of thumb: Design a benchmark corpus from your real user data and run side-by-side tests.
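A side-by-side test needs a shared scorecard. The following is a minimal sketch of one, assuming each run has already been labeled (by a reviewer or a validator) as hallucinated and/or useful. The `RunRecord` fields and the nearest-rank p95 are illustrative choices, not a standard.

```python
import math
from dataclasses import dataclass
from statistics import median


@dataclass
class RunRecord:
    latency_s: float     # wall-clock time for the call
    cost_usd: float      # billed cost for the call
    hallucinated: bool   # label from a reviewer or validator
    useful: bool         # did the response solve the user's task?


def summarize(records: list[RunRecord]) -> dict:
    """Aggregate the metrics listed above for one model configuration."""
    if not records:
        raise ValueError("need at least one record")
    latencies = sorted(r.latency_s for r in records)
    # Nearest-rank 95th percentile.
    p95_index = math.ceil(0.95 * len(latencies)) - 1
    useful = sum(r.useful for r in records)
    total_cost = sum(r.cost_usd for r in records)
    return {
        "median_latency_s": median(latencies),
        "p95_latency_s": latencies[p95_index],
        "hallucination_rate": sum(r.hallucinated for r in records) / len(records),
        "cost_per_useful_response_usd": total_cost / useful if useful else float("inf"),
    }
```

Run `summarize` once per model on the same corpus and compare the resulting dictionaries field by field.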

Pricing & Deployment Considerations

o4-mini is positioned as cost-efficient, but the true cost depends on how you use it.

- Token volume matters: Longer contexts + long outputs = higher cost.

- Call pattern matters: Many short calls vs. fewer long calls change billing and latency tradeoffs. Batching and caching are your friends.

- Tool usage adds cost: If you run external tools (image processing, Python runs), those add compute and time.

- A/B decisions: Use o4-mini where throughput and latency are primary; for rare, single-call tasks where max accuracy matters, you may prefer a larger model. Always A/B test.
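The interaction between token volume, call pattern, and caching is easier to see with a back-of-the-envelope estimator. This is only a sketch: the per-million-token prices are parameters you must fill in from the current price list (none are assumed here), and `cache_hit_rate` models a hypothetical application-level cache that avoids the call entirely.

```python
def estimate_monthly_cost(calls_per_day: int,
                          input_tokens_per_call: int,
                          output_tokens_per_call: int,
                          usd_per_million_input: float,
                          usd_per_million_output: float,
                          cache_hit_rate: float = 0.0,
                          days: int = 30) -> float:
    """Estimate model spend in USD; tool and infrastructure costs are excluded."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    # Calls answered from the cache are never sent to the model.
    billable_calls = calls_per_day * days * (1.0 - cache_hit_rate)
    cost_per_call = (input_tokens_per_call * usd_per_million_input
                     + output_tokens_per_call * usd_per_million_output) / 1_000_000
    return billable_calls * cost_per_call
```

Comparing the result for a few plausible configurations (long context vs. retrieved snippets, cached vs. uncached) usually shows which lever matters most for your workload.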

Top Real-World Use Cases & Architectures for OpenAI o4-mini You Can Apply Today

Top use cases

- Image-assisted Q&A: Design reviews, diagrams, and screenshots.

- Developer tooling: Commit message generation, code triage, test suggestion.

- Agent workflows: Triage bots that call tools (lint/test/Python) and return structured outputs.

- Large-document analysis: Summarization, extraction, cross-referencing.

Architecture patterns

Pattern A — RAG + o4-mini (retrieval-augmented generation)

- Vector DB → retrieve top-k documents.

- Build a compact context with the retrieved passages.

- Call o4-mini with instructions to cite sources and show line numbers.

- Re-check outputs with a deterministic validator (regex, calculator, or code).
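The four steps of Pattern A can be sketched end to end as follows. Several pieces are stand-ins: `retrieve_top_k` ranks by shared words instead of querying a real vector DB, `call_model` is a hypothetical callable that wraps your actual o4-mini request, and the validator only checks that cited source numbers exist (it does not verify that the claim matches the source).

```python
import re
from typing import Callable


def retrieve_top_k(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Toy retriever (stand-in for a vector DB): rank by shared lowercase words."""
    query_words = set(query.lower().split())
    ranked = sorted(documents,
                    key=lambda d: len(query_words & set(d.lower().split())),
                    reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Build a compact context with numbered sources and a citation instruction."""
    sources = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return ("Answer using only the sources below. Cite each claim as [n].\n\n"
            f"Sources:\n{sources}\n\nQuestion: {query}")


def citations_valid(answer: str, num_sources: int) -> bool:
    """Deterministic check: at least one citation, and every cited number exists."""
    cited = [int(n) for n in re.findall(r"\[(\d+)\]", answer)]
    return bool(cited) and all(1 <= n <= num_sources for n in cited)


def answer_with_rag(query: str, documents: list[str],
                    call_model: Callable[[str], str], k: int = 2) -> dict:
    passages = retrieve_top_k(query, documents, k)
    answer = call_model(build_prompt(query, passages))
    return {"answer": answer, "grounded": citations_valid(answer, len(passages))}
```

Responses with `grounded` set to `False` are candidates for a retry or for human review rather than direct display.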

Pattern B — Tool-mediated orchestration

User input → orchestrator → run linters / tests / Python → o4-mini synthesizes results and returns structured actions. Use CI/sandbox to validate patches.
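A minimal sketch of this flow, under stated assumptions: tools are ordinary command lines run with `subprocess`, `call_model` is a hypothetical callable wrapping your o4-mini request, and the model is asked to reply with a JSON list of actions. Anything that fails to parse is routed to review instead of being executed.

```python
import json
import subprocess
import sys
from typing import Callable


def run_tool(name: str, argv: list[str], timeout_s: int = 30) -> dict:
    """Run one deterministic tool and capture its exit code and output tail."""
    try:
        proc = subprocess.run(argv, capture_output=True, text=True, timeout=timeout_s)
    except (subprocess.TimeoutExpired, OSError) as exc:
        return {"tool": name, "exit_code": None, "output": str(exc)}
    return {"tool": name, "exit_code": proc.returncode,
            "output": (proc.stdout + proc.stderr)[-2000:]}


def orchestrate(user_request: str, tools: dict[str, list[str]],
                call_model: Callable[[str], str]) -> dict:
    """Run every tool, then ask the model to turn the results into actions."""
    results = [run_tool(name, argv) for name, argv in tools.items()]
    prompt = ("Given the request and tool results, reply with a JSON list of actions.\n"
              f"Request: {user_request}\n"
              f"Tool results: {json.dumps(results)}")
    try:
        actions = json.loads(call_model(prompt))
    except json.JSONDecodeError:
        actions = None
    if not isinstance(actions, list):
        # Unstructured output is never acted on automatically.
        return {"status": "needs_review", "actions": [], "tool_results": results}
    return {"status": "ok", "actions": actions, "tool_results": results}


# Example tool table; the command here is a placeholder for a real linter or test run.
EXAMPLE_TOOLS = {"tests": [sys.executable, "-c", "print('2 passed')"]}
```

In a real deployment the commands would run inside the CI job or sandbox mentioned above, not on the orchestrator host.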

Pattern C — Image pre-processing

Image normalization → crop/deskew → OCR → pass both image and OCR text to o4-mini for extraction. Validate numeric fields with deterministic checks.
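The last two stages of this pipeline might look like the sketch below. The message shape (a `text` part plus an `image_url` part carrying a base64 data URL) follows the chat-style image input format as commonly documented, but treat it as an assumption and check it against the current API reference. The invoice field names (`line_items`, `total`) are hypothetical.

```python
import base64
from decimal import Decimal, InvalidOperation
from typing import Optional


def build_messages(image_bytes: bytes, ocr_text: str, mime: str = "image/png") -> list:
    """Package the pre-processed image and its OCR text into one user message."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return [{
        "role": "user",
        "content": [
            {"type": "text",
             "text": "Extract line items and total as JSON.\n\nOCR text:\n" + ocr_text},
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
        ],
    }]


def parse_amount(text: str) -> Optional[Decimal]:
    """Parse a currency string such as '$1,200.50'; return None if it is not numeric."""
    cleaned = text.replace(",", "").replace("$", "").strip()
    try:
        return Decimal(cleaned)
    except InvalidOperation:
        return None


def validate_invoice(fields: dict) -> list[str]:
    """Deterministic check: every amount parses and line items sum to the total."""
    errors = []
    items = [parse_amount(x) for x in fields.get("line_items", [])]
    total = parse_amount(fields.get("total", ""))
    if total is None:
        errors.append("total is not a number")
    if any(item is None for item in items):
        errors.append("a line item is not a number")
    if not errors and sum(items, Decimal("0")) != total:
        errors.append("line items do not sum to total")
    return errors
```

An empty error list means the extracted numbers are internally consistent; it does not prove they match the document, so sampling for human review is still worthwhile.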

OpenAI o4-mini Migration Guide: Move from o3 / o3-mini Smoothly

Checklist

- Define evaluation corpus: collect the real prompts and documents you care about.

- Side-by-side A/B tests: measure latency, cost, accuracy, and hallucination rate.

- Tool & image testing: validate crop and zoom behavior; ensure OCR integration works.

- Safety checks: test adversarial prompts and failure modes.

- Rollback plan: use feature flags and throttling to revert quickly if behavior changes.
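For the rollback item, one common approach is a percentage rollout with a kill switch. The sketch below assumes a hypothetical in-process flag (`rollout_percent`, `kill_switch`) rather than any particular feature-flag service, and uses a hash of the user ID so that each user consistently sees the same model.

```python
import hashlib


def use_candidate_model(user_id: str, rollout_percent: int,
                        kill_switch: bool = False) -> bool:
    """Deterministically place a user inside or outside the rollout cohort."""
    if kill_switch or rollout_percent <= 0:
        return False
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100   # stable bucket 0..99
    return bucket < rollout_percent


def pick_model(user_id: str, rollout_percent: int, kill_switch: bool = False,
               candidate: str = "o4-mini", incumbent: str = "o3-mini") -> str:
    """Return the model name to call for this user."""
    if use_candidate_model(user_id, rollout_percent, kill_switch):
        return candidate
    return incumbent
```

Lowering `rollout_percent` throttles exposure gradually, while `kill_switch` reverts all traffic to the incumbent in one step.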

Quick rollout (90-day) plan

- Weeks 0–2: POC — 1k prompts, basic metrics.

- Weeks 3–4: A/B test vs incumbent; iterate prompts.

- Weeks 5–8: Integrate tools and logging.

- Weeks 9–12: Beta release with limited traffic; model-version monitoring.

- Week 13+: Full rollout and monthly re-evaluation.

OpenAI o4-mini Limitations, Safety, and Known Issues You Should Know

Main limits

- Hallucinations: Still possible; grounding is required.

- Context practicalities: The official 200k token window is large, but your production stack may limit it.

- Operational risk: Model updates or rollbacks can change behavior; monitor release notes and set alerts.

Safety suggestions

- Use retrieval plus citation demands.

- Add deterministic validators (regex, calculators, unit checks).

- Human-in-the-loop for high-risk decisions (medical, legal, financial).

- Log inputs, outputs, model version, and retrieval snapshots for provenance.
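The provenance item can be as simple as one structured log line per call. This is a sketch with hypothetical field names: it stores the prompt, output, and model version as given, and adds a hash of the retrieved passages so that two calls can be compared for identical retrieval snapshots.

```python
import hashlib
import json
import time
from typing import Optional


def provenance_record(prompt: str, output: str, model_version: str,
                      retrieved_passages: list[str],
                      now: Optional[float] = None) -> dict:
    """Build one audit record for a single model call."""
    snapshot = json.dumps(retrieved_passages, ensure_ascii=False)
    return {
        "timestamp": time.time() if now is None else now,
        "model_version": model_version,
        "prompt": prompt,
        "output": output,
        "retrieved_passages": list(retrieved_passages),
        "retrieval_sha256": hashlib.sha256(snapshot.encode("utf-8")).hexdigest(),
    }


def to_log_line(record: dict) -> str:
    """Serialize with sorted keys so log lines are stable and easy to diff."""
    return json.dumps(record, sort_keys=True, ensure_ascii=False)
```

If prompts may contain personal data, consider logging a hash or a redacted copy instead of the raw text.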

OpenAI o4-mini: Head-to-Head Quick Comparison with Other Models

- o4-mini: compact, high-throughput reasoning; large context; multimodal.

- o3: the larger reasoning model introduced alongside o4-mini (different tradeoffs in latency/throughput).

- gpt-5-mini: vendor-specific next-gen mini; check the vendor docs for context and vision support.

Typical tradeoff: o4-mini offers lower latency and cost for many reasoning tasks; larger models may be better for rare, very high-accuracy needs.

Pros & Cons

Pros

- Compact and cost-efficient for production workloads.

- Multimodal — images are part of the reasoning.

- A very large context window enables long-document analysis.

Cons

- Hallucinations still occur — grounding required.

- Large token usage can be costly.

- Platform updates or rollbacks can change behavior; test after updates.

FAQs

Q1: How large are o4-mini's context window and maximum output?

Answer: Official docs list up to 200,000 tokens for the o4-mini context window and up to 100,000 output tokens. Effective limits depend on your SDK, proxy, or platform timeouts, so test them.

Q2: Can o4-mini work with images?

Answer: Yes. o4-mini supports image inputs and can reason about regions (crop/zoom). For best results, pre-process the image, provide OCR text where applicable, and tell the model which area to inspect.

Q3: Is o4-mini reliable enough for high-risk decisions?

Answer: Not by itself. Use retrieval grounding, strong deterministic validators, and human reviewers for medical, legal, or financial decisions. Treat o4-mini as a decision-support tool, not an authoritative oracle.

Q4: Can o4-mini's behavior change after I deploy it?

Answer: Yes. Model updates and occasional rollbacks happen. Maintain release-note monitoring and a rollback plan (feature flags, throttles).

Q5: How does o4-mini perform on math and coding?

Answer: OpenAI and independent reviewers report strong math and coding performance for o4-mini, especially with tool access (e.g., a Python interpreter). Use benchmarks as a guide and validate on your domain.

Conclusion

OpenAI o4-mini is a practical reasoning model that balances strong problem-solving ability with low latency. Its image reasoning and large context window unlock product features like visual QA and long-document analysis. Benchmarks are only the start: measure hallucination rates on your domain, instrument provenance, and have an operational plan for model updates.