Introduction

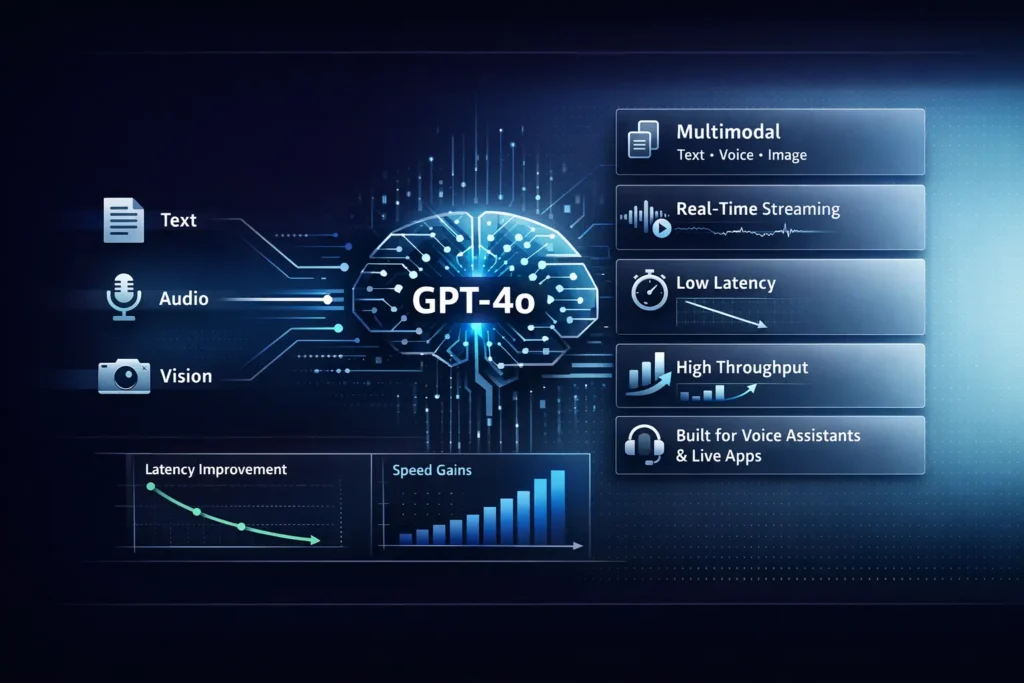

GPT-4o (GPT-4 Omni) marks a step forward in practical multimodal systems design: a single inference endpoint that accepts symbolic text, continuous audio waveforms, and raster images, and produces coherent structured outputs in near-real time. Instead of building a pipeline that chains a discrete ASR model, a visual encoder, and a core language model, GPT-4o lets engineers express multimodal intents in a unified representation and rely on a single transformer-style model to fuse modalities and emit contextual responses. For applied NLP teams building live assistants, in-vehicle agents, or accessible narration tools, this reduces cross-model orchestration and the risk of format-translation errors.

But model choice is still an empirical engineering decision. Latency characteristics, token throughput, output calibration, and alignment with domain semantics vary with prompt shape, session context, and production configuration. This guide reframes GPT-4o’s capabilities in NLP design terms, supplies repeatable benchmarking harnesses and evaluation metrics, outlines production patterns for streaming and multimodal flows, and offers an operations-first checklist to help practitioners choose, measure, and validate GPT-4o for their use cases.

Hidden Features of GPT-4o (GPT-4 Omni) You Didn’t Know

What it is: GPT-4o (GPT-4 Omni) is a multimodal generative model designed for real-time inputs and outputs, fusing text, audio, and images into a single unified pipeline for lower-latency interactive applications.

Key strengths include reducing cross-model context switching by performing early- and mid-level multimodal fusion, supporting incremental decoding to improve perceived responsiveness, and yielding higher tokens-per-second throughput for many conversational and streaming tasks. This benefits dialogue systems, voice assistants, and combined vision-and-dialogue apps.

Main tradeoffs: For tasks requiring strictly deterministic multi-step symbolic reasoning or strict reproducibility, other model variants or task-specific ensembles may still outperform GPT-4o. Latency and behavior depend on prompt engineering, session context management, and whether you use streaming or batch requests. Validate on your own datasets.

Who should keep reading: NLP engineers prototyping live voice/camera assistants, product leads evaluating real-time options, and researchers needing reproducible benchmark methodology and safety guardrails.

How to use the guide: Follow features → reproducible benchmarks → decision matrix → API patterns → safety → checklist → publishable outputs.

Multimodal Inputs Made Simple: Images + Audio + Text

GPT-4o was designed as an “omni” model: a single multimodal transformer-style system capable of ingesting discrete tokens (text), continuous signals (audio), and structured visual token sequences (images), and producing coherent responses optimized for interactive latency. Below, we explain the key capabilities in NLP/ML engineering terms and why they matter.

Native Multimodality: How GPT-4o Combines Text, Voice & Vision

From a systems perspective, GPT-4o embodies a cross-modal representation learning approach: audio waveforms are encoded into embeddings that the model aligns with text tokens, and image pixels are converted into visual tokens or embeddings that share a latent space with text. This enables joint reasoning across modalities: a single decoding pass can reference spoken content, visual cues, and the surrounding text context. The practical benefit is a simpler architecture: fewer translation layers, less serialization/deserialization, and fewer network round trips.

Lightning-Fast Voice Reasoning That Feels Magical

GPT-4o supports incremental, partial decoding—partial outputs are emitted as soon as decoding begins—enabling low first-response latency. For streaming audio inputs, you can send audio chunks (frames) and get progressive hypotheses and refinements. That behavior is crucial for natural conversational UX: showing interim tokens reduces perceived latency and allows early UI affordances (hint suggestions, provisional actions). Architecturally, streaming requires careful turn-taking, silence detection, and robust restart/late-arrival handling.
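The silence-detection part of turn-taking can be sketched with a simple energy threshold. This is a minimal illustration, not the method any particular SDK uses: the function names, the `silence_rms` threshold, and the `hangover_frames` count are all assumptions chosen for the example, and production systems usually rely on a trained voice-activity detector.

```python
import math
from typing import Iterable, Sequence


def rms(frame: Sequence[float]) -> float:
    """Root-mean-square energy of one audio frame (samples in [-1, 1])."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def end_of_turn_index(
    frames: Iterable[Sequence[float]],
    silence_rms: float = 0.01,   # assumed threshold; tune per microphone
    hangover_frames: int = 3,    # assumed number of quiet frames that ends a turn
) -> int:
    """Return the index of the frame at which the turn is considered over.

    The turn ends once speech has been heard and `hangover_frames`
    consecutive frames fall below `silence_rms`. Returns -1 if no end
    of turn is detected in the given frames.
    """
    heard_speech = False
    quiet_run = 0
    for i, frame in enumerate(frames):
        if rms(frame) >= silence_rms:
            heard_speech = True
            quiet_run = 0
        elif heard_speech:
            quiet_run += 1
            if quiet_run >= hangover_frames:
                return i
    return -1
```

Leading silence is ignored until speech is first heard, so a user who pauses before talking does not trigger an empty turn.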

Inside GPT-4o: How Low Latency Powers Real-Time AI

The model’s runtime optimizations (operation fusion, efficient batching for streaming scenarios, optimized attention kernels, etc.) result in lower time-to-first-token and higher tokens/sec throughput in many interactive workloads. In practice, this reduces pressure on client-side batching and simplifies UX, but exact gains depend strongly on prompt length, number of modalities, encoding overhead, and network topology.

Inside GPT-4o: Long-Term Memory Patterns Explained

GPT-4o supports large session contexts. In NLP production patterns, combine a short, rolling conversational buffer with an external vector store for long-term memory: retrieve, condense (or summarize), then inject compact context. Avoid naive feeding of huge histories—use retrieval-augmented generation (RAG) or dynamic context compression to control token usage and preserve latency.
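The buffer-plus-retrieval pattern above can be sketched in a few lines. Everything here is a stand-in chosen for illustration: `SessionMemory` is a hypothetical class, the bag-of-words cosine similarity replaces a real embedding model and vector store, and truncation to `max_chars` replaces a real summarization step.

```python
import math
from collections import Counter, deque
from typing import Deque, List


def _vec(text: str) -> Counter:
    # Toy bag-of-words vector; a real system would use learned embeddings.
    return Counter(text.lower().split())


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SessionMemory:
    """Short rolling buffer plus a searchable archive of evicted turns."""

    def __init__(self, buffer_turns: int = 4) -> None:
        self.buffer: Deque[str] = deque(maxlen=buffer_turns)
        self.archive: List[str] = []

    def add_turn(self, text: str) -> None:
        # Move the oldest turn to the archive just before the deque evicts it.
        if len(self.buffer) == self.buffer.maxlen:
            self.archive.append(self.buffer[0])
        self.buffer.append(text)

    def build_context(self, query: str, top_k: int = 2, max_chars: int = 200) -> List[str]:
        """Retrieve relevant archived turns, condense them, then append the buffer."""
        q = _vec(query)
        scored = [(_cosine(q, _vec(t)), t) for t in self.archive]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        retrieved = [t[:max_chars] for score, t in scored[:top_k] if score > 0]
        return retrieved + list(self.buffer)
```

The returned list is what would be injected into the prompt: a small number of condensed long-term items followed by the most recent turns.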

Developer Ergonomics & Product Integration

OpenAI exposes streaming, function-calling primitives, and multimodal input formats. From an engineering standpoint, this means you can integrate GPT-4o into web/mobile/edge clients with streaming websockets, incremental chunking, and structured output schemas. Favor explicit schema prompts and lightweight parsing to avoid brittle post-processing.
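As a rough illustration of lightweight parsing against an explicit schema, the sketch below validates a JSON reply using only the standard library. The field names in `EXPECTED_FIELDS` are hypothetical and would be replaced by whatever schema your prompt requests; nothing here depends on a specific SDK.

```python
import json
from typing import Any, Dict

# Hypothetical schema for illustration: field name -> required Python type.
EXPECTED_FIELDS = {"intent": str, "confidence": float, "steps": list}


def parse_structured_reply(raw: str) -> Dict[str, Any]:
    """Parse a model reply and check it against EXPECTED_FIELDS.

    Raises ValueError with a specific message instead of silently
    accepting a malformed reply.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    for name, expected_type in EXPECTED_FIELDS.items():
        if name not in data:
            raise ValueError(f"missing field: {name}")
        value = data[name]
        # JSON has one number type, so accept integers where a float is expected.
        if expected_type is float and isinstance(value, int) and not isinstance(value, bool):
            value = float(value)
        if not isinstance(value, expected_type):
            raise ValueError(f"field {name!r} should be {expected_type.__name__}")
        data[name] = value
    return data
```

Failing loudly on a schema mismatch makes it easy to retry the request or fall back, rather than passing partial data downstream.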

Real Benchmarks: How Fast Is GPT-4o Really?

For production decisions, empirical benchmarking is non-negotiable. Below is a reproducible plan and a rationale for each test type, framed for NLP teams.

Why Benchmarks Matter

Marketing or single-run claims mask variability introduced by client-side audio encoding, file upload mechanics, network tails, and prompt structure. Benchmarks reveal cold vs warm cache behavior, concurrency thresholds where throughput degrades, and modalities that disproportionately increase latency. They also quantify tradeoffs between real-time responsiveness and absolute throughput.

Reproducible Testing Made Easy with GPT-4o

Environment control

- Pick a single cloud region and collocate your benchmark harness to reduce network jitter.

- Pin your SDK and API versions. Record package versions in the harness repo.

- Use consistent audio codecs and image sizes for all runs.

- Disable automatic retry/backoff in timing experiments to measure raw behavior.

Single-Turn Latency

- Send 1,000 sequential prompt requests (no concurrency).

- Measure:

  - upload_ms (if sending image/audio),

  - server_receive_ms (if available),

  - time_to_first_token_ms,

  - time_to_final_token_ms.

- Run cold (after a significant idle period) and warm (after 100 requests) tests.

- Report median, 95th-percentile, and max latencies.
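The single-turn measurements above can be sketched as a small harness. `fake_stream` is a stand-in that only simulates a streaming reply; in a real run you would replace it with your own client call that yields tokens as they arrive. The 95th percentile uses the nearest-rank method, which is one of several common definitions.

```python
import statistics
import time
from typing import Callable, Dict, Iterator, List


def fake_stream(prompt: str) -> Iterator[str]:
    """Stand-in for a streaming model call (assumption, not a real API)."""
    for token in prompt.split():
        time.sleep(0.001)
        yield token


def time_one_request(stream_fn: Callable[[str], Iterator[str]], prompt: str) -> Dict[str, float]:
    """Measure time to first and final token for one streamed request."""
    start = time.perf_counter()
    first = None
    n_tokens = 0
    for _ in stream_fn(prompt):
        if first is None:
            first = time.perf_counter()
        n_tokens += 1
    end = time.perf_counter()
    return {
        "time_to_first_token_ms": ((first if first is not None else end) - start) * 1000,
        "time_to_final_token_ms": (end - start) * 1000,
        "tokens": n_tokens,
    }


def summarize(values: List[float]) -> Dict[str, float]:
    """Median, nearest-rank 95th percentile, and max of a non-empty list."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer arithmetic
    return {
        "median": statistics.median(ordered),
        "p95": ordered[rank - 1],
        "max": ordered[-1],
    }
```

A sequential run then looks like `summarize([time_one_request(fake_stream, p)["time_to_first_token_ms"] for p in prompts])`, repeated once for the cold set and once for the warm set.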

Throughput Test

- Use concurrent workers: try 8, 16, 32, 64.

- Measure tokens/sec and responses/sec.

- Increase concurrency until error rate or tail latency rises; record the breakpoint.
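A concurrency sweep along these lines can be sketched with a thread pool. `fake_call` is a placeholder that sleeps and returns a token count; swap in your real client. For brevity the breakpoint here is defined by error rate alone (the `max_error_rate` value is an assumption); a fuller harness would also track tail latency per level.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Optional


def fake_call(prompt: str) -> int:
    """Stand-in for a model call; returns the number of tokens generated."""
    time.sleep(0.002)
    return len(prompt.split())


def run_level(call_fn: Callable[[str], int], prompts: List[str], workers: int) -> Dict[str, float]:
    """Run all prompts (non-empty list) at one concurrency level."""
    start = time.perf_counter()
    tokens = 0
    errors = 0
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(call_fn, p) for p in prompts]
        for future in futures:
            try:
                tokens += future.result()
            except Exception:  # count any failure; narrow this for a real client
                errors += 1
    elapsed = time.perf_counter() - start
    return {
        "workers": workers,
        "tokens_per_sec": tokens / elapsed,
        "responses_per_sec": (len(prompts) - errors) / elapsed,
        "error_rate": errors / len(prompts),
    }


def find_breakpoint(results: List[Dict[str, float]], max_error_rate: float = 0.01) -> Optional[float]:
    """Return the first worker count whose error rate exceeds the limit."""
    for result in results:
        if result["error_rate"] > max_error_rate:
            return result["workers"]
    return None
```

Running `[run_level(fake_call, prompts, w) for w in (8, 16, 32, 64)]` and passing the list to `find_breakpoint` gives the concurrency level to record.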

Accuracy Tests

- Use domain-relevant datasets (MMLU-style or task-specific benchmarks).

- Report accuracy %, confidence calibration (e.g., expected calibration error).

- When evaluating multimodal tasks, use curated pairs (image+question, audio+prompt) and measure task success, e.g., extraction F1, grounding accuracy.
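Two of the metrics named above, expected calibration error and extraction F1, are small enough to compute by hand. The sketch below uses equal-width confidence bins for ECE and set overlap for F1; the function names and the default of 10 bins are choices made for this example.

```python
from typing import Iterable, List, Sequence, Set


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], n_bins: int = 10
) -> float:
    """ECE with equal-width bins: weighted mean of |avg confidence - accuracy|."""
    bins: List[list] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(avg_conf - accuracy)
    return ece


def extraction_f1(predicted: Iterable[str], gold: Iterable[str]) -> float:
    """Set-based F1 between predicted and gold extracted items."""
    pred_set: Set[str] = set(predicted)
    gold_set: Set[str] = set(gold)
    true_pos = len(pred_set & gold_set)
    if true_pos == 0:
        return 0.0
    precision = true_pos / len(pred_set)
    recall = true_pos / len(gold_set)
    return 2 * precision * recall / (precision + recall)
```

An ECE near zero means stated confidence tracks observed accuracy; a model that says 0.9 but is right 75% of the time contributes a gap of 0.15 for that bin.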

Multimodal Scenario

- For images: test with 2–5 MB images, varying resolution and compression.

- For audio: test with 5–30s clips at consistent bitrates.

- Log:

  - client_encoding_ms,

  - upload_ms,

  - server_parse_ms,

  - time_to_first_token_ms,

  - final_token_ms.
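One way to collect the client-side fields in this list is a small context-manager timer, sketched below. `StageTimer` is a hypothetical helper written for this example. Note that `server_parse_ms` cannot be measured from the client; it can only be logged if the service reports it.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator


class StageTimer:
    """Collects wall-clock durations (in ms) for named pipeline stages."""

    def __init__(self) -> None:
        self.timings_ms: Dict[str, float] = {}

    @contextmanager
    def stage(self, name: str) -> Iterator[None]:
        start = time.perf_counter()
        try:
            yield
        finally:
            # Recorded even if the stage raises, so failures still get timed.
            self.timings_ms[name] = (time.perf_counter() - start) * 1000


# Usage: wrap each client-side step; the sleeps stand in for real work.
timer = StageTimer()
with timer.stage("client_encoding_ms"):
    time.sleep(0.001)  # e.g. encode audio or resize an image
with timer.stage("upload_ms"):
    time.sleep(0.001)  # e.g. send the media to the server
```

After a request, `timer.timings_ms` is a flat dictionary that can be written directly as one CSV row.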

Reproducibility

- Publish harness code (Node/Python), raw CSV logs, and an environment README (region, network type, SDK versions, exact prompts).
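A minimal sketch of the logging side of this step: capture an environment record alongside the raw rows so that others can reproduce the run. The function names and the record layout are assumptions made for this example; `region` and `network` are values you supply, not something the code can detect.

```python
import csv
import io
import platform
from importlib import metadata
from typing import Dict, Iterable, List, TextIO


def environment_record(packages: Iterable[str], region: str, network: str) -> Dict[str, object]:
    """Describe the benchmark environment, including installed package versions."""
    versions: Dict[str, str] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = "not installed"
    return {
        "python": platform.python_version(),
        "platform": platform.platform(),
        "region": region,
        "network": network,
        "packages": versions,
    }


def write_csv(rows: List[Dict[str, object]], fh: TextIO) -> None:
    """Write raw measurement rows with a stable, sorted header."""
    fieldnames = sorted({key for row in rows for key in row})
    writer = csv.DictWriter(fh, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)


# Usage: in a real run, pass an open file instead of StringIO.
buffer = io.StringIO()
write_csv([{"test_name": "single_turn_cold", "time_to_first_token_ms": 120}], buffer)
```

Sorting the header keeps column order identical across runs, which makes diffs between published CSVs easier to read.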

Example Findings

Independent analyses often observe substantially lower time-to-first-token and improved streaming throughput for GPT-4o in real-time conversational setups versus older GPT-4 variants. However, for some deterministic reasoning benchmarks, other tuned variants remain competitive. Use your domain tests.

How to Publish Results

- Provide a clear table: test_name | model | first_token_ms (median) | final_token_ms | tokens_per_sec | accuracy_pct | concurrency | notes.

- Share raw CSVs and the harness repo to maximize credibility.

GPT-4o vs GPT-4 Turbo: Speed, Cost, and Reality Check

Use this decision matrix to match application requirements to models.

| Use case / requirement | Pick GPT-4o (Omni) | Pick GPT-4 / GPT-4 Turbo / other |
| --- | --- | --- |
| Real-time voice assistants, streaming chat | ✅ Best fit (low-latency + streaming) | ❌ Not ideal |
| Multimodal demos (camera + mic + text) | ✅ Simpler pipeline; single endpoint | ❌ More complex to stitch together |
| Heavy chain-of-thought / deterministic math | ⚠️ Benchmark; may be adequate | ✅ Prefer specialized reasoning-tuned variants |
| Cost-sensitive offline batch jobs | ⚠️ Evaluate cost/throughput tradeoffs | ✅ Older mini or server-optimized models may be cheaper |
| Deterministic personality tuning | ⚠️ Requires guardrail testing | ✅ Use stable prompt-tuned models |

Practical Playbook:

- If low-latency streaming + multimodality is core, pilot GPT-4o.

- If reproducible high-precision symbolic reasoning is core, benchmark GPT-4 and Turbo variants.

- For batch ingest jobs with enormous token counts, consider cheaper throughput-optimized models.

Safety & Personality Mitigation

Multimodal models expand the attack surface: Images and audio can carry PII, adversarial artifacts, or forged media. Treat multimodal data with the same threat-model rigor used for high-risk NLP systems.

Multimodal Data Risks & Mitigations

- PII in images/voice: Run automated redaction and detection (face/license-plate detection, named-entity detection in ASR transcripts) before storing raw data. Consider on-device preprocessing for sensitive flows.

- Consent & transparency: Surface recording indicators, require explicit consent, and provide actual deletion options.

- Encryption & access control: Encrypt media in transit and at rest, audit access logs, and minimize retention windows.

- Hallucination & overclaiming: Multimodal models can hallucinate details. For high-stakes domains (medical, legal), require human verification.
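As a rough illustration of the transcript-redaction step in the list above, the sketch below masks email addresses and phone-like numbers with regular expressions. This is a deliberately simple stand-in: the patterns are assumptions that will miss many formats, and a production pipeline would pair them with a proper named-entity detector as the text suggests.

```python
import re

# Simplistic patterns for illustration only; they do not cover all formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_transcript(text: str) -> str:
    """Replace emails and phone-like digit runs before the transcript is stored."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)
```

Redacting before storage, rather than at read time, means raw identifiers never reach logs or backups.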

Personality & Persuasion Risk

- Models tuned for helpfulness can drift into persuasive or manipulative tones. Use explicit system prompts and guardrails, and test for cross-modal persuasion vectors (for example, tone of voice combined with imagery) during red-team exercises.

Operational Safety

- Human review loops for risky actions.

- Minimal logging; redact raw media unless essential.

- Red-team the system: Image attacks, voice spoofing, adversarial prompts.

Real-World GPT-4o Use Cases: Apps You Didn’t Expect

Here are practical mini case studies described in NLP/architectural terms.

Live Customer Support Assistant

- What: Agent ingests customer voice + screenshot and suggests troubleshooting steps.

- Why GPT-4o: One model fuses spoken complaint and visual context to produce coherent, contextualized remediation steps rapidly.

- Pattern: Client streams audio + uploads screenshots; backend queues to GPT-4o; UI displays candidate responses; human agent edits before sending.

In-Car Voice Agent

- What: Driver asks for directions while the agent inspects dashcam frames.

- Why GPT-4o: Low-latency streaming + image-context reasoning.

- Pattern: on-device microphone → edge prefilter (NLP preprocessing, VAD) → edge server → GPT-4o. Offline fallbacks are required for safety.

Tutoring App

- What: Student shows worksheet, asks verbally; model explains steps and points to errors.

- Why GPT-4o: Combined visual parsing + stepwise verbal explanation.

- Pattern: Capture the image, run OCR as preprocessing if high precision is needed, stream audio, and request a structured explanation with numbered steps.

Accessibility Assistant

- What: Point a phone camera; the app narrates and answers Q&A via voice.

- Why GPT-4o: Realtime multimodal narration & follow-up Q&A.

- Pattern: Reduce data retention, provide immediate voice output, and allow the user to opt out of uploads.

Streaming Moderation Helper

- What: Monitor live stream segments for abuse and flag for human review.

- Why GPT-4o: Ability to reason across audio + visual context to reduce false positives.

- Pattern: Short sliding-window analysis with human escalation for high-confidence flags.
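The sliding-window pattern can be sketched as follows, assuming each stream segment has already been given an abuse score between 0 and 1 by an upstream model. The `window` size and `threshold` are illustrative values, and `flag_windows` is a hypothetical helper, not part of any API.

```python
from collections import deque
from typing import Deque, Iterable, List, Tuple


def flag_windows(
    scores: Iterable[float], window: int = 3, threshold: float = 0.8
) -> List[Tuple[int, float]]:
    """Return (end_index, mean_score) for each full window at or above threshold.

    Averaging over a short window smooths out single-segment spikes,
    so only sustained high scores are escalated to a human reviewer.
    """
    recent: Deque[float] = deque(maxlen=window)
    flags: List[Tuple[int, float]] = []
    for i, score in enumerate(scores):
        recent.append(score)
        if len(recent) == window:
            mean = sum(recent) / window
            if mean >= threshold:
                flags.append((i, mean))
    return flags
```

Each flagged index marks the last segment of the window, which gives reviewers a precise point in the stream to jump to.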

Each app requires tight safety checks, consent flows, and minimal logging.

Before You Launch GPT-4o: The Ultimate Checklist

- Prototype & benchmark: Small harness in one region; measure single-turn latency and tokens/sec.

- Multimodal flow testing: Test audio codecs, image sizes, and failure modes (blurry, occluded).

- Streaming UX: Partial token display, listening indicator, user stop/retry controls.

- Human handoff: Clear triggers for human escalation; implement queues.

- Privacy & consent: Consent UI, retention policy, opt-outs, and deletion APIs.

- Cost & fallback: Budget alerts and model-fallback rules to cheaper endpoints.

- Security & red teaming: Adversarial prompts, image perturbations, voice spoofing tests.

- Observability: Instrument latency, tokens, error rates, and false positives; avoid raw PII in logs.

- Release gating: Soft launch to a small cohort; iterate on metrics before broad roll-out.
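The cost-and-fallback item in this checklist can be sketched as a small routing function. The `primary` and `fallback` callables stand in for calls to two different endpoints, and the budget figures are values your own billing instrumentation would supply; all names here are hypothetical.

```python
from typing import Callable, Tuple


def call_with_fallback(
    prompt: str,
    primary: Callable[[str], str],
    fallback: Callable[[str], str],
    spent_usd: float,
    budget_usd: float,
) -> Tuple[str, str]:
    """Return (endpoint_used, reply).

    Route to the cheaper fallback when the budget is exhausted or when
    the primary call fails.
    """
    if spent_usd >= budget_usd:
        return "fallback", fallback(prompt)
    try:
        return "primary", primary(prompt)
    except Exception:  # broad for illustration; catch your client's error types
        return "fallback", fallback(prompt)
```

Returning the endpoint name alongside the reply makes it straightforward to log how often the fallback path is taken.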

The Numbers That Reveal GPT-4o’s Real Speed

| test_name | model | first_token_ms | final_token_ms | tokens_per_sec | accuracy_pct | concurrency | notes |
| --- | --- | --- | --- | --- | --- | --- | --- |
| single_turn_cold | GPT-4o | 120 | 820 | 450 | 89% | 1 | region=us-east-1 |
| sustained_16_workers | GPT-4o | 95 | 1100 | 2200 | 88% | 16 | streaming enabled |
| multimodal_upload | GPT-4o | 450 (upload) | 1300 | 300 | 87% | 4 | 3MB image + 10s audio |

FAQs

A: Historically GPT-4o appeared in ChatGPT and the platform, but availability depends on OpenAI’s product tiers and updates. Always check the official pricing and model pages before shipping.

A: Public and independent tests report notably lower latency and higher throughput versus older GPT-4 variants on many interactive workloads, but results vary by prompt and must be verified with your own benchmarks.

A: Benchmark it. For some deep reasoning tasks, other model variants may be stronger — don’t assume new = best for every metric.

A: Yes — it’s designed for streaming multimodal interactions with audio + images + text. Implement partial streaming and robust fallbacks in production.

Conclusion

GPT-4o (GPT-4 Omni) makes real-time multimodal interaction practical: it unifies text, audio, and vision in a single model that often reduces end-to-end latency and infrastructure overhead for interactive applications. Yet it is not a one-size-fits-all solution. Behavior and calibration are workload dependent, and past personality-tuning changes show the importance of monitoring alignment and UX drift. For practitioners: pilot with reproducible harnesses, instrument latency and accuracy on domain data, implement human-in-the-loop review for risky outputs, and publish your harness and raw logs for trust. If you follow the reproducible benchmarking plan and the operational checklist above, you’ll be well equipped to choose the right model for your workload and to publish credible, reproducible results.