GPT-4o vs Gemini 2.5 Flash — The AI Battle You Can’t Ignore

GPT-4o vs Gemini 2.5 Flash — Confused which AI is faster, cheaper, or smarter? Discover how each model excels in real-world tasks, from multimodal chats to massive document analysis, and uncover surprising trade-offs that could save time, cost, and effort while boosting your AI performance in 2026. This dossier reframes the GPT-4 vs Gemini 2.5 Flash debate in precise natural language processing terms: GPT-4o vs Gemini 2.5 Flash focusing on representational substrates, sequence modeling behavior, context capacity, GPT-4o vs Gemini 2.5 Flash compute-efficiency tradeoffs, latency vs throughput engineering, and production cost models. GPT-4o vs Gemini 2.5 Flash the goal: give architects, ML engineers, product leads, and researchers a practical, evidence-driven roadmap for picking models by workload type and deployment envelope.

Why Choosing the Right AI Model Feels Overwhelming

- GPT-4o — a generalist, multimodal transformer optimized for interactive, low-latency user-facing experiences; developed by OpenAI.

- Gemini 2.5 Flash — a Flash-class model engineered for massive context, throughput, and cost-efficiency at scale; produced by Google DeepMind.

From an systems perspective, the difference is less “better vs worse” and more “design point A vs design point B”: one emphasizes integrated multimodal representations and turn-level responsiveness; GPT-4o vs Gemini 2.5 Flash the other maximizes linearized context horizon and batch throughput.

Multimodal vs Long-Context Workloads — What Matters Most

When architects decide between models, the decision variables are best expressed in primitives:

- Input representation: How tokens (text, audio, image patches) are embedded and fused.

- Array capacity: The size and effective discharge of the context window.

- Attention & lack mechanics: Whether full dense attention, sparse attention, locality-aware attention, or memory-compressed attention is used.

- Multimodal fusion: Unified encoders vs modality-specific towers + cross-attention.

- Inference strategy: streaming, chunked, or fully batched generation; quantized kernels; and sharding patterns.

- Operational metrics: Latency (time-to-first-token), throughput (tokens/sec), and cost-per-token (input/output).

- Robustness & instruction-following: Response reliability, hallucination rate, and controllability for constrained generation.

This article maps GPT-4o and Gemini 2.5 Flash onto these primitives, then gives actionable guidance.

What is GPT-4o?

GPT-4o is a multimodal transformer family that points out real-time, low-latency cooperation and unified image learning across modalities.

- Upgrade & input pipeline: GPT-4o mostly uses a subword tokenizer for text, a learned patch-embedding or various visual tokenizers for images, and cipher spectrogram tokens for audio. These modality-specific fixes are projected into a shared latent space so mind layers can operate over confused inputs.

- Unified Thought stack: rather than totally separate, make secret per modality, GPT-4o tends toward a unified transformer stack where method tokens co-attend. This supports tight cross-modal reasoning, because image and text tokens directly influence each attention layer’s output.

- Positional and temporal concel: for dialogue and audio, relative positional encodings and temporal-aware common help maintain local unity even across long chats.

- Instruction tuning and alignment: robust supervised and RL-based instruction tuning reduces adversarial hallucination and improves instruction following. This yields higher quality on zero-shot conversational tasks and interactive code explanation use-cases.

- Inference behavior: primed for quick time-to-first-token via optimized kernels, adaptive batching, and faster decoding loops — making it feel “snappy” in chat UIs and voice interfaces.

NLP implications: GPT-4o is excellent when you require a single model to fuse visual, audio, and textual information and produce context-aware responses quickly. It’s optimized for turn-based interaction and excels at instruction and dialog-style tasks where latency and multimodal grounding matter.

What is Gemini 2.5 Flash?

Gemini 2.5 Flash is architected for scale: extremely large linearized context windows, high token throughput, and cost-efficient inference. From an NLP systems view:

- Token capacity & memory: core emphasis on extending context to near 1,000,000 tokens via context-compression algorithms (e.g., hierarchical attention, chunking + compressed representations, or external differentiable memory). This allows entire documents, books, and codebases to be input as a single conditioning stream.

- Efficient attention: Flash variants implement sparse attention patterns, locality-sensitive hashing attention, and multi-query attention optimizations to reduce quadratic memory and compute overhead.

- Streaming & batching: optimized for batched, high-concurrency workloads where many long requests are processed in parallel with high amortization of warm-up costs.

- Model quantization & kernel optimization: heavy use of lower-bit quantization, custom matmul kernels, and CPU/GPU/TPU-specific optimizations to lower inference cost per token.

- Modality approach: while capable of multimodal input, Flash’s primary value proposition is handling very long sequences and high throughput; multimodal integration is typically more modular or shallower compared to architectures built around unified fusion.

NLP implications: Gemini Flash is a go-to when your workload involves book-length summarization, whole-repository code analysis, or legal contract batch processing, where feeding everything into one conditioning context yields better coherence and fewer stitching errors.

Key characteristics — Distilled into Tradeoffs

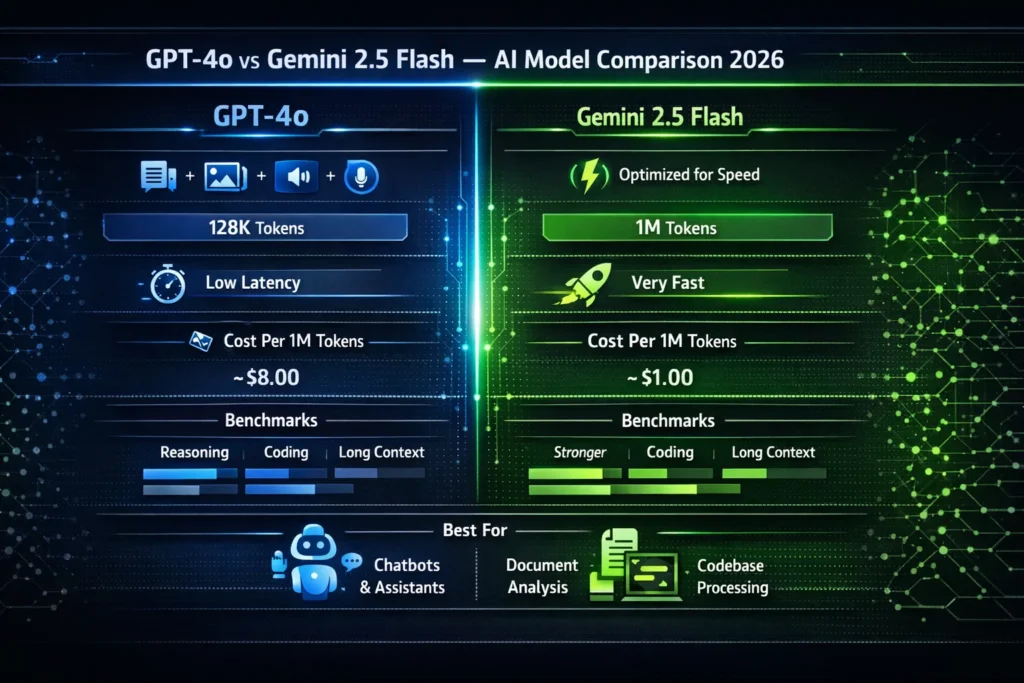

| NLP Primitive | GPT-4o | Gemini 2.5 Flash |

| Primary design target | Multimodal fusion, responsiveness | Massive context & throughput |

| Typical attention motif | Dense unified attention across modalities | Sparse/optimized attention, context compression |

| Best for | Conversation, multimodal Q&A, instruction following | Long-document understanding, batch processing |

| Inference latency | Low TTF (time-to-first-token) | Very fast per-token throughput; TTF may vary |

| Cost per token | Higher (per-token premium for fused multimodality) | Lower (economies at scale, optimized kernels) |

| Context window | ~128k tokens (effective) | Up to ~1M tokens (engineering dependent) |

Architecture comparison — “Omni” vs “Flash” in technical terms

Representation & Fusion

- GPT-4o (“Omni”): Emphasizes a single shared latent space where embeddings from disparate modalities are interleaved and processed by the same attention layers. This supports fine-grained grounding and precise cross-modal co-reference resolution (e.g., linking a phrase in speech to a region in an image).

- Gemini Flash: Often uses modality-specific frontend encoders and then applies cross-attention or lightweight fusion layers. The engineering choice favors decoupling to optimize each pipeline for throughput, but cross-modal depth may be less integrated layer-by-layer.

Attention & Scaling

- GPT-4o prioritizes dense attention for high-fidelity cross-modal interactions. Gemini Flash uses attention approximations, compression, and hierarchical memory so that the effective context grows without quadratic blowup. From an NLP standpoint: Flash trades some fine-grained layerwise cross-attention richness for vastly larger context and reduced compute per token.

Memory & Retrieval Strategies

- RAG and Retrieval Augmentation: Both families benefit from retrieval-augmented generation. Flash’s huge context window reduces the need to fetch and stitch snippets; GPT-4o’s real-time constraints encourage lightweight retrieval or cached embeddings to maintain low latency.

- Long-term memory: Gemini Flash is more natural for snapshotting entire corpora into a single conditioning context. GPT-4o fits architectures with episodic short-term context plus retrieval-backed long-term store.

Quantization & Compression

- GPT-4 often uses mixed precision and careful quantization to preserve multimodal fidelity. Gemini Flash pushes aggressively on quantization (4-bit, 3-bit research modes) and context compression (summarize-then-encode, hierarchical indexing) to hit cost targets.

Context window: Why 1M tokens Matter

The “context window” is essentially the model’s read-only working set per inference. In NLP terms:

- Large windows allow the model to model long-range dependencies without chunking, which reduces boundary artifacts, context stitching errors, and inconsistency introduced by summarization heuristics.

- A 1M-Token window is meaningful for use cases like:

- Whole-codebase static analysis

- Book-length summarization where the model can attend to exact lines and paragraphs

- End-to-end legal contract negotiation where clauses reference each other across the document

But raw context size is not the only metric — effective utilization matters. Gemini Flash uses compression and hierarchical attention to make a 1M-window actionable; GPT-4o uses efficient management and smarter chunking for tasks where shorter windows suffice.

Benchmark performance — what Metrics Matter in?

Benchmarks quantify model ability across axes that matter in applied NLP:

- Reasoning: chain-of-thought style tasks, multi-hop questions. GPT-4 often excels due to instruction tuning and better-calibrated generative reasoning.

- Coding & program understanding: both models perform well on large-code tasks; Gemini Flash’s advantage is holistic repository awareness, while GPT-4o often yields better interactive debugging dialogue.

- Reading comprehension: for short to medium documents, GPT-4o has strong performance. For book-length comprehension, Gemini Flash’s long horizon is advantageous.

- Multimodal tasks: GPT-4o typically dominates when the task requires fluent cross-modal interpretation (image+text+audio in a single flow).

Remember: benchmarks are proxies. Choose the metric that aligns with your production objective (e.g., latency, hallucination rate, or recall on legal clauses), not just headline leaderboard placement.

Speed and Latency — Engineering for UX vs Throughput

Two distinct operational goals:

- User-facing interactive systems — prioritize low time-to-first-token and smooth turn-based responses. Optimizations: small-batch decoding, pre-warmed sessions, and lower-latency serving nodes (edge TPU/GPU). GPT-4o targets this profile.

- Backend/analytical pipelines — prioritize tokens/sec and cost per token. Optimizations: large batched runs, quantized kernels, streaming generation. Gemini Flash targets this.

In practical terms:

- For chat UIs and voice assistants, pick the model with lower observed TTF and stable per-token latency under typical load. GPT-4o is engineered for this pattern.

- For corpus processing at scale, measure end-to-end throughput (including I/O, pre/post-processing). Gemini Flash will generally win on throughput and cost.

Pricing & cost Estimation

Token-based accounting remains common. For a representative cost comparison (example numbers to reason with — verify against live pricing):

- GPT-4o: input and output priced at a premium per token due to multimodal processing and lower amortized throughput.

- Gemini 2.5 Flash: lower per-token cost thanks to optimized kernels and batch economies.

Example monthly scenario (engineer these to your workload):

- 1M input tokens/day + 1M output tokens/day → 30M input + 30M output monthly.

Rough cost illustration (hypothetical figures for planning):

- GPT-4o: higher per-token rate → monthly sum significantly greater for heavy throughput use. Better when the UX value per token is high.

- Gemini Flash: lower per-token rate → better when raw token volume is the dominant cost driver.

NLP-economic recommendation: benchmark with real production data (distribution of input lengths, frequency of multimodal inputs) and simulate token mix to estimate costs precisely.

Real-world use cases

Use case: Intelligent codebase assistant

- Gemini 2.5 Flash: can load and analyze entire repositories in one pass; ideal for large-scale static analysis, dependency graphs, and global refactoring suggestions.

- GPT-4o: excels at interactive pair programming, explaining snippets, generating examples, and conversational debugging.

Use case: Customer support with multimodal inputs

- GPT-4o: receives screenshots, logs, audio messages, and produces grounded, immediate responses with suggested fixes or troubleshooting steps.

- Gemini Flash: can analyze volumes of historical tickets to compute patterns and summarize multi-month trends efficiently.

Legal contract review

- Gemini Flash: can ingest whole contract portfolios and surface cross-references and contradiction detection.

- GPT-4o: good at answering clause-specific questions and drafting human-readable explanations.

Research summarization and literature review

- Gemini Flash: ingest many papers and produce single-pass meta-summaries with citation-aware extraction.

- GPT-4o: ideal for iterative interactive exploration (e.g., “explain methods in lay terms”, then follow-up clarifications).

Hybrid Architectures — Best of Both Worlds

A common pattern: combine models to leverage complementary strengths. Typical hybrid design:

- Frontend (interactive): User-facing chat powered by GPT-4o for instant, multimodal replies.

- Backend (heavy lifting): Gemini Flash runs batch analytics, creates compressed summaries or indices, and stores them in vector databases or RAG pipelines.

- Orchestration: A routing layer decides by request type — short interactive query → GPT-4o; long-document ingest or whole-repo analysis → Gemini Flash.

- Cache & indexing: Store long-context compressed summaries produced by Flash, then let GPT-4o query the index for low-latency retrieval and explanation.

This hybrid approach minimizes cost while maximizing UX and capacity.

Implementation tips for ML Engineers

- Measure token distribution: Instrument your client to capture the token histogram (input & output). Use this to estimate costs and choose batching strategies.

- Adaptive chunking & summarization: For models with smaller windows, implement hierarchical chunking with learned summarizers to preserve semantic fidelity.

- Use semantic retrieval: Vector similarity search + reranking reduces token usage by narrowing the scope before sending to the model.

- Monitor hallucination & calibration: Track factuality on critical tasks; consider constraint mechanisms (extraction-first then generative explanation).

- Optimize latency: Pre-warm sessions, pin critical endpoints on low-latency hardware, and use streaming responses for progressive UX.

- Quantization & distillation: Evaluate lower-bit quantized inference for batch tasks; consider teacher-student distillation for specialized, smaller models when latency and cost are paramount.

- Security & privacy: When handling regulated data, push processing to enterprise variants with regional data residency and contractual guarantees.

EU & Global Deployment — Compliance and Operational Guards

When deploying across regions, consider:

- Data residency: Check model provider enterprise contracts for regional deployment options and logs retention policies.

- GDPR & explainability: Expose mechanisms for users to request explanation or deletion of outputs where applicable.

- Model governance: Maintain model cards, evaluation results on safety tests, and an incident response plan for model failures.

FAQs

A: Not always. GPT-4o excels at multimodal, low-latency tasks. Gemini Flash shines on huge documents or codebases with lower cost.

A: Yes, Flash variants can handle up to 1M tokens using attention and memory optimizations, though real performance depends on data type and processing.

A: Gemini Flash is usually cheaper per token at scale. GPT-4o costs more due to multimodal processing and low-latency optimizations.

A: Gemini Flash is best for full repo analysis. GPT-4o is better for interactive coding, debugging, and explanations.

A: Yes. Combine GPT-4o for interactive queries and Gemini Flash for long-context processing to save costs and boost performance.

Deep-Dive — Mechanisms Behind the Numbers

Tokenization and its downstream impact

Tokenization choices affect effective context utilization. Subword models reduce token counts for repeated vocabulary but may inflate counts for rare tokens. For long-document tasks, prefer vocabularies and tokenization schemes that compress natural language without losing structural fidelity (e.g., byte-level BPE variants tuned for source code when processing codebases).

Attention compression & hierarchy

Scaling to million-token contexts requires attention to rethinking:

- Local + global attention: attend densely to nearby and sparsely to distant contexts.

- Compressed memory vectors: summarize chunks into compact vectors that the model can attend to instead of raw tokens.

- Recurrence & sliding windows: maintain a rolling state with compressed snapshots to capture long-range dependencies.

These techniques reduce compute while preserving cross-chunk coherence.

Retrieval as “soft memory.”

For both models, retrieval-augmented generation (RAG) remains critical. Build semantic indices and use hybrid retrieval (dense + lexical) to ensure high recall. Flash’s long windows reduce the frequency of retrieval but may still benefit from retrieval for freshness and external grounding.

Practical evaluation plan — how to choose in 6 steps

- Define core tasks: conversation, summarization, code analysis, multimodal QA, etc.

- Collect representative data: sample real inputs and expected outputs.

- Run side-by-side tests: measure latency, throughput, accuracy (task-specific metrics), and hallucination rates.

- Estimate cost: compute token mix and pricing scenarios for both models.

- Simulate production load: include concurrency, cold starts, and caching.

- Decide hybridization: map request types to the optimal model based on measured metrics.

Risk & Mitigation

- Hallucination: Mitigate with retrieval, constrained decoding, and post-hoc verification pipelines.

- Data leakage: Use enterprise offerings with contractual guarantees.

- Model updates: Version and pin model variants to avoid drift; maintain canaries and A/B tests when upgrading.

- Cost overruns: Implement throttling, quota enforcement, and cost alarms.

Example architecture patterns

Pattern A: Interactive AI assistant (low-latency)

- Edge load balancer → GPT-4o service for live responses

- Vector DB for short-term session context (cached)

- Flash backend runs nightly book- or repo-level analysis and populates the index

Pattern B: Analytical pipeline (high-volume)

- Bulk ingestion → Gemini Flash batch jobs with quantized inference → compressed summaries into datastore → GPT-4o serves explanations on top of summaries

Conclusion — Which AI Model Should You Actually Choose?

- If your product hinges on user experience, multimodal understanding, and immediate feedback loops → GPT-4o is the primary candidate.

- If your workload is dominated by very long context and bulk processing, where per-token economics matter → Gemini 2.5 Flash is likely more cost-effective.

- For most production systems that need both, adopt a hybrid, using Flash for index-building and heavy analysis while keeping GPT-4o as the interactive explanation and UX surface.