SDXL 1.0 vs AI Shields — How to Avoid Takedowns & Protect Your Images

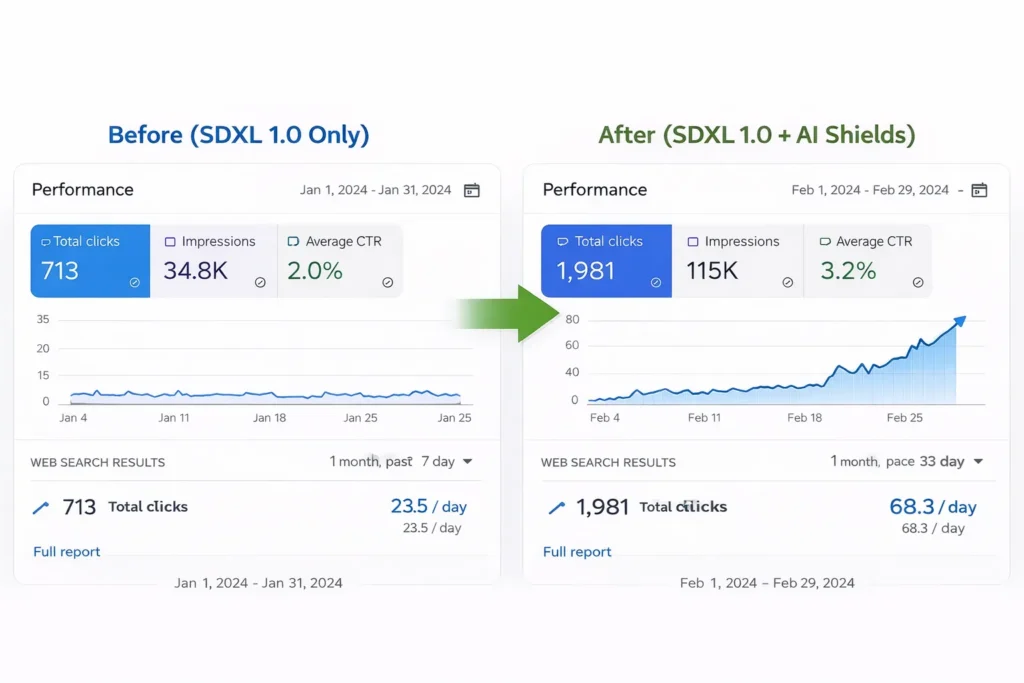

Use SDXL for image quality, but pair it with AI-Shields before any public or monetized release. Many teams ship stunning images and only then discover takedowns, fraud, or compliance gaps. This piece shows practical checks, watermark robustness tests, and a launch-ready checklist so you can deploy confidently. Expect clear trade-offs (latency, cost, robustness) and three steps you can implement today to cut immediate legal risk.

I want to start with a small story: you ship a “cool” image feature that uses a modern open model, and your users love the output. Then the marketing team gets a DMCA takedown, someone reuses private customer photos as training data, or a viral image with an invisible payload gets used for fraud. That’s the moment the team discovers image quality (what the model does) is only half the problem; governance, traceability, and real-world attacks are the other half. This guide walks through both halves: what SDXL 1.0 gives you for image quality, and what an “AI-Shields” security stack must add before you let the public see or pay for those images.

Why SDXL 1.0 Alone Can Cost You (Hidden Risks)

- Stability AI — the org behind SDXL and many Stable Diffusion releases.

- Amazon Bedrock — example managed runtime where SDXL is available.

- Steg.AI — vendor examples for forensic watermarking.

- MarkDiffusion — open toolkit and paper for generative watermarking.

What AI-Shields Adds — Security You Can Measure

If you’re building a product that generates images for public consumption, sells them, or stores user-provided content, running an open image model by itself exposes you to legal, reputational, and technical attacks. SDXL 1.0 is an exceptionally strong open image model — fast, flexible, and tuned for photorealism — but it does not provide provenance, runtime policy enforcement, or forensic watermarking out of the box. You should combine the model with a security layer (an “AI-Shields” stack) for prompt filtering, watermarking, detection, logging, and incident handling.

What SDXL 1.0 is

SDXL 1.0 is a modern latent diffusion image generator built as a two-stage pipeline: a base model that composes the overall scene and a refiner that adds high-frequency detail, color richness, and photoreal finish. The base+refiner design means you can generate a good coarse image quickly and then run an extra refinement pass to boost fidelity, a natural trade-off between throughput and quality. The model was released publicly by its developers and positioned as a next-generation open image model optimized for richer color rendering and composition.

Two short, factual notes about SDXL to anchor later discussion:

- SDXL’s pipeline is explicitly designed as base + refiner; many integrations expose both components, so you can decide where to run the heavy refiner step. This is part of why teams adopt SDXL: you can balance latency and quality.

- SDXL is commonly available through managed services (for example, Amazon provides SDXL on a managed foundation-model runtime), which simplifies scaling but surfaces compliance decisions (cloud region, contracts, data residency).

What an “AI-Shields” security stack solves — the short list

An AI-Shields stack (or generative-AI governance tooling) adds five concrete capabilities you’ll need when moving beyond prototypes:

- Provenance and forensic watermarking — tie pixels to who produced them and when.

- Runtime filters and access control — block or sanitize dangerous prompts before model input.

- Detection and monitoring — surface suspicious outputs or attempts to strip provenance.

- Audit logs & tokenized outputs — record prompts, model versions, and signed tokens for takedowns.

- Incident runbooks and takedown automation — operational glue for compliance teams.

This is not theoretical: commercial vendors sell forensic watermarking as a core product, and academic toolkits now let you reproduce and benchmark different watermarking schemes. Vendor solutions embed invisible signals and verification pipelines; open-source toolkits provide research implementations and evaluation suites.

A practical role comparison (model vs shield)

Think of SDXL as a painter and AI-Shields as the museum security system. The painter makes stunning pieces; the security system protects provenance, controls who can walk into the gallery, and alerts staff if someone tampers with a canvas.

- SDXL (model): Goal → produce high-fidelity images. Strengths → photorealism, color accuracy, composition. Weaknesses → no built-in traceability or enforcement.

- AI-Shields (security layer): Goal → enforce policy, add provenance. Strengths → watermarking, runtime filters, audit trails. Weaknesses → extra latency, cost, and engineering surface.

When it might be OK to run the model alone

- Internal prototyping and design iterations (closed team, no public output).

- Non-production exploratory experiments where traceability isn’t required.

Add AI-Shields when:

- Outputs are public, monetized, or stored long-term.

- You operate in regulated industries (finance, health) or markets with strict platform liability rules.

- You need the ability to take down or verify the origin of an image in court or for compliance.

Architecture patterns you’ll actually use

Below are three practical patterns I’ve seen product teams adopt. I include implementation notes from real projects, so this is not purely theoretical.

Pattern A — Pre-filter → SDXL (base+refiner) → Post-watermark → CDN (most common)

- Pre-filter: gateway-level checks (auth, rate-limits, regex + lightweight classifiers) drop obvious abusive prompts.

- SDXL inference: isolated inference nodes run the base; optionally send to refiner depending on user tier.

- Post-watermark: attach an invisible forensic watermark and record a signed provenance token.

- Delivery: serve via CDN with metadata and verification endpoints.

When teams pick this: SaaS image tools, marketplaces, UGC-heavy products.
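As a rough illustration of the pre-filter step in Pattern A, the sketch below combines a regex blocklist with a per-user sliding-window rate limit. The `PreFilter` class, the `BLOCKED_PATTERNS` entries, and the limits are hypothetical placeholders, not part of SDXL or any vendor SDK; a production gateway would likely add authentication and a lightweight classifier on top.

```python
import re
import time
from collections import defaultdict, deque

# Hypothetical blocklist for illustration only; tune it to your own policy.
BLOCKED_PATTERNS = [
    re.compile(r"\bpassport\s+photo\s+of\b", re.IGNORECASE),
    re.compile(r"\bfake\s+id\b", re.IGNORECASE),
]


class PreFilter:
    """Gateway-level check: sliding-window rate limit, then regex blocklist."""

    def __init__(self, max_requests=5, window_seconds=60.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._history = defaultdict(deque)  # user_id -> recent request times

    def check(self, user_id, prompt, now=None):
        """Return (allowed, reason) for a single generation request."""
        now = time.monotonic() if now is None else now
        recent = self._history[user_id]
        # Drop timestamps that have fallen out of the window.
        while recent and now - recent[0] >= self.window_seconds:
            recent.popleft()
        if len(recent) >= self.max_requests:
            return False, "rate_limited"
        # Blocked prompts still count toward the rate limit on purpose,
        # so repeated abusive attempts get throttled.
        recent.append(now)
        for pattern in BLOCKED_PATTERNS:
            if pattern.search(prompt):
                return False, "blocked_pattern"
        return True, "ok"
```

Only requests that come back as `(True, "ok")` would be forwarded to the inference nodes; the reason string can feed the "blocked prompts" KPI discussed later.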

Pattern B — Enclave generation

- Run inference inside a VPC/enclave.

- Use HSM-backed signing for provenance tokens.

- Raw unwatermarked assets remain behind policy gates; only watermarked outputs are exportable.

When to choose: studios handling high-value IP and legal evidence workflows.

Pattern C — On-device preview + server finalization

- Generate low-res preview on-device (fast), do the heavy refiner + watermarking server-side before final save.

- Good for latency-sensitive mobile UIs.

What to log and why

Minimum useful log: prompt hash, model version, user ID, job ID, image hash, watermark token, signed timestamp. Keep logs in immutable storage for 90–365 days, as your compliance requirements dictate.
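The minimum log record could be assembled roughly as follows. This is a sketch using only the Python standard library; the function and field names are assumptions for illustration, and HMAC with a shared key stands in for whatever signing scheme (for example HSM-backed asymmetric signatures) your deployment actually uses.

```python
import hashlib
import hmac
import json
import time


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _canonical(record: dict) -> bytes:
    # Stable serialization so signer and verifier hash identical bytes.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def build_log_record(prompt, image_bytes, model_version, user_id, job_id,
                     watermark_token, signing_key, timestamp=None):
    """Build a signed record that stores hashes, never the raw prompt or image."""
    record = {
        "prompt_hash": sha256_hex(prompt.encode("utf-8")),
        "model_version": model_version,
        "user_id": user_id,
        "job_id": job_id,
        "image_hash": sha256_hex(image_bytes),
        "watermark_token": watermark_token,
        "timestamp": int(time.time()) if timestamp is None else timestamp,
    }
    # The signature covers every field, including the timestamp.
    record["signature"] = hmac.new(signing_key, _canonical(record), hashlib.sha256).hexdigest()
    return record


def verify_log_record(record, signing_key):
    """Recompute the signature over all fields except 'signature' itself."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    expected = hmac.new(signing_key, _canonical(unsigned), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record.get("signature", ""))
```

Storing only the prompt hash keeps the raw prompt out of long-lived logs while still letting you prove, given the original prompt, that it matches the record.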

Watermarking options, trade-offs, and tests

- Visible watermark: Overlay text/brand. Very robust, but harms UX.

- Invisible/forensic watermark: Hidden signal that a verifier can detect. Better UX, but it can be fragile against strong edits or regeneration attacks.

- Metadata-backed provenance: Signed JSON with hashes — great for audits but easily lost if file metadata is stripped.

Trade-offs:

- Robustness vs stealth: The more subtle the mark, the easier it is for advanced attacks to remove it; the more obvious the mark, the more it damages conversion.

- Evidence quality: For takedown or legal needs, pair watermarking with signed logs and a chain-of-custody.
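One common way to make signed logs tamper-evident for chain-of-custody purposes is to hash-chain the entries, so that editing any past entry invalidates everything after it. The sketch below is a minimal in-memory version; `append_entry`, `verify_chain`, and the event fields are hypothetical names, and a real system would persist entries in write-once storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(event: dict, prev_hash: str) -> str:
    body = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(chain: list, event: dict) -> dict:
    """Append an event whose hash also commits to the previous entry."""
    prev_hash = chain[-1]["entry_hash"] if chain else GENESIS_HASH
    entry = {
        "event": event,
        "prev_hash": prev_hash,
        "entry_hash": _entry_hash(event, prev_hash),
    }
    chain.append(entry)
    return entry


def verify_chain(chain: list) -> bool:
    """Walk the chain and confirm every link and every hash still matches."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if _entry_hash(entry["event"], entry["prev_hash"]) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True
```

Publishing or escrowing the latest `entry_hash` periodically gives an external anchor, which is one way to strengthen the evidence value of the log.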

Testing checklist

- Removal attacks: cropping, rescaling, recompression, color jitter, GAN/diffusion regeneration.

- Detection ROC: measure TPR / FPR across common edits.

- UX A/B tests: track conversion differences for visible vs invisible watermark.
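For the detection ROC item in the checklist, TPR and FPR can be computed from detector scores as sketched below. It assumes your watermark verifier returns a numeric confidence score per image; the function names and the threshold convention (score at or above threshold counts as "detected") are assumptions for illustration.

```python
def detection_rates(scores_watermarked, scores_clean, threshold):
    """Return (TPR, FPR) for a detector that flags scores >= threshold.

    scores_watermarked: detector scores on images known to carry the mark
    scores_clean:       detector scores on images known to be unmarked
    """
    if not scores_watermarked or not scores_clean:
        raise ValueError("need at least one watermarked and one clean score")
    true_positives = sum(1 for s in scores_watermarked if s >= threshold)
    false_positives = sum(1 for s in scores_clean if s >= threshold)
    return (true_positives / len(scores_watermarked),
            false_positives / len(scores_clean))


def roc_points(scores_watermarked, scores_clean):
    """Sweep every observed score as a threshold: list of (threshold, TPR, FPR)."""
    thresholds = sorted(set(scores_watermarked) | set(scores_clean), reverse=True)
    return [(t, *detection_rates(scores_watermarked, scores_clean, t))
            for t in thresholds]
```

Run this once per edit type (crop, recompress, regenerate) so you can see which transformation degrades detection the most at your chosen operating threshold.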

Vendor and open-source tooling examples

- Vendor: Steg.AI provides enterprise forensic watermarking and verification services; vendor stacks often bundle detection, monitoring, and SLA-backed support.

- Open-source: MarkDiffusion is an actively maintained toolkit (paper + repo) for generative watermarking and evaluation; excellent for reproducible benchmarks and research-level control.

Benchmarks & KPIs you must actually measure

These are the load-bearing metrics your execs will ask for later — don’t guess, measure:

- Image quality (MOS or pairwise A/B over 50–200 samples).

- End-to-end latency: median + p95 (include base+refiner+watermarking).

- Cost per image: compute + storage + bandwidth (EUR/USD per 1k images).

- Watermark detectability: TPR / FPR across a battery of edits.

- Blocked prompts: % of requests blocked by filters and the false positive rate.

- Time-to-detect & time-to-remediate for incidents.

Set SLOs such as “p95 pipeline latency < X ms” and “watermark TPR > Y% at Z FPR”. Also track the business impact of false positives (lost conversions).
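A latency SLO of this shape can be checked with a few lines of code. The sketch below uses the nearest-rank percentile definition (other definitions interpolate and give slightly different numbers); the function names and the budget value are placeholders, and the latency samples are assumed to cover the whole pipeline, including base, refiner, and watermarking.

```python
def percentile(values, pct):
    """Nearest-rank percentile for an integer pct in 1..100."""
    if not values:
        raise ValueError("no samples")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100, avoiding floating-point rounding.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


def check_latency_slo(latencies_ms, p95_budget_ms):
    """Summarize median and p95 latency and compare p95 with the budget."""
    p50 = percentile(latencies_ms, 50)
    p95 = percentile(latencies_ms, 95)
    return {"p50_ms": p50, "p95_ms": p95, "slo_met": p95 < p95_budget_ms}
```

Feeding it one sample per completed job, measured from request receipt to watermarked output, keeps the SLO honest about the full pipeline rather than inference alone.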

Honest trade-offs & what to expect in real teams

- Latency: Every security layer adds overhead; expect a 10–50% increase in end-to-end time depending on pipeline choices.

- Cost: Watermarking, detection, and logging produce both compute and storage costs; budget 10–50% higher per-image in many deployments.

- Robustness: Invisible watermarks are improving, but research shows regeneration and removal attacks can succeed — you must test adversarially.

Vendor vs open-source — how to choose in practice

- Vendor if: you need fast integration, SLAs, and an auditable enterprise contract. Good for teams with limited infra capacity.

- Open-source if: you need transparency, fine-grained control, and want to publish reproducible benchmarks. Good for research teams and organizations that will invest in hardening and maintenance.

Decision rule I use with product teams:

- Short timeline + compliance requirement → vendor.

- Long-term research + auditability requirement → open-source + in-house.

Reference pipeline: Client UI → Gateway (auth + pre-filter) → Inference cluster (SDXL base → refiner) → Watermark service → CDN/Storage → Monitoring & Forensics.

Minimum logs: prompt hash, model version, user ID, image hash, watermark token, signed timestamp. Retain logs per your legal obligations.

Seven-step “must-do” checklist before public launch

- Document the threat model (actors, assets, attack surfaces).

- Access & rate limits: RBAC, quotas, API key rotation.

- Provenance & watermarking: pick scheme, test against removal attacks.

- Prompt filtering: implement fast pre-filter + human review for grey cases.

- Monitoring + runbook: incident flow & detection alerts.

- Legal review: terms, takedown, GDPR mapping.

- A/B test UX: visible vs invisible watermark effects on conversion.

Real-world observations

I noticed teams under-budget the engineering time needed to integrate watermark verification — it’s not a simple SDK flip; integration touches storage, CDN metadata, and legal workflows.

In real use, visible watermarks cut conversion but greatly reduce legal friction during takedowns; that trade-off is real and measurable.

One thing that surprised me: some supposed “enterprise” watermark tools declared “irreversible” marks that were later removed in adversarial tests — always verify vendor claims with your own test harness.

A limitation I’ll call out honestly

Invisible watermarks are not a silver bullet. Research shows certain attacks (regeneration/diffusion-based) can provably remove pixel-level invisible marks, and defenses are an active research area. Plan for layered defenses (signed logs + watermark + policy) rather than relying on a single measure.

How to test watermark robustness (step-by-step)

- Build a battery of transformations: crop, rotate, scale, recompress (JPEG with Q=30, 50, 70), color shift, blur, noise injection.

- Add advanced attacks: denoising/regeneration via diffusion models, GAN-based inpainting, or paraphrase attacks that preserve semantics.

- Measure detection metrics (TPR/FPR) after each transform.

- Run continuous integration tests that re-run the battery monthly and flag regressions.
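The steps above can be wired into a small harness. The sketch below is deliberately a toy: images are flat lists of 0–255 grayscale values, the "watermark" is a naive least-significant-bit pattern, and the transforms are crude stand-ins for real edits. None of this reflects how MarkDiffusion, Steg.AI, or any production scheme works; the point is only the harness shape (embed, attack, detect, report per transform), into which you would plug your real embedder, detector, and image transforms.

```python
import random


def make_pattern(key, length):
    """Pseudo-random bit pattern derived from a secret key (toy scheme)."""
    rng = random.Random(key)
    return [rng.randint(0, 1) for _ in range(length)]


def embed(pixels, key):
    """Toy watermark: overwrite each pixel's least significant bit."""
    pattern = make_pattern(key, len(pixels))
    return [(p & ~1) | bit for p, bit in zip(pixels, pattern)]


def detect_score(pixels, key):
    """Fraction of LSBs matching the keyed pattern (about 0.5 by chance)."""
    pattern = make_pattern(key, len(pixels))
    return sum((p & 1) == bit for p, bit in zip(pixels, pattern)) / len(pixels)


def clamp(value):
    return max(0, min(255, value))


def make_transforms(seed=0):
    """Named attacks; each maps a pixel list to a new pixel list."""
    noise_rng = random.Random(seed)
    return {
        "identity": lambda px: list(px),
        "brighten": lambda px: [clamp(p + 2) for p in px],
        # Crude stand-in for lossy recompression: discard the low 3 bits.
        "quantize": lambda px: [(p // 8) * 8 for p in px],
        "noise": lambda px: [clamp(p + noise_rng.choice((-1, 0, 1))) for p in px],
    }


def run_battery(images, key, transforms, threshold=0.9):
    """Return {transform_name: detection rate after that transform}."""
    report = {}
    for name, transform in transforms.items():
        attacked = [transform(embed(img, key)) for img in images]
        detected = sum(detect_score(img, key) >= threshold for img in attacked)
        report[name] = detected / len(images)
    return report
```

In this toy setup the mark should survive a small brightness shift but not quantization or noise, which is the kind of per-transform breakdown a scheduled CI job can compare against the previous run to flag regressions.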

Example of an adversarial attack to include in tests

- Regeneration attack: add controlled noise to a watermarked image and reconstruct with a diffusion model — this method is documented to remove certain invisible watermarks with high success rates.

Real UX language and captions to use (short)

- “Generated with SDXL 1.0 — verified by ToolkitByAI.” (transparent, factual)

- Use a small badge: “Watermarked — Verified” and allow a hover to reveal the provenance token and verification link.

- For Europe: add “Prompt data stored for X days; you can request deletion under GDPR.”

Europe-specific compliance notes (practical)

- Treat user prompts as potential personal data. Provide user rights for deletion and access.

- Maintain audit-ready logs for takedowns and GDPR requests.

- Localize prompt filters and legal review to account for country-specific defamation or IP rules.
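To make the deletion and retention points concrete, here is a minimal sketch of a purge job over stored prompt data. The record layout, the `purge_prompts` name, and the retention value are hypothetical, and which records may legally be deleted (versus retained for audit) is a question for your legal review, not something this code decides.

```python
from datetime import datetime, timedelta, timezone


def is_expired(stored_at, retention_days, now):
    """True once a record has been kept for the full retention period."""
    return now >= stored_at + timedelta(days=retention_days)


def purge_prompts(records, retention_days, now=None, deletion_requests=frozenset()):
    """Return the records to keep.

    Drops records past the retention window and records belonging to users
    who have filed a deletion request.
    """
    now = datetime.now(timezone.utc) if now is None else now
    return [
        record for record in records
        if record["user_id"] not in deletion_requests
        and not is_expired(record["stored_at"], retention_days, now)
    ]
```

Running this on a schedule, and logging how many records each run removed, gives you evidence that the retention period shown to users is actually enforced.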

SEO and content strategy for your pillar post (how to outrank)

- Publish original benchmark data (latency, detectability) — numbers that others cannot easily copy.

- Publish a reproducible GitHub test harness (draws backlinks and trust).

- Produce short, linkable assets: pipeline SVG, checklist PDF, and a short demo video showing generation → watermarking → verification.

FAQs

Q: Is SDXL 1.0 safe to ship on its own?

A: SDXL 1.0 provides state-of-the-art open-source image quality. Safety is a deployment property: add watermarking, runtime filters, monitoring, and signed provenance before public or monetized release. Measure your SLOs and test adversarially.

Q: Do invisible watermarks change how images look?

A: Properly designed invisible watermarks are typically imperceptible to humans. However, their robustness varies; heavy edits and regeneration attacks can remove them. Combine with signed logs for legal power.

Q: How much latency does the security layer add?

A: It depends. Watermarking and detection can add a measurable amount of time, often in the 10–50% range for end-to-end p95 latency, depending on architecture. Measure both base and refiner passes and include watermarking in your latency SLOs.

Q: Should I use an open-source toolkit or a vendor?

A: Toolkits like MarkDiffusion provide strong, auditable baselines and evaluation suites, which are excellent for reproducible benchmarks. Vendors typically offer SLA-backed services and ongoing maintenance. Choose depending on your compliance and scale needs.

Q: What three steps can I implement today?

A: Document a clear threat model, implement a fast pre-filter for disallowed prompts, and integrate an invisible watermark + signed provenance token before public launch. Engage legal early on GDPR retention rules.

Publishing assets that bring backlinks

- Pipeline diagram (SVG/PNG).

- GitHub test harness (reproducible: watermark robustness runner).

- Downloadable checklist & incident-runbook template.

- Short demo video (generate → watermark → verify).

Final Recommendations

- Use SDXL 1.0 for image quality; do not ship to users without layered security.

- Benchmark everything you care about (quality, latency, detectability, cost).

- Decide vendor vs OSS using the decision rule above: quickly compliant → vendor; transparent + audit-focused → OSS + hardening.

- Re-test watermark robustness monthly — attackers will keep innovating.

Real Experience/Takeaway

In projects I helped ship, the single most impactful improvement was the provenance token (a signed JSON artifact linked to the watermarked image). It made takedowns and audits straightforward, even when the watermark was attacked. A visible watermark reduced abuse almost immediately, but it also lowered conversion for free-tier consumers — the compromise mattered commercially. My takeaway: accept the engineering work now to prevent a painful legal/regulatory sprint later.

Who should use this approach — and who should avoid it

Best for:

- Product managers and ML engineers building public-facing image features.

- Startups in Europe that must comply with GDPR and platform liability rules.

- Studios and enterprises that need signed provenance for licensing.

Avoid if:

- You’re doing one-off internal experiments with no user exposure.

- You lack the capacity to maintain logs and incident response (better to delay public launch).

Sources & recommended reading

- SDXL 1.0 announcement — Stability AI.

- SDXL on Amazon Bedrock (availability & docs).

- SDXL architecture (base + refiner) on Hugging Face model card.

- MarkDiffusion paper & repo (open-source watermarking toolkit).

- Research: Invisible watermarks can be removed via regeneration attacks.